Automated Reporting

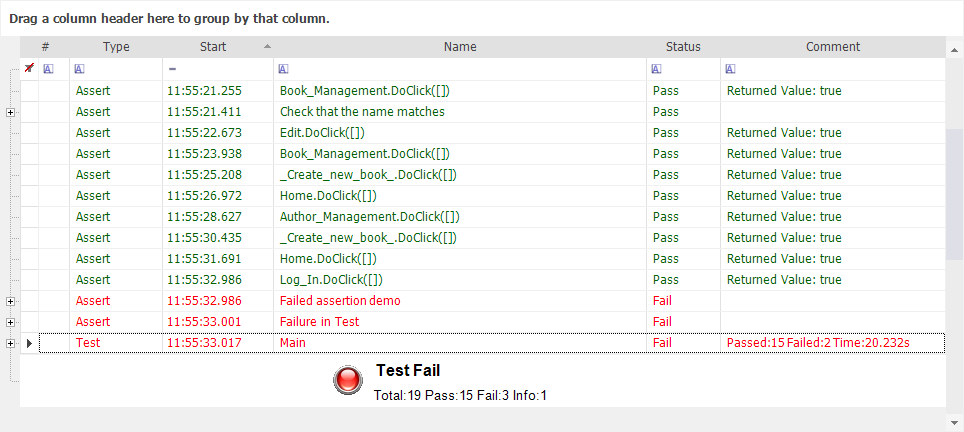

Each time you play back a test, Rapise automatically generates a report detailing the steps of the test, the data values used, and the outcome of each step.

The first row (with a white background) is used for Report Filtering. The rows below that each represent a step in the test. The rows with green text represent success; the rows with red text represent failure. You can reposition the columns by dragging and dropping the column names.

Key features of the Rapise reporting system:

- Detailed reporting that includes actions performed, verification points and errors found

- Customizable reporting, with the ability to add columns, rows, and steps from within the test script

- Multiple export formats, including Microsoft Excel, Adobe Acrobat PDF, Perl TAP, and XML

- Ability to report automated test executions back to enterprise test management tools

Writing to the Report

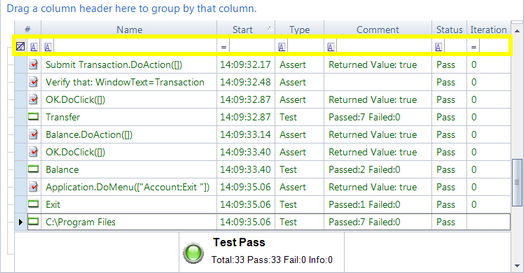

In addition to the standard report data, you can write to individual columns, create columns, and add data to the report by adding commands to your test script:

- You can add data by using verification points or by adding Tester.Assert commands

- You can add custom columns by using the PushReportAttribute and SetReportAttribute commands
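
The commands named above might be used along the following lines. This is a minimal sketch, not a reference for the Rapise API: the `Tester` object here is a stand-in defined only so the snippet runs under Node.js, the parameter order of each command is an assumption, and the `Browser` column and the cart check are hypothetical. The stand-in also treats `PushReportAttribute` and `SetReportAttribute` identically, which may not match their real behavior.

```javascript
// Stand-in for the Tester object so this sketch runs outside Rapise.
// Inside Rapise the runtime provides Tester; do not define it there.
// All parameter lists below are assumptions, not confirmed signatures.
const reportRows = [];
const attributes = {};

const Tester = {
  // Assumed shape: a description, then the condition being checked.
  Assert(description, condition) {
    const passed = Boolean(condition);
    // Each row records the custom column values in effect at the time.
    reportRows.push({ description, passed, ...attributes });
    return passed;
  },
  // Assumed shape: a column name, then the value for the rows that follow.
  SetReportAttribute(name, value) {
    attributes[name] = value;
  },
  // Simplified here to behave exactly like SetReportAttribute.
  PushReportAttribute(name, value) {
    attributes[name] = value;
  },
};

// Tag the rows that follow with a custom "Browser" column (hypothetical name).
Tester.SetReportAttribute("Browser", "Chrome");

// Add a row to the report for a check made by the script.
const cartTotal = 3 * 19.99;
Tester.Assert(
  "Cart total is computed for three items",
  Math.abs(cartTotal - 59.97) < 0.001
);

// Change the custom column value for a later group of steps.
Tester.PushReportAttribute("Browser", "Firefox");
Tester.Assert("Cart is not empty", cartTotal > 0);

console.log(reportRows);
```

In a real test script only the calls on `Tester` would remain, and the exact arguments each command accepts should be checked against the Rapise documentation.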

Report Filtering

Report Filtering lets you specify criteria to filter your view of the test execution report. Rows that do not match your criteria are hidden.

You can filter the report view while the file is open. Directly above the first row of the report, there is a row of filter cells. Each cell has a matching-criteria button, a text box for entering a filter value, a drop-down menu of predefined filter values, and a clear button.

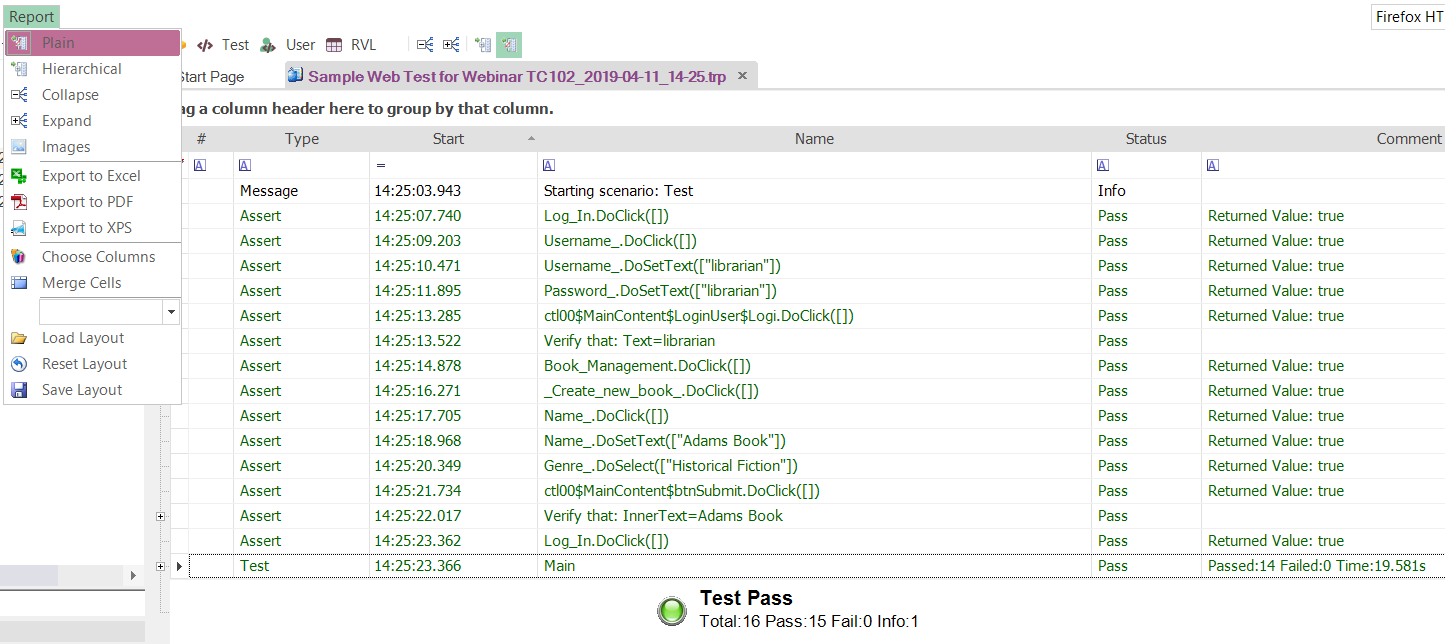

Export Options

The native format of Rapise reports is XML, with an open XML schema that other tools and systems can parse programmatically. In addition, Rapise can report in the lightweight, plain-text Perl TAP (Test Anything Protocol) format, which is understood by many automation systems and build servers.
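
To illustrate why a plain-text format like TAP is easy for other tools to consume, here is a minimal sketch that counts passes and failures in a TAP stream. It relies only on the basic TAP conventions (a `1..N` plan line and `ok` / `not ok` result lines); the function name `summarizeTap` and the sample steps are hypothetical, and the exact output Rapise produces may include details this sketch ignores.

```javascript
// Summarize a TAP (Test Anything Protocol) stream: count passes and failures.
// Directives such as "# SKIP" or "# TODO" are not interpreted in this sketch.
function summarizeTap(text) {
  const summary = { planned: null, passed: 0, failed: 0, failures: [] };
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.trim();
    // Plan line, e.g. "1..3", announces how many tests to expect.
    const plan = /^1\.\.(\d+)/.exec(line);
    if (plan) {
      summary.planned = Number(plan[1]);
      continue;
    }
    // Result line: "ok" or "not ok", optional number, optional description.
    const result = /^(not )?ok\b\s*(\d+)?\s*-?\s*(.*)$/.exec(line);
    if (!result) continue; // skip diagnostics ("# ...") and anything else
    if (result[1]) {
      summary.failed += 1;
      summary.failures.push(result[3]);
    } else {
      summary.passed += 1;
    }
  }
  return summary;
}

// Hypothetical sample; the step descriptions in a real report will differ.
const sampleTap = [
  "1..3",
  "ok 1 - Open login page",
  "not ok 2 - Verify welcome message",
  "ok 3 - Log out",
].join("\n");

const tapSummary = summarizeTap(sampleTap);
console.log(tapSummary);
```

A build server could apply the same idea to fail a build whenever the failure count is greater than zero.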

For management reporting, Rapise lets you quickly export the formatted test reports to Microsoft Excel, Microsoft XPS, or Adobe Acrobat PDF.

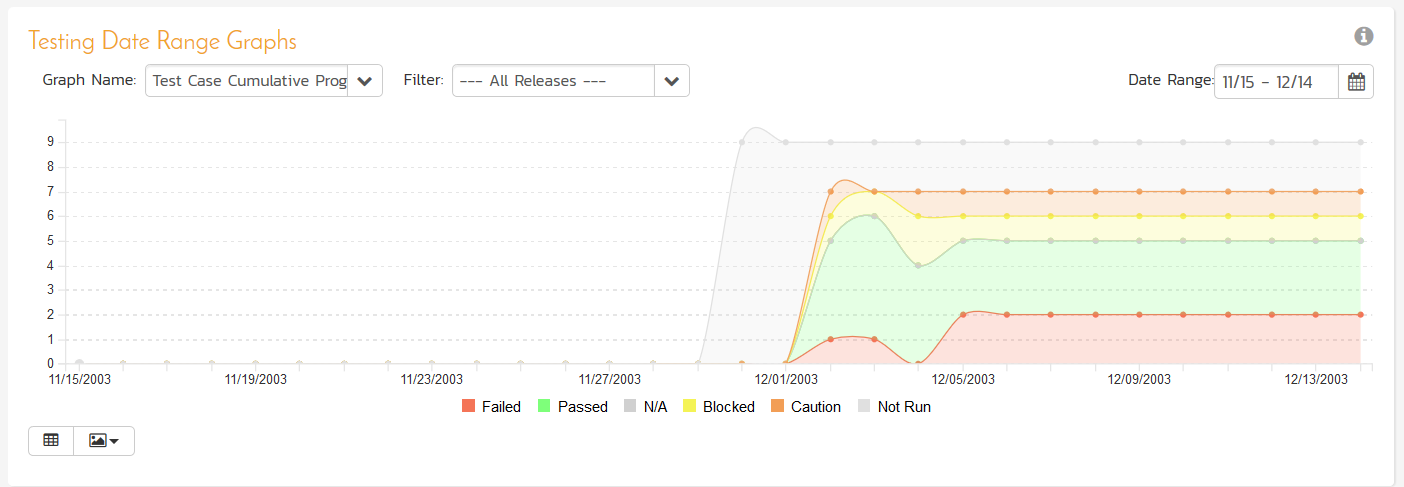

Test Management Reporting

Being able to report on individual test cases is just the start. When you use Rapise in conjunction with our SpiraTest test management system, you can feed results from Rapise into your enterprise reporting of test metrics.

When you use Rapise and SpiraTest together, you can view the results of individual test executions, track testing metrics per release and per iteration/sprint, and analyze results across different platforms and technology combinations to reveal trends and patterns of failure.
