Abstract

There are many possible root causes for serious IT project failure. The complexity of the software development process, whether it be a traditional waterfall-type model or an Agile approach, makes this inevitable.

We shall consider one type of serious error: inadvertent data loss during a software or system upgrade. We shall do this by examining some of the possible flaws in a specific government IT project, and in doing so, remind ourselves of some fundamental lessons to be learned from project failure.

Treating Data Loss Seriously

Say "Government IT failure" and most people today will think of Healthcare.gov, the web site supporting the Affordable Care Act. But here in the nation's capital, problems with the re-hosting of the Washington DC Library's on-line catalog have gone largely unnoticed, which is remarkable when you consider that, as part of this major upgrade, significant amounts of user data were lost.

Library users who spent many hours, perhaps over many years, saving their favorite titles for future reading enjoyment, suddenly found that after the upgrade, all their favorites were gone. Vanished. Not a trace! And this was no simple loss of hyperlinks to lists or temporary re-hosting of user information; no, the lists that had been carefully and painstakingly built up over time were forever erased. Favorites, records of books previously read and cherished, wish-lists short and long, all have been dumped into the Recycle Bin and permanently deleted.

If the DC Library system were a commercial venture, this astonishing outcome would have meant immeasurable damage to customer relations, a serious loss of business and potentially millions of dollars in compensation. It would also have been the perfect opportunity for competitors to move in and lure clients away; a library employee, having apologized for the inconvenience, suggested a website I could use as an alternative to the DC Library lists feature. Incidentally, her suggestion was a good one.

So, what went wrong? A library official could only say that it was an “unintended consequence” of the upgrade. Which is like saying “losing your data was not a requirement”. Can you imagine Amazon.com losing everyone's wish lists? “Oops!” doesn't quite cover it.

Although we don't know where the actual failure occurred, there must have been a series of fundamental errors that could have been avoided. Let's examine the most likely possibilities and see how they might have been prevented. To avoid spinning off into the realm of the absurd, we shall ignore highly unlikely options such as deliberate data loss or a complete disregard for testing and concentrate on more probable causes.

We shall consider the following theories:

- The requirements were inadequate, overlooking the need to migrate data;

- The requirements included data migration but they did not get conveyed properly to the contractor;

- The requirements for data migration were conveyed to the contractor but were lost in the development effort; and

- Data migration was implemented but did not function correctly.

Inadequate Requirements

Let's start by assuming the library IT department failed to include the requirement that existing user data be retained. Sadly, such needs are overlooked more often than we would like to admit. Analysts fail to see the big picture, spending all their time focused on the inadequacies of the old system and the desired operation of the new system, but not the process of getting from one to the other.

To some extent, this is understandable; migration will only occur once for the entire system, whereas all the new functions will be used thousands of times each day, so it seems natural to allocate effort accordingly. Further, users are likely to have spent all their time complaining about the existing system and, in doing so, concentrated the attention of the analysts on those problems alone. Analysts may have held focus groups with users researching their current use of the system and its limitations. They may have written use cases for every conceivable action users might perform. They may have even presented users with prototypes of the new system and spent countless hours analyzing the way these users interacted with the proposed new system. Analysts are to be lauded for spending so much time with users. However, users rarely address data migration and, as the library's experience shows, it could be argued that this function is the single most important requirement. Failure to retain user data is an invitation for all users to switch allegiance to another provider. Unless, like the library, you are the sole supplier.

The omission of data migration from the requirements will often be discovered as the project progresses. Those who have worked on any commercial software system will immediately think about migration issues whenever an upgrade is suggested. It's instinctive; it comes as second nature. Those who don't, quickly get burned. And here is a real possibility: that the analysts responsible for this upgrade were simply inexperienced to the point that they had never before been through a software system upgrade. For them, it would have been the equivalent of the very first US satellite launch attempt: it crashed and burned.

Of course, there is one other possible reason the requirements did not specify data migration: it was decided that it was unnecessary. However, the comment from library sources says that the data loss was 'unintended', and for this article, we shall consider the source to be veracious.

Before moving on, we must briefly consider the possibility that the library IT staff was not in a position to manage such a large project, so they contracted out not only the development but also the requirements gathering. It is critical to recognize that when contracting development, the requirements must be gathered and specified by an independent authority; otherwise there is little consequence for the contractor should the requirements be deficient.

What have we learned so far?

- Understanding needs is not simply about gathering requirements from stakeholders. Experience of similar projects is critical and an excellent way to inject such experience is to review and reuse requirements from similar past projects.

- Do not let the fox specify the requirements for the hen house. Ensure some degree of autonomy for requirements analysts.

But what if the requirements did adequately state the need for data retention during the upgrade but were inadequately conveyed to the contractor?

The Need for Efficient Requirements Communication

The DC Library project was more complex because management of the on-line catalog was being transferred from one contractor to another.

It could be the case that the contract with the original provider failed to allow the existing data to be used by any new contractor. Such a possibility highlights the need to have experienced technical staff review legal contracts with external suppliers.

Accepting that the data were available and that the need to retain them was recognized, could the requirement have been lost or become unrecognizable in the communication between library and contractor? The answer is, unfortunately, yes. Delivering requirements in an informal or unstructured way leaves the door open for dropped or mistranslated requirements and makes such occurrences harder to catch.

Many requirements are still written with tools such as Microsoft Word, Excel or OpenOffice products, or worse, left raw and vulnerable within the body of emails. Word processors provide some ability to highlight changes to requirements, but free-flowing text and a lack of unique identifiers make it difficult to avoid ambiguity and misunderstanding. While MS-Excel offers better requirements identification and structure, it lacks the change tracking and readability of word processors. Neither offers the ability to create traceability when requirements need to be decomposed or related to tests. Relaying requirements 'naked' within emails is so prone to failure that we shall not address it other than to say this would have been sufficient to result in project failure all by itself.
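
To make the contrast with free-flowing text concrete, here is a minimal Python sketch of requirements held as structured records with unique identifiers and decomposition links. The `Requirement` class, the `REQ-...` identifiers and the requirement wording are all hypothetical, invented for illustration; they are not taken from the DC Library project or from any particular RM product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Requirement:
    """One requirement with a unique ID and an optional parent (hypothetical schema)."""
    req_id: str
    text: str
    parent_id: Optional[str] = None  # set when this requirement decomposes another


def children_of(req_id: str, requirements: list[Requirement]) -> list[str]:
    """Return the IDs of requirements decomposed directly from req_id."""
    return [r.req_id for r in requirements if r.parent_id == req_id]


# Illustrative data only; the wording is an assumption, not the real specification.
REQUIREMENTS = [
    Requirement("REQ-001", "Existing user data shall be retained across the upgrade."),
    Requirement("REQ-001.1", "Saved favorites lists shall be migrated.", "REQ-001"),
    Requirement("REQ-001.2", "Reading history shall be migrated.", "REQ-001"),
]

print(children_of("REQ-001", REQUIREMENTS))  # ['REQ-001.1', 'REQ-001.2']
```

Because every record carries its own identifier, a dropped or reworded requirement shows up as a missing or changed ID rather than as a sentence quietly absent from a document.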

Where requirements management (RM) is concerned, too many organizations still fail to recognize the need for a tool designed for the job. When it comes to using a commercial RM product, there are many to choose from, each with its pros and cons, but the bottom line is, any tool is better than no tool at all.

The DC library, were they not using a tool, should have looked for a product that supports formal requirements communication with the contractor. The simplest option would provide common access to all requirements data for their IT staff as well as the contractor, with permissions to control what each type of user can do. Another option would be a tool with a means to deliver requirements to the contractor and then return any changes or additions that were made such as decomposition and links to tests. The latter option is harder to find in commercial requirements products and, being usually more complicated to use, is better suited to large, complex, high-cost projects. In the case of the DC library, a simple, shared data system would have worked well.

What lessons have we learned here?

- Include technical reviews of contracts for IT services.

- Use tools suited to the task. Word processors are not RM tools.

- When using a contractor, make sure your RM system can support remote or third-party access.

If the requirements had been clearly and unambiguously conveyed to the contractor, then the failure lies somewhere in the implementation, which is where testing comes in.

Inadequate Testing

If the original requirements did not include the need for data migration then, of course, there would be no acceptance tests for that functionality (unless the test engineers realized the need independently, in which case the requirements would have been amended accordingly). But if the requirement had been included, then how could the testing fail to pick up the fact that it had not been successfully implemented?

If we assume that the test engineers were capable of performing the tests correctly, the most likely explanation for test failure is that the acceptance tests themselves were inadequate. This can happen when test engineers misinterpret the requirements, or worse, they do not build the acceptance tests from the requirements but from another source such as the design.

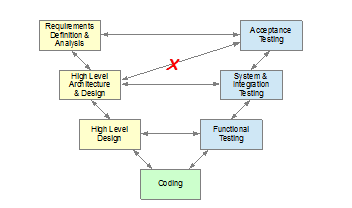

Look at the most common waterfall model and you will see that acceptance tests relate directly to requirements definition:

Illustration 1: Basic waterfall 'V' model

To test against anything other than the requirements would not be user acceptance testing at all. It’s all too easy a mistake to make, especially if the requirements are not easily accessible within the test environment.

The remedy is usually to provide easy access to the requirements and then to ensure that the requirements authors review and approve the tests, reciprocating the visibility between test engineers and requirements owners, and helping keep everyone on the same page. This idea alone is the reason why close tool integration between requirements management and test management is critical to project success. Note: it is important when transitioning to a less traditional development model, such as an Agile model, to not lose sight of the direct relationship between requirements and acceptance tests. The requirements may take on different forms, but there should still be tests against them, both intrinsic to the development and extrinsic, independent acceptance tests.

When tests are not created from the requirements, the test engineers are essentially deciding for themselves what the functionality of the deliverable should be; they are creating their own requirements. Put like that, it seems highly improbable that anyone would do this. However, there are too many test engineers out there properly creating tests directly from requirements but then not documenting the relationship between the two. How can we be sure that the tests come from the requirements unless such a relationship can be shown to exist? Traceability between requirements and tests is as essential as having the tests themselves, for without proof of full coverage the tests are seriously vulnerable.
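
A recorded relationship between requirements and tests can be checked mechanically. The following is a minimal Python sketch, assuming each acceptance test lists the requirement IDs it verifies; the function names and the `REQ-...` and `AT-...` identifiers are hypothetical and exist only for this illustration.

```python
def uncovered_requirements(requirement_ids, trace_links):
    """Return requirement IDs that no acceptance test traces to, sorted.

    trace_links maps a test ID to the set of requirement IDs it verifies.
    """
    covered = set()
    for req_ids in trace_links.values():
        covered.update(req_ids)
    return sorted(set(requirement_ids) - covered)


def orphan_tests(requirement_ids, trace_links):
    """Return test IDs that trace to no known requirement, sorted."""
    known = set(requirement_ids)
    return sorted(t for t, reqs in trace_links.items() if not (set(reqs) & known))


# Hypothetical identifiers for illustration only.
REQUIREMENT_IDS = ["REQ-001", "REQ-002", "REQ-003"]
TRACE_LINKS = {
    "AT-01": {"REQ-002"},
    "AT-02": {"REQ-002", "REQ-003"},
    "AT-03": set(),  # a test written without reference to any requirement
}

print(uncovered_requirements(REQUIREMENT_IDS, TRACE_LINKS))  # ['REQ-001']
print(orphan_tests(REQUIREMENT_IDS, TRACE_LINKS))            # ['AT-03']
```

In this invented data set, `REQ-001` has no acceptance test at all, which is exactly the kind of gap that would let an unimplemented data migration requirement pass unnoticed, while `AT-03` is a test that reflects the engineer's own idea of the functionality.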

Incidentally, if the test function is integrated too tightly with the project as a whole in Agile development, requirements can be misinterpreted in the same way for both design and acceptance tests, increasing the possibility that mistakes will not be discovered.

Finally, experience, again, tells us that testing against a 'sample' data set is inadequate. Acceptance testing must be performed against a copy of the real data if we are to have full confidence in the software's ability to deliver the goods.
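
An acceptance check for data migration can be as simple as comparing each user's saved items before and after the upgrade. The Python sketch below assumes the lists can be exported as a mapping from user ID to titles; the function name is hypothetical, and the small in-memory dictionaries merely stand in for what, in a real acceptance test, would be a copy of the production data and the output of the migrated system.

```python
def missing_after_migration(before, after):
    """Compare per-user saved lists before and after a migration.

    before/after map a user ID to a collection of saved titles.
    Returns a dict of user ID -> sorted list of titles lost in migration.
    """
    lost = {}
    for user_id, titles in before.items():
        missing = set(titles) - set(after.get(user_id, ()))
        if missing:
            lost[user_id] = sorted(missing)
    return lost


# Stand-in data for illustration; a real test would load a copy of the live data.
BEFORE = {"user-1": ["Dune", "Emma"], "user-2": ["Ulysses"]}
AFTER = {"user-1": ["Dune", "Emma"], "user-2": []}

print(missing_after_migration(BEFORE, AFTER))  # {'user-2': ['Ulysses']}
```

An empty result is the pass condition. Run against real data, a check like this also exercises the awkward cases (very long lists, unusual characters, withdrawn titles) that a hand-built sample rarely contains.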

What are the lessons to be learned from test failure?

- Build tests from requirements.

- Have requirements analysts review and approve acceptance tests.

- Formally record the relationships between requirements and tests (using a tool designed for the job).

- Test against real data.

It is worth repeating that the relationship between requirements and tests is perhaps the single most important relationship in software and systems development, and to not treat it as such is one of the easiest routes to failure.

Final Thoughts

As we said at the start, many things can go wrong in any software development project, and unfortunately it only takes one error to cascade through the process and lead us to potentially catastrophic failure. In the case of the DC Library on-line catalog system, even data loss is not a matter of life-and-death and the failure was barely reported. Nevertheless, to the people who lose their data, it is seriously disappointing. None of us want our industry to be known by its failures and so it is incumbent on each of us to get it right; to do the right thing, follow the right procedures, use the right tools and speak up when we see opportunities to reduce the possibility of failure.

Could the lessons learned here have improved the Healthcare.gov project? The answer is almost certainly, yes. More experience in defining the requirements would have helped; spending time considering requirements the users wouldn't think of; providing sufficient autonomy for requirements and test engineers; ensuring tests are based on actual needs rather than test engineers' creativity; testing against real data; and finally, using the proper tools for the job, especially for requirements management regardless of the development methodology.

MS-Word and Excel are registered trademarks of Microsoft Corporation.

All other trademarks are the property of their respective owners.