By Art Trevethan

Incorporating both Manual and Automated Methods in an Effective Test Plan

Boil the ocean, eat the elephant, climb the mountain – all things we must do bit by bit, bite by bite, step by step. Developing a comprehensive application test plan can have the same overwhelming aspect – the task appears to be just too huge.

The average test plan for a commercial grade application will have between 2,000 and 10,000 test cases. Split across a test team of five, that means each tester must manually execute and document results for between 400 and 2,000 test cases. And the scheduled release date of your product is fast approaching. No worries; clone your team and work around the clock. Or perhaps there’s a better way.

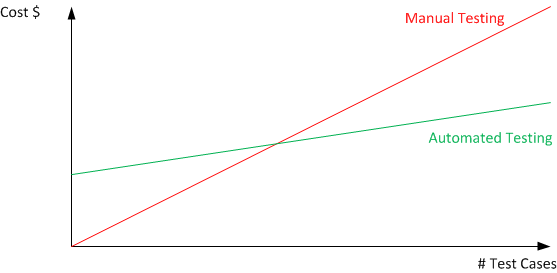

As the graph above illustrates, there is an upfront cost to automated testing (as opposed to purely manual testing), but as the number of test cases and builds increases, the cost per test decreases.
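To make the break-even idea concrete, here is a minimal sketch using a simple linear cost model. The model and every number in it are assumptions chosen for illustration; they are not read off the graph.

```python
import math

def break_even_runs(upfront_cost, manual_cost_per_run, automated_cost_per_run):
    """Return the number of test runs at which automation stops costing more
    than manual testing, or None if automation never catches up.

    Assumed linear model (hypothetical, for illustration only):
      manual total    = manual_cost_per_run * runs
      automated total = upfront_cost + automated_cost_per_run * runs
    """
    saving_per_run = manual_cost_per_run - automated_cost_per_run
    if saving_per_run <= 0:
        return None  # automation is not cheaper per run, so it never pays back
    return math.ceil(upfront_cost / saving_per_run)

# Hypothetical figures: $2,000 to build the scripts, $50 per manual run,
# $10 per automated run (machine time, result review, script upkeep).
print(break_even_runs(2000, 50, 10))  # 50
```

The more builds and test cases you run, the sooner you pass that point, which is why frequently repeated tests are the natural first candidates.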

Coexistence – Manual and Automated Testing in the Same Test Plan

This might be a good time to add automated testing to your test plan. The first step in this direction is realizing that no test plan can be executed completely with automated methods. The challenge is determining which components of the test plan belong in the manual test bucket and which components are better served in the automated bucket.

This is about setting realistic expectations. I will say it: automation can’t do it all. Heresy from an automation expert! But I’ve said it and I believe it. You should not automate everything. I will also say that humans are smarter than machines (forgive me, oh robotic overlords).

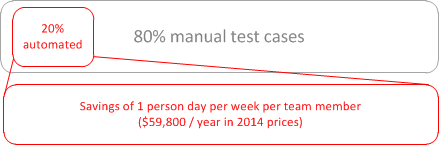

Let’s begin by setting the expectations at a reasonable level. Let’s say we’ll automate 20% of test cases. Too small, management cries! Let’s address those concerns by describing what automating 20% means.

How to Choose Elements of the Test Plan to Automate

So we have decided to automate 20% of our test cases. Great! That leaves one question: which 20%?

How about the 20% of test cases used most often, that have the most impact on customer satisfaction, and that chew up around 70% of the test team’s time? The 20% of test cases that will reduce overall test time by the greatest factor, freeing the team for other tasks. That might be a good place to start.

These are the test cases that you dedicate many hours to performing, every day, every release, every build. These are the test cases that you dread. It is like slamming your head into a brick wall – the outcome never seems to change. It is monotonous, it is boring, but, yes, it is very necessary. These test cases are critical because most of your clients use these paths to successfully complete tasks. Therefore these are the tasks that pay the company and the test team to exist. These test cases are tedious but important.
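One way to turn these criteria into a shortlist is to score each test case and take the top 20%. The sketch below is only an illustration: the `ManualCase` fields, the scoring formula, and the sample data are all hypothetical, and your team may weigh the factors differently.

```python
from dataclasses import dataclass

@dataclass
class ManualCase:
    name: str
    runs_per_release: int    # how often the case is executed by hand
    minutes_per_run: float   # manual effort for one execution
    customer_impact: int     # 1 (minor) to 5 (critical), a team judgment call

def score(case):
    # Assumed scoring: manual minutes spent per release, weighted by impact.
    return case.runs_per_release * case.minutes_per_run * case.customer_impact

def pick_automation_candidates(cases, fraction=0.20):
    """Return the highest-scoring `fraction` of cases (at least one)."""
    ranked = sorted(cases, key=score, reverse=True)
    count = max(1, round(len(cases) * fraction))
    return ranked[:count]

# Hypothetical sample data.
cases = [
    ManualCase("login", 30, 5, 5),
    ManualCase("checkout", 30, 12, 5),
    ManualCase("export report", 4, 20, 2),
    ManualCase("change avatar", 2, 3, 1),
    ManualCase("admin audit log", 2, 15, 3),
]

for case in pick_automation_candidates(cases):
    print(case.name)  # checkout
```

With five sample cases, 20% is a single case, and the frequently run, high-impact checkout path comes out on top.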

What 20% Test Automation Can Mean to Test Success

I would like to refine the “automate 20% of test cases” concept. And redefine it.

Let’s consider what that 20% represents in relation to two issues: the cost of test (primarily personnel) and ability to meet predefined test schedules.

What can your test team accomplish in a 40 hour workweek (who has those anymore)? With even greater granularity, what can your test team accomplish in a workday of 8 hours? And what is the impact of automating a set of test cases so that the full set completes in 4 workdays instead of 5 (since 20% of your test cases are now automated)?

If the average tester’s salary is $60,000 per year, the cost of an 8-hour day is about $230 (assuming roughly 260 working days per year). Saving one day per week through automation adds up to 52 eight-hour days per tester over a year, the equivalent of $11,960 in salary. A team of 5 increasing productivity at this rate saves $59,800 annually. By automating at this minimal level you’re achieving the same results as you would by adding 1 additional tester to your staff.
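The arithmetic above can be reproduced with a short sketch so you can plug in your own figures. The 260 working days per year is an assumption (52 weeks of 5 days), and the function names are made up for this example.

```python
def daily_cost(annual_salary, workdays_per_year=260):
    """Cost of one working day; 260 workdays per year is an assumption."""
    return annual_salary / workdays_per_year

def annual_savings(day_cost, days_saved_per_week, weeks_per_year=52, team_size=1):
    """Salary-equivalent value of the tester days freed up in a year."""
    return day_cost * days_saved_per_week * weeks_per_year * team_size

print(round(daily_cost(60_000), 2))         # 230.77, rounded to $230 in the text
print(annual_savings(230, 1))               # 11960 for one tester
print(annual_savings(230, 1, team_size=5))  # 59800 for a team of five
```

This deliberately ignores tool licenses and script maintenance, so treat the result as an upper bound on the saving rather than a forecast.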

This doesn’t mean you need one fewer tester on staff. It means that you now have the equivalent resources of one extra team member to test deeper – and more often – those test cases that cannot be tested with automation. For example, an application process may require direct manual intervention, such as confirming that a printed receipt is accurate or that a physical process (such as filling a car’s gas tank) has completed. Some software applications are also resistant to automation, such as those with fully dynamic, uncontrolled feed displays.

You now have additional resources to find bugs or defects that would normally be found by your customers after deployment, when the cost to correct application deficiencies is the highest and the damage to customer satisfaction and your brand image is greatest.

You can also keep pace with the iterative builds produced by whichever continuous improvement software development method you use, such as Agile, Kanban or Scrum. No matter how well planned, application development and test timelines always change, and the resulting schedule compression usually hits testing hardest. The ability to execute the most critical, repetitive, and time-consuming test cases quickly will keep your test team ahead, reaching test goals and ultimately time-to-market deadlines with ease.

Compound Benefits

Let’s circle back to the 20% of test cases we identified at the beginning. Remember these repetitive, mind-numbing, yet critical test cases? You’ll also remember that while these test cases represent 20% of the total number of test cases, they also represent a much larger block of test time to execute and document – 70% of test time in this example (your mileage may vary). What this means to you is that the actual impact of adding automated testing to your overall plan will be greater than the example provided.
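A rough sketch of the difference, assuming a 5-day manual test cycle and that automated cases no longer need any hands-on time (both are simplifications made for this illustration):

```python
def days_freed_per_cycle(cycle_days, time_share_percent):
    """Manual days freed when cases taking `time_share_percent` of the
    cycle's manual effort are no longer run by hand."""
    return cycle_days * time_share_percent / 100

by_count = days_freed_per_cycle(5, 20)  # earlier example: 20% of the time
by_time = days_freed_per_cycle(5, 70)   # this section: 70% of the time
print(by_count, by_time)  # 1.0 3.5
```

In practice some of that freed time goes back into reviewing automated results and maintaining scripts, so the real figure will land somewhere between the two.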

So What Tools Should I Use?

Obviously the first answer is to choose a tool that can automate the specific technology you’re testing, otherwise your automation is doomed to fail. Secondly you should choose a tool that has some of the following characteristics:

- Offers a codeless / scriptless / no-code option for your functional experts to write basic tests without needing an experienced automation engineer for all tasks.

- A good IDE that makes it easy for your automation engineers to write more advanced tests, make changes, find issues and deploy the tests on all the environments you need to test.

- A tool that is well supported by the manufacturer and is keeping up to date with new web browsers, operating systems and technologies that you will need to test in the future. Just because you used to write your application on Windows 3.1 using Delphi doesn’t mean it will be that way forever!

- An object abstraction layer, so that your test analysts can write the tests in the way most natural for them and your automation engineers can create objects that point to physical items in the application in a way that is robust and does not change every time you re-sort a grid or add data to the system.

- Support for data-driven testing, since one of the big benefits of automation is the ability to run the same test thousands of times with different sets of data.

- Advanced machine-learning features such as self-healing tests that make your tests more resilient and reliable.
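To illustrate the data-driven testing item in the list above, here is a tool-agnostic sketch in plain Python. It does not use any particular tool’s API; the `shipping_cost` function under test and the data rows are hypothetical stand-ins for your application and your test data.

```python
import csv
import io

def shipping_cost(weight_kg, express):
    """Hypothetical function under test."""
    base = 5.0 + 1.5 * weight_kg
    return base * 2 if express else base

# Test data kept separate from test logic; in practice this would
# typically live in a spreadsheet, CSV file, or database.
TEST_DATA = """weight_kg,express,expected
1,false,6.5
2,false,8.0
2,true,16.0
10,true,40.0
"""

def run_data_driven(data_text):
    """Run the same check once per data row and collect any failures."""
    failures = []
    for row in csv.DictReader(io.StringIO(data_text)):
        actual = shipping_cost(float(row["weight_kg"]), row["express"] == "true")
        if abs(actual - float(row["expected"])) > 1e-9:
            failures.append((row, actual))
    return failures

print(run_data_driven(TEST_DATA))  # []
```

Adding a new scenario means adding a row of data, not writing another test, which is what makes thousands of variations practical.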

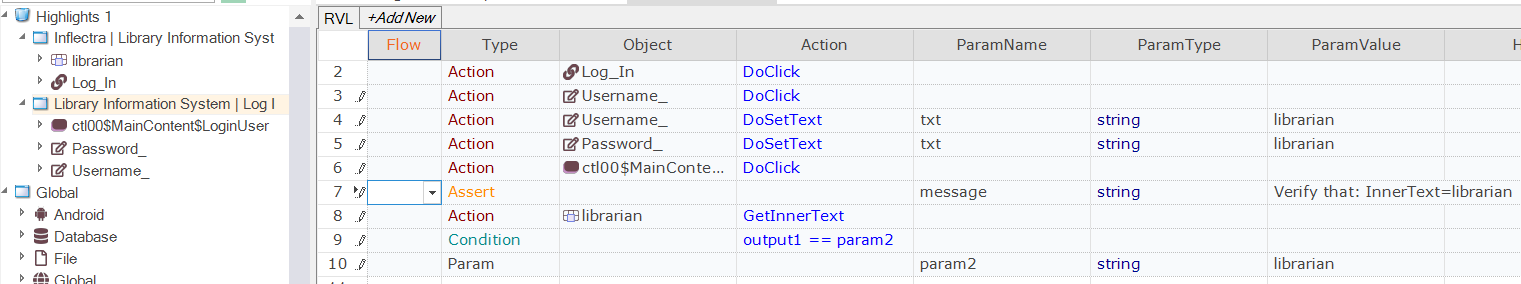

Rapise from Inflectra is a great tool that meets these criteria, is very easy to use, covers a wide range of technologies (web, desktop, mobile and APIs) and is relatively affordable.

However, whichever tool(s) you choose to use, make sure that your testers can try them out for at least 30 days on a real project to confirm they will work for your team and the applications you are testing.

Change Is Good!

So now you have justifications to address challenges to including automation in your test plan, such as:

- The team knows the application and can run through it in a few days to see if there are any defects. But is the test team only testing at the top level or in-depth? Is the test team keeping pace with daily builds and meeting release deadlines?

- The automation tools can’t be as smart as my team. That’s true. Use your test team’s skills to dig deeper into areas you might not regularly test. Providing a stable, high-performance application is the best way to maintain customer satisfaction and market share.

- The automation tools cannot deal with the complexity of my application. But automation can deal with the repetitive, mundane aspects of the application, freeing your test team to concentrate on the complex issues in which they are experts.

So what about Manual Testing?

For the remaining 70-80% of your tests that will need to be performed manually, how can you reduce the time it takes to write and execute these tests? Well, there are some options that can make this easier.

Firstly, instead of writing test cases step by step by hand in MS-Excel or MS-Word, you can use technology and the power of automation to help you. The same Rapise tool (from Inflectra) that can do test automation also has a neat feature for speeding up the creation of manual test cases.

Rapise will sit in the background as you simply use the application to be tested, and will monitor your clicks, keystrokes and other actions. It will then use that record to automatically build a test case with detailed steps and screenshots that your testers can play back manually. Of course a tool will never write a perfect test case, so you will need to make some edits and adjustments, but it can save as much as 95% of the time taken to write a test case. In one example, it took about 1 minute to create a test case instead of the normal 15-20 minutes to do it by hand.

Similarly, playback of manual tests can often be a cumbersome and tedious process: following steps, capturing screenshots, and annotating them so that they will make sense to a developer the following day. Rapise also includes a manual playback option with built-in screen capture and annotation that makes this easy as well.

It’s Never Too Soon to Start

Fill a pail with seawater, take a bite of the elephant, take a step up the mountain. You’ve set an achievable goal, a goal with significant impact on your application, your time-to-market, and your company’s bottom line. And just think: you can add additional automated test cases for your application’s next release. What happens if you automate 30% of test cases? It’s ALL good!