Automated Software Testing: Benefits, Types, & More

What is Automation Testing?

Automated software testing is the ability to evaluate and analyze your applications directly without human intervention. Generally, test automation involves the testing tool sending data to the application being tested, and then comparing the results with those that were expected when the test was created.
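
To make that loop concrete, here is a minimal sketch in Python. The `apply_discount` function and its test data are hypothetical stand-ins for your application; the point is only the pattern of sending inputs and comparing the actual result with the one recorded when the test was written.

```python
def apply_discount(price, percent):
    """Hypothetical function under test: reduce a price by a percentage."""
    return round(price * (1 - percent / 100), 2)


# Each case pairs the data sent to the application with the result
# that was expected when the test was created.
TEST_CASES = [
    ((100.0, 10), 90.0),
    ((59.99, 0), 59.99),
    ((20.0, 50), 10.0),
]


def run_checks():
    """Return (inputs, expected, actual) for every case that did not match."""
    failures = []
    for inputs, expected in TEST_CASES:
        actual = apply_discount(*inputs)
        if actual != expected:
            failures.append((inputs, expected, actual))
    return failures


print(run_checks())  # an empty list means every comparison matched
```

A real tool adds recording, reporting, and UI interaction on top, but the compare-against-expected step stays the same.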

Automating your testing lets you run more tests, faster and more efficiently. The average test plan for a commercial-grade application will have between 2,000 and 10,000 test cases. Without automation, each member of a five-person test team must manually execute and document results for between 400 and 2,000 test cases. To further complicate things, the scheduled release date of your product is fast approaching.

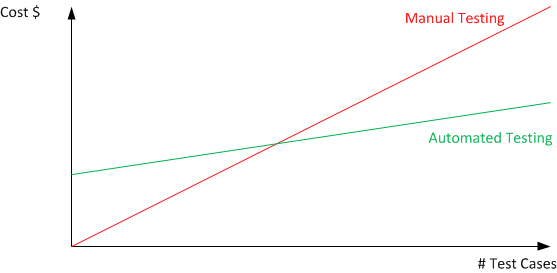

As the graph above illustrates, there is an upfront cost to automated testing (as opposed to purely manual testing), but as the number of test cases and builds increases, the cost per test decreases.
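
The trade-off can be sketched with a simple cost model. The workload split uses the figures from the text (2,000 to 10,000 test cases, a team of five); the setup and per-run costs are hypothetical numbers in arbitrary units, chosen only to show the shape of the curve.

```python
import math

# From the text: 2,000 to 10,000 test cases shared by a team of five.
TEAM_SIZE = 5
print(2_000 // TEAM_SIZE, 10_000 // TEAM_SIZE)  # 400 2000


def manual_cost(runs, cost_per_manual_run):
    """Total cost of running one test manually `runs` times."""
    return runs * cost_per_manual_run


def automated_cost(runs, setup_cost, cost_per_automated_run):
    """Total cost of automating one test and running it `runs` times."""
    return setup_cost + runs * cost_per_automated_run


def break_even_runs(setup_cost, cost_per_manual_run, cost_per_automated_run):
    """Smallest run count at which automation costs no more than manual."""
    saving_per_run = cost_per_manual_run - cost_per_automated_run
    if saving_per_run <= 0:
        return None  # automation never pays off under these assumptions
    return math.ceil(setup_cost / saving_per_run)


# Hypothetical costs: 40 units to script the test, 5 per manual run,
# 1 per automated run.
print(break_even_runs(40, 5, 1))
for runs in (1, 10, 100):
    print(runs, automated_cost(runs, 40, 1) / runs)  # cost per run falls
```

Under these assumed numbers the upfront cost is recovered after ten runs, and every build after that widens the gap.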

Automation Testing vs. Manual Testing

The primary difference between automation testing and manual testing is that with manual testing, a human is responsible for testing the functionality of the software in the way a user would, while with automation testing, a tool runs scripted tests. Automating time-consuming tests, such as regression tests, frees testers to spend more time on higher-value tasks, such as exploratory testing. Automated testing still requires time to maintain test scripts, but it increases test coverage and scalability compared to manual testing.

Benefits of Automation Testing

Automation has many advantages and benefits beyond just cost that might be appealing to your organization. Some of the major ones include:

- Improved Scalability - Automating your tests transforms the scale at which your test team operates because computers can run tests 24 hours a day. This allows you to run a lot more tests with the same resources.

- Streamlined Releases - The traditional approach to software development sees all testing done after the product is developed. But if you use test automation, you can constantly re-test your application during development. This helps your release process become more efficient and allows you to catch bugs and issues earlier in development.

- Delivery Speed - Automated software testing makes easy work of repetitive, time-consuming, and manual testing. It further reduces the overall testing effort by increasing the speed and accuracy of each test case.

Misconceptions About Automated Testing

Despite the proven benefits of automated testing, there are common misconceptions that sometimes hinder its adoption. Addressing these myths is crucial for fostering a clear understanding of automated testing and its role in software development.

- Automated Testing Eliminates the Need for Manual Testing

- Reality: Automated testing and manual testing are complementary, not mutually exclusive. While automated testing excels in repetitive and regression testing, manual testing remains essential for exploratory testing, usability assessments, and scenarios that require a human’s intuition and experience.

- Automated Testing is Exclusively for Large Projects

- Reality: Automated testing scales well for large projects, but its benefits extend to projects of all sizes. Even smaller projects can gain efficiency, faster feedback, and improved quality by implementing automated testing for critical functionalities.

- Automated Testing is Too Expensive and Time-Consuming to Implement

- Reality: While there is an initial investment in setting up automated testing, the long-term benefits outweigh the costs. Automated tests save time in the long run by quickly executing repetitive tests, identifying issues early in the development cycle, and reducing the overall cost of bug fixes.

- Automated Testing is Only for Developers

- Reality: Automated testing tools are designed for collaboration among developers, testers, and other stakeholders. Testers with little or no coding experience can create and execute automated tests using user-friendly tools, promoting a collaborative approach to testing.

- Automated Tests Guarantee Perfect Software

- Reality: Automated tests are only as good as their design. While they catch many defects, they might miss certain types of issues. Regular maintenance and updates to automated test scripts are necessary to keep up with evolving software and requirements.

- Automated Testing is All About Regression Testing

- Reality: While regression testing is a primary use case for automated testing, it can be applied to various testing types, including functional, performance, and load testing. Automated testing tools offer versatility, enabling teams to address different testing needs efficiently.

Automated Testing Types

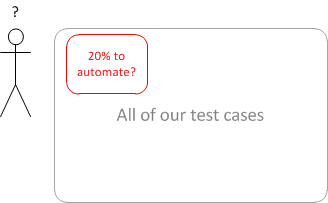

This might be a good time to add automated testing to your test plan. The first step in this direction is realizing that no test plan can be executed completely with automated methods. The challenge is determining which components of the test plan belong in the manual test bucket and which components are better served in the automated bucket.

This is about setting realistic expectations; automation cannot do it all. You should not automate everything. Humans are smarter than machines (at least currently) and we can see patterns and detect failures intuitively in ways that computers are not able to.

Let’s begin by setting the expectations at a reasonable level. Let’s say we’ll automate 20% of test cases. Too small, management cries! Let’s address those concerns by describing what automating 20% means.

How about the 20% of test cases used most often, that have the most impact on customer satisfaction, and that chew up around 70% of the test team’s time? Think about the 20% of test cases that will reduce overall test time by the greatest factor, freeing the team up for other tasks.

These are the test cases that you dedicate many hours to performing, every day, every release, and every build. These are the test cases that you dread. It is like slamming your head into a brick wall – the outcome never seems to change. They’re monotonous and boring, but yes, very necessary. These test cases are critical because most clients use these paths to complete tasks successfully. Therefore, these are the tasks that pay the company and the test team to exist. These test cases are tedious but important, which makes them great candidates for automation.
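
One way to find that 20% is to rank test cases by the time they consume per release. The sketch below assumes you track minutes per run and runs per release for each test case; the field names and the sample data are hypothetical.

```python
def total_minutes(test_case):
    """Time one test case consumes per release."""
    return test_case["minutes_per_run"] * test_case["runs_per_release"]


def pick_automation_candidates(test_cases, fraction=0.2):
    """Return the most time-consuming `fraction` of the test cases."""
    ranked = sorted(test_cases, key=total_minutes, reverse=True)
    count = max(1, round(len(ranked) * fraction))
    return ranked[:count]


def time_share(selected, all_cases):
    """Fraction of total testing time spent on the selected cases."""
    return sum(map(total_minutes, selected)) / sum(map(total_minutes, all_cases))


# Hypothetical test plan: two heavy, frequently repeated paths and
# eight lighter checks.
TEST_CASES = [
    {"name": "checkout", "minutes_per_run": 45, "runs_per_release": 20},
    {"name": "login", "minutes_per_run": 30, "runs_per_release": 20},
] + [
    {"name": f"report_{i}", "minutes_per_run": 8, "runs_per_release": 10}
    for i in range(8)
]

candidates = pick_automation_candidates(TEST_CASES)
print([tc["name"] for tc in candidates])
print(f"{time_share(candidates, TEST_CASES):.0%} of testing time")
```

Time spent is only one signal. In practice you would also weigh customer impact and how stable the feature is before committing to automating a test case.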

Selecting the appropriate testing approach is also crucial for a comprehensive and effective quality assurance strategy. Here's a guide to help determine which types of tests are best suited for automation:

- Unit Testing

- Why Automate: Unit tests, which validate individual units or components of code, are foundational for ensuring the correctness of small code segments. Automated unit tests offer quick feedback during development.

- Repetitive Regression Testing

- Why Automate: Automated tests excel at repetitively verifying that new code changes haven't adversely affected existing functionalities, ensuring stability during frequent code updates.

- Data-Driven Testing

- Why Automate: When the same test logic needs to be applied with multiple sets of data, automated testing can efficiently iterate through various scenarios, reducing time and effort.

- Large-Scale Regression Suites

- Why Automate: For projects with extensive regression suites, automated testing provides the speed needed to execute a large number of tests in a timely manner, ensuring thorough coverage.

- API Testing

- Why Automate: Automated tests are effective for validating API endpoints, data formats, and response codes, especially in scenarios involving frequent interactions with APIs.

- Cross-Browser Testing

- Why Automate: Automated testing ensures consistent verification of web applications across various browsers and platforms, helping identify and address compatibility issues.

- Integration Testing

- Why Automate: Integration tests verify the interactions between different components or systems. Automated integration tests ensure the smooth collaboration of these components, detecting integration issues early in the development process.

- Performance and Load Testing

- Why Automate: Simulating thousands of concurrent users or stress-testing a system's performance is best achieved through automation, which can handle the complexity and scale required.
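
As an illustration of the data-driven item in the list above, the following sketch applies one piece of test logic to several data sets using Python's standard `unittest` module. The `is_valid_username` function and its rules are hypothetical.

```python
import unittest


def is_valid_username(name):
    """Hypothetical function under test: 3 to 12 letters or digits."""
    return 3 <= len(name) <= 12 and name.isalnum()


class UsernameDataDrivenTest(unittest.TestCase):
    # One row per scenario: (input, expected result).
    DATA = [
        ("alice", True),
        ("bob42", True),
        ("ab", False),                 # too short
        ("name with spaces", False),   # invalid characters, too long
        ("x" * 13, False),             # too long
    ]

    def test_usernames(self):
        for name, expected in self.DATA:
            # subTest reports each failing row separately instead of
            # stopping at the first mismatch.
            with self.subTest(name=name):
                self.assertEqual(is_valid_username(name), expected)


def run_suite():
    """Run the data-driven test and return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(UsernameDataDrivenTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Adding a scenario means adding a row to `DATA`, not writing a new test, which is where the time saving comes from.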

Which Types Should be Tested Manually?

On the other hand, some testing types are more effectively handled by manual, human testing:

- Exploratory Testing

- Why Manual: Exploratory testing, characterized by dynamic and unscripted exploration, relies on the intuition and creativity of human testers to uncover unexpected issues.

- Usability/UI Testing

- Why Manual: Evaluating the user-friendliness and overall user experience often requires a human tester to assess subjective aspects, such as interface intuitiveness and design aesthetics.

- Ad-Hoc Testing

- Why Manual: Unstructured testing, performed on an impromptu basis, is best suited for manual testing when specific scenarios or edge cases need exploration.

- User Acceptance Testing (UAT)

- Why Manual: User Acceptance Testing (UAT) involves end-users validating the software against their real-world requirements. Human testers can provide valuable feedback based on their domain knowledge and experience.

- Early-Stage Testing

- Why Manual: In the initial stages of development, when requirements are evolving rapidly, manual testing allows for quick adaptability and validation without the need for extensive scripted tests.

Automated Testing Process

Selecting Automated Testing Tools

- Objective: Choose appropriate tools that align with project requirements and support the application's technology stack.

- Activities:

- Evaluate various automated testing tools based on features, compatibility, and ease of use.

- Select tools that offer support for the application's programming language, frameworks, and integration capabilities.

Define Scope of Automation

- Objective: Clearly define the scope, objectives, and test strategy for automation. Identify test cases that provide maximum coverage and benefit from automation.

- Activities:

- Assess the application and determine suitable test scenarios for automation.

- Define the testing goals, timelines, and success criteria.

- Prioritize test cases based on critical functionalities and regression requirements.

Planning and Development

- Objective: Establish a stable and consistent testing environment to ensure the repeatability of automated tests.

- Activities:

- Configure the necessary hardware, software, and network settings for the test environment.

- Translate test cases into automated scripts using the chosen testing tool.

- Implement scripting logic for various test scenarios, including positive and negative test cases.
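
A scripted test case with both positive and negative scenarios might look like the sketch below. It uses Python's `unittest` module rather than any particular commercial tool, and the `withdraw` function is a hypothetical piece of application logic.

```python
import unittest


def withdraw(balance, amount):
    """Hypothetical function under test."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount > balance:
        raise ValueError("insufficient funds")
    return balance - amount


class WithdrawTest(unittest.TestCase):
    def test_valid_withdrawal_reduces_balance(self):
        # Positive case: valid input produces the expected result.
        self.assertEqual(withdraw(100, 30), 70)

    def test_overdraft_is_rejected(self):
        # Negative case: invalid input must be refused, not processed.
        with self.assertRaises(ValueError):
            withdraw(100, 500)

    def test_non_positive_amount_is_rejected(self):
        # Negative case: boundary value of zero.
        with self.assertRaises(ValueError):
            withdraw(100, 0)
```

Saved to a file, tests like these can be run with `python -m unittest`.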

Test Execution

- Objective: Run automated test scripts to validate the application's functionality, performance, and compatibility.

- Activities:

- Execute automated tests in a controlled test environment.

- Monitor test execution for errors, failures, and exceptions.

- Collect relevant data and logs for analysis.

Results Analysis

- Objective: Analyze test results to identify defects, validate functionality, and assess overall test coverage.

- Activities:

- Examine test reports generated by the automated testing tool.

- Validate whether the application meets specified requirements and performance expectations.

- Log defects with detailed information, including steps to reproduce and expected vs. actual results.

- Prioritize and assign defects to the development team for resolution.
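
A defect record that carries the details listed above could be modeled as in this sketch. The field names and the severity scale are assumptions for illustration, not the schema of any specific defect tracker.

```python
from dataclasses import dataclass


@dataclass
class Defect:
    title: str
    steps_to_reproduce: list
    expected: str
    actual: str
    severity: int  # hypothetical scale: 1 = critical ... 4 = low
    assignee: str = "unassigned"


def triage(defects):
    """Order defects so the most severe are handled first."""
    return sorted(defects, key=lambda d: d.severity)


defects = [
    Defect("Typo on help page", ["Open Help"], "Correct spelling",
           "'Recieve' shown", severity=4),
    Defect("Checkout total wrong", ["Add two items", "Open cart"],
           "Total is 65.50", "Total is 25.00", severity=1),
]

for defect in triage(defects):
    print(defect.severity, defect.title)
```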

Maintenance and Updates

- Objective: Keep automated test scripts up-to-date to align with changes in the application and its requirements.

- Activities:

- Regularly review and update test scripts to accommodate changes in the application.

- Adjust scripts to reflect modifications in the user interface, functionality, or business rules.

- Ensure compatibility with new software versions and technologies.

Why Should I Use Rapise for My Software Testing?

Not only do you need a reliable test automation tool, you need a test automation tool that can be integrated fully into your development process and adapted to your changing needs:

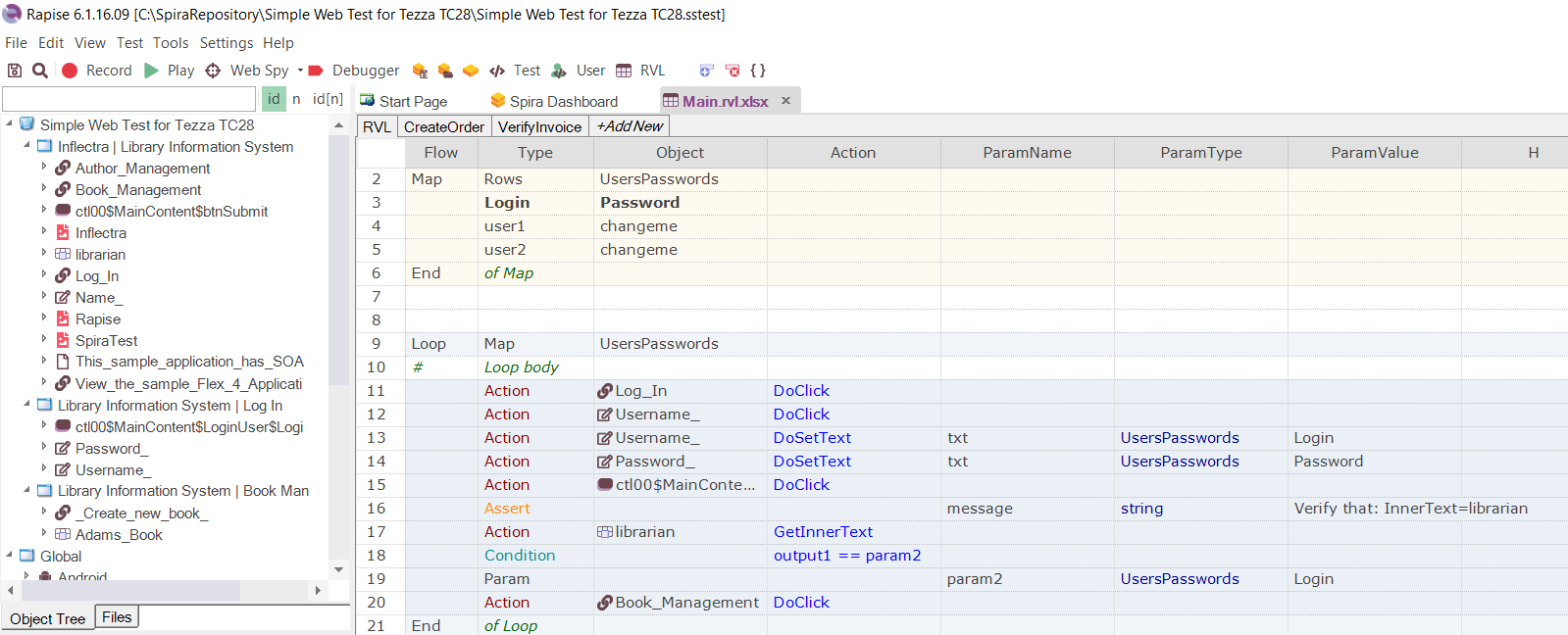

Rapise is the most powerful and flexible automated testing tool on the market. With support for testing desktop, web, and mobile applications, it can be used by testers, developers, and business users to rapidly and easily create automated acceptance tests that integrate with your requirements and other project artifacts in SpiraTeam.

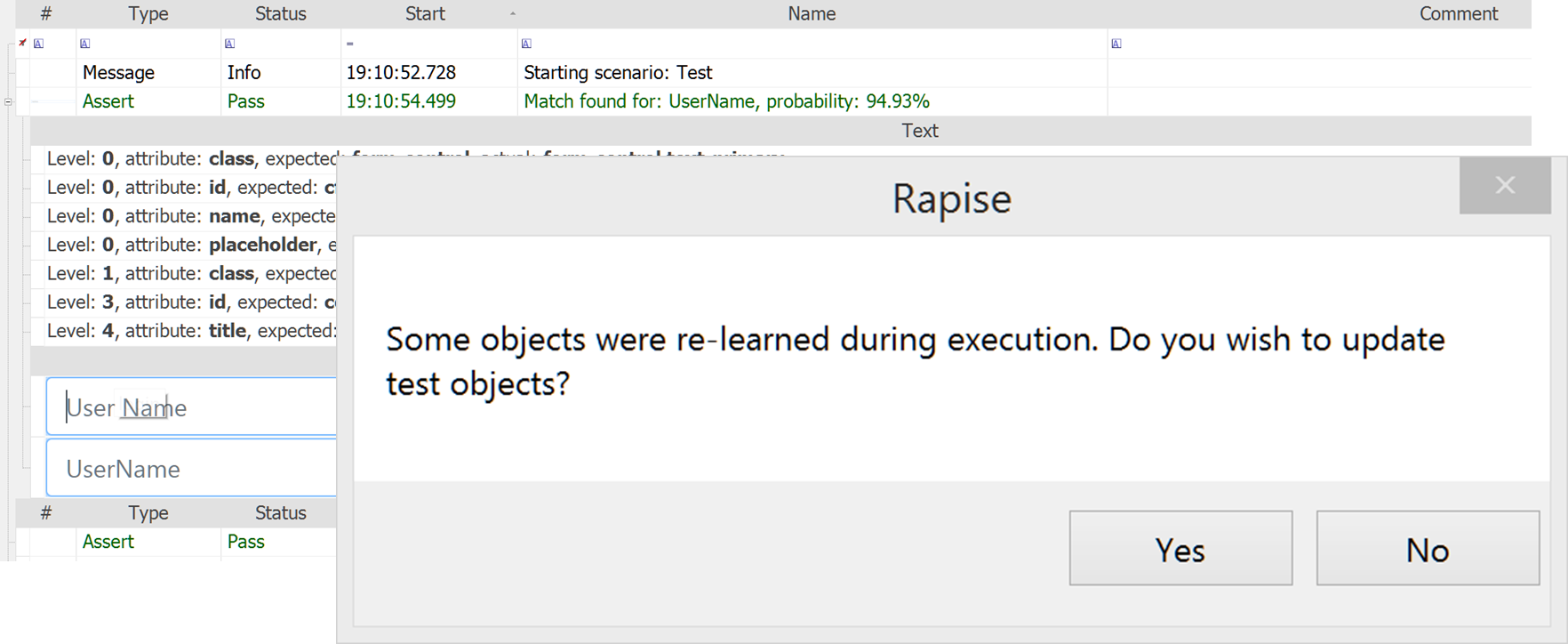

Self-Healing Tests

One of the obstacles to implementing test automation on projects is that the application’s user interface may be changing frequently, with new pushes to production daily. Therefore, it is critical that tests created one day continue to work seamlessly in the future, even with changes to the system.

Rapise comes with a built-in machine learning engine that records multiple different ways to locate each UI object and measures that against user feedback to determine the probabilistic likelihood of a match. This means that even when you change the UI, Rapise can still execute your tests and determine if there is a failure or not.
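
The general idea of keeping several locators per object and preferring the most trusted one can be sketched as follows. This is a simplified illustration of the concept, not Rapise's actual engine or API: the page is modeled as a plain dictionary, and the locator strings, scores, and update rule are all hypothetical.

```python
def find_element(page, locators):
    """Try locators from highest to lowest score; return (element, locator)."""
    for locator in sorted(locators, key=lambda loc: loc["score"], reverse=True):
        element = page.get(locator["value"])
        if element is not None:
            return element, locator
    return None, None


def record_feedback(locator, correct, step=0.1):
    """Nudge a locator's score up or down, clamped to the range 0..1."""
    delta = step if correct else -step
    locator["score"] = min(1.0, max(0.0, locator["score"] + delta))


# Several ways to locate the same button, recorded when the test was made.
submit_locators = [
    {"value": "id=submit-btn", "score": 0.9},
    {"value": "text=Submit", "score": 0.6},
    {"value": "css=form button", "score": 0.4},
]

# After a UI change the id is gone, but the button text is unchanged.
page_after_change = {"text=Submit": "<button>", "css=form button": "<button>"}

element, used = find_element(page_after_change, submit_locators)
print(element, used["value"])
record_feedback(used, correct=True)  # the tester confirms the match
```

The test keeps running because a fallback locator still matches, and the feedback step shifts trust toward the locators that survive UI changes.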

Testing Multiple Technologies

Many test automation products are only able to test one type of platform. With Rapise, your teams can learn a single tool and use it to test web, mobile, desktop, and legacy mainframe applications:

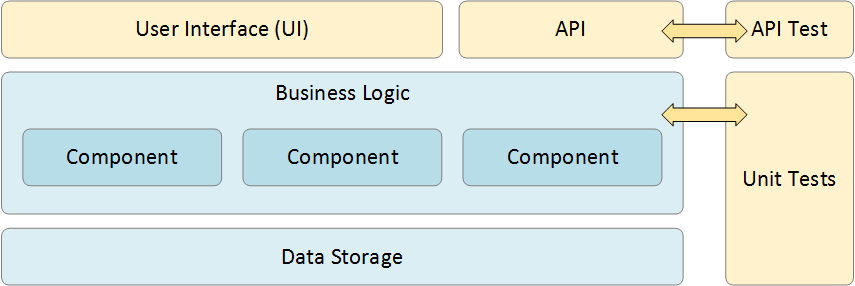

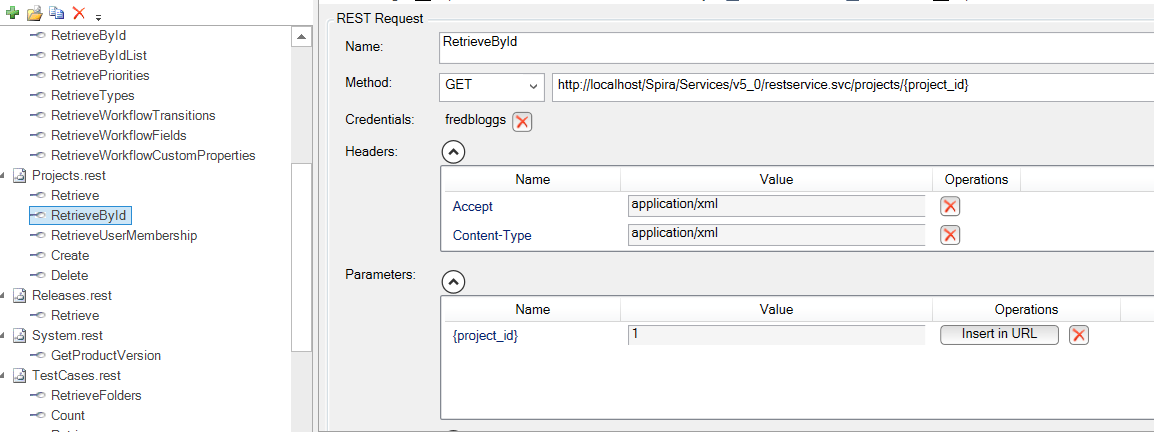

User Interface & API Testing

Rapise is unique in offering both API and UI testing from within the same application. Rapise can handle the testing of both REST and SOAP APIs, with a powerful and easy-to-use web service request editor.

One of the benefits of using Rapise is that you can have an integrated test scenario that combines both API and UI testing in the same script. For example, you may load a list of orders through a REST API, and then have the ability to verify in the UI that the orders grid was correctly populated.
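
The verification step in such a combined scenario boils down to comparing what the API loaded with what the grid displays. The sketch below is written in Python with stand-in functions and made-up data; it does not use Rapise's scripting API, and in a real test the first list would come from the REST call and the second from the UI.

```python
def fetch_orders_via_api():
    """Stand-in for the REST call that loads orders (hypothetical data)."""
    return [{"id": 101, "total": 25.0}, {"id": 102, "total": 40.5}]


def read_orders_grid():
    """Stand-in for reading the rows shown in the UI grid (hypothetical)."""
    return [{"id": 101, "total": 25.0}, {"id": 102, "total": 40.5}]


def compare_orders(api_orders, grid_rows):
    """Report orders missing from, unexpected in, or different in the grid."""
    api_by_id = {order["id"]: order for order in api_orders}
    grid_by_id = {row["id"]: row for row in grid_rows}
    shared = api_by_id.keys() & grid_by_id.keys()
    return {
        "missing": sorted(api_by_id.keys() - grid_by_id.keys()),
        "unexpected": sorted(grid_by_id.keys() - api_by_id.keys()),
        "mismatched": sorted(i for i in shared if api_by_id[i] != grid_by_id[i]),
    }


report = compare_orders(fetch_orders_via_api(), read_orders_grid())
print(report)  # all three lists are empty when the grid matches the API
```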

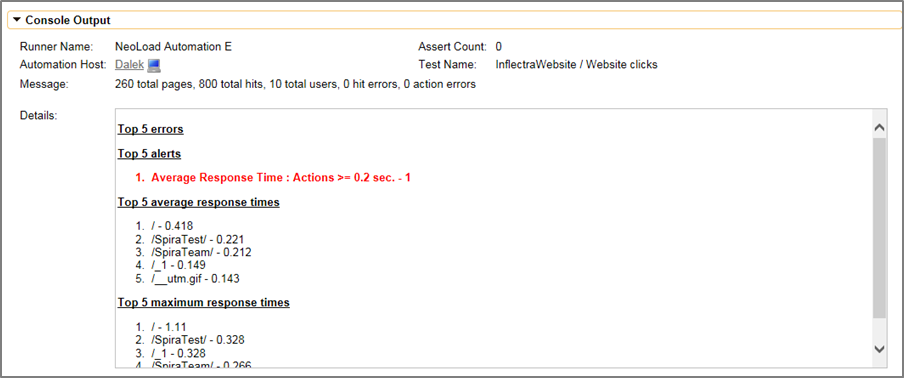

Load/Performance Testing

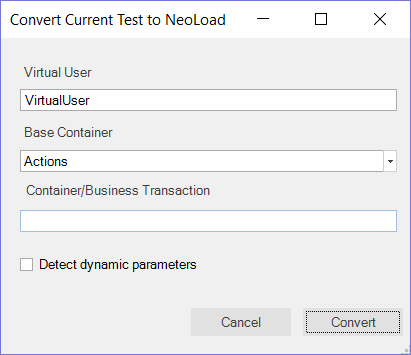

Inflectra has partnered with Neotys, a leader in performance testing and monitoring solutions. Our partnership allows you to seamlessly integrate Rapise and NeoLoad to get an integrated functional and performance testing solution. It also allows you to take an existing test script written in Rapise and convert it seamlessly into a performance scenario in the NeoLoad load testing system.

This feature allows you to convert Rapise tests for HTTP/HTTPS-based applications into protocol-based NeoLoad scripts that can be executed by a large number of virtual users (VUs) that simulate a load on the application being tested.
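
To show what virtual users mean in principle, here is a small thread-based sketch in Python. It is not NeoLoad and does not generate protocol-level traffic; `fake_request` is a hypothetical stand-in that sleeps briefly instead of calling a real application.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_request(user_id):
    """Stand-in for one HTTP request; returns the elapsed time in seconds."""
    start = time.perf_counter()
    time.sleep(0.01)  # a real test would call the application here
    return time.perf_counter() - start


def run_load(virtual_users, requests_per_user):
    """Run one session per virtual user concurrently and summarize timings."""
    def session(user_id):
        return [fake_request(user_id) for _ in range(requests_per_user)]

    with ThreadPoolExecutor(max_workers=virtual_users) as pool:
        per_user = list(pool.map(session, range(virtual_users)))

    timings = [t for user_timings in per_user for t in user_timings]
    return {
        "requests": len(timings),
        "avg_seconds": sum(timings) / len(timings),
        "max_seconds": max(timings),
    }


print(run_load(virtual_users=5, requests_per_user=3))
```

A dedicated load testing system handles far larger user counts, realistic pacing, and server-side monitoring, which a sketch like this does not attempt.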

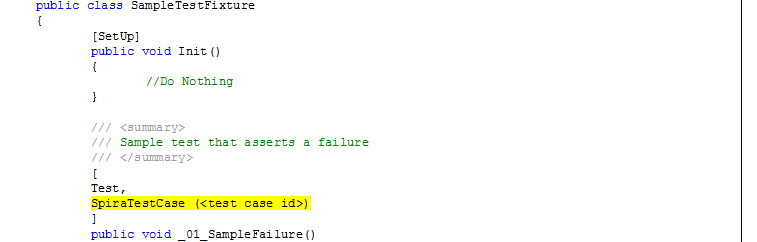

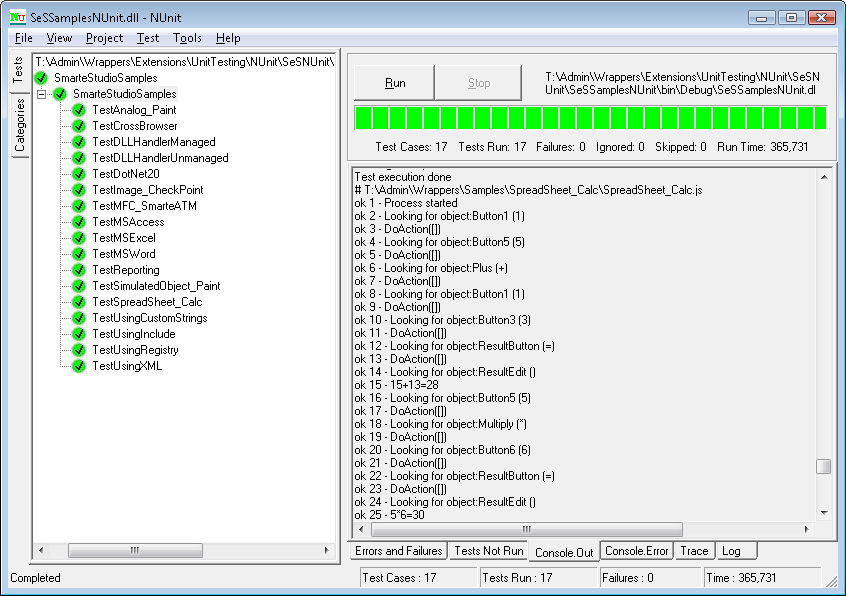

Unit Testing

Rapise also comes with a special extension for NUnit and Visual Studio’s MS-Test that facilitates the calling of Rapise tests from within unit test fixtures.

In addition, Rapise includes pre-built Visual Studio templates for NUnit and MS-Test that allow you to quickly and easily write GUI-based unit test scripts in a fraction of the time it would otherwise take.

How do I Get Started?

To learn more about Rapise and how it can help you get started with automated software testing you can: