Abstract

While it might seem that the most important consideration when choosing a test management software tool is the set of basic tool features supporting the test process itself, you should not neglect a wide range of other questions that could make or break your test management tool choice.

These include, but are not limited to support for: metrics, defect reporting, test automation, release management, requirements integration, change notification, as well as hosting options and a number of other technical issues such as APIs and user management. Along with other specific test management features themselves, these issues shall be addressed in this paper.

Introduction

Whether you are choosing a solution for a traditional phased development project or an Agile, iterative-type development (e.g. Scrum, XP, Kanban), the purpose of testing is fundamentally the same: to ensure the software does what it is supposed to do and that it does not do things that were unintended. This requires two types of testing: verification that the requirements have been met, and verification that they have not been exceeded in undesirable ways. For example, web-site user authentication that correctly requires 100% foolproof user identification would nonetheless be considered faulty if it proceeded to provide typing lessons for those who enter their name incorrectly (presumably the requirements would not explicitly say that the system should not do this).

Not only should you be testing against the requirements, you must be testing for the unexpected. The former demands traceability between the tests and the requirements while the latter, although seemingly paradoxical, needs some flexibility to allow test engineers to go outside the box.

As well as links to requirements, test management tools are most powerful when they have some interoperability with test automation and defect management. But before you begin to examine tool capabilities, you must look inwards to understand your own project needs.

Understanding Your Needs

Do you have a clear understanding of what you are looking for? If you are not sure, you're not ready to review the options. It can be an educational experience to see what vendors in the marketplace are offering, but if your project needs are unclear, the multitude of features on offer may simply add to the disarray. Don't put the cart before the horse by looking at tools and then deciding whether you need the offered functionality. If you don't know where you are going to use your smartphone, can you really decide what type of service to choose?

While it is true that you can't really be sure of the long-term direction of your organization, planning for all eventualities will lead to the perceived need for every feature possible. At the same time, you need a product that will be a real benefit over the long haul. It's a fine line to walk, but in reality, you want to keep your needs simple and avoid an over-engineered solution that will be expensive and more difficult to deploy. Don't be encouraged by the vendor to add needs to your list; if you are looking to buy a small family car, don’t leave the showroom with a large SUV.

It should be said that this paper does not advocate any specific test method as better or worse than any other; that is a subject for another day. What we shall try to offer here is a way to look at test management features and how they might support various test methods or aspects of testing. Whether those features apply to your project, and how important they are, only you can decide.

One final thought before diving into the considerations for test tool selection: getting started. Once you have selected a tool, hopefully you will quickly be able to start using it. But don’t forget the issues related to the initial startup period. How many users are you going to need to train? How easy is the tool to use? Does the tool require that you spend a period of time defining your test processes, the test data makeup and what you want your dashboard to look like? If you’re anything like the majority of people, you want to start using your new smart TV right away. You’re unlikely to follow the ‘getting started’ guide, let alone read the user manual. How quickly will your test management tool be effective? Don’t make a choice that adds so much effort up front that your project begins with a delay.

Take away: Understand your needs before examining tools.

Tool Selections

When it comes to test management support tools there is a dazzling variety of options to choose from. What makes this worse is that some vendors themselves offer multiple products, and they do this in a way that makes the selection process more complicated than it needs to be. Take HP for example. While HP’s software support tools are generally highly regarded, you are presented with HP Quality Center, HP Application Lifecycle Management, HP QuickTest Professional, HP Service Test and a combination of the last two into something called HP Unified Functional Testing Software, and this list doesn’t even include HP’s Agile project support which is found in a different family of tools altogether!

All these tools provide testing support in one form or another, which raises the question, ‘why does it have to be so complicated?’ HP is not the only perpetrator here; IBM is not much better. With Rational Quality Manager, Rational System Tester, Rational Test RealTime, Rational TTCN Suite, not to mention the test support capabilities of Rational DOORS, IBM offers a bewildering choice for test support, and that’s just within the IBM Rational line.

No doubt all these products offer some benefit, but why is the functionality so fragmented? Is it so that you, as the consumer, have to spend more money? Or is it simply badly organized product strategies brought on by a series of separate tool acquisitions? Perhaps each tool is designed for slightly different needs, but should you really be required to buy them all and then frequently jump between different tools?

Software support tools are supposed to make your job easier, but choosing and managing software support tools for an organization, or even a single project, has become a full-time job in itself, adding further to the startup cost of tool ownership. Software tool vendors need to understand that carrying a Swiss Army knife is more appealing to buyers than carrying around 10 individual tools.

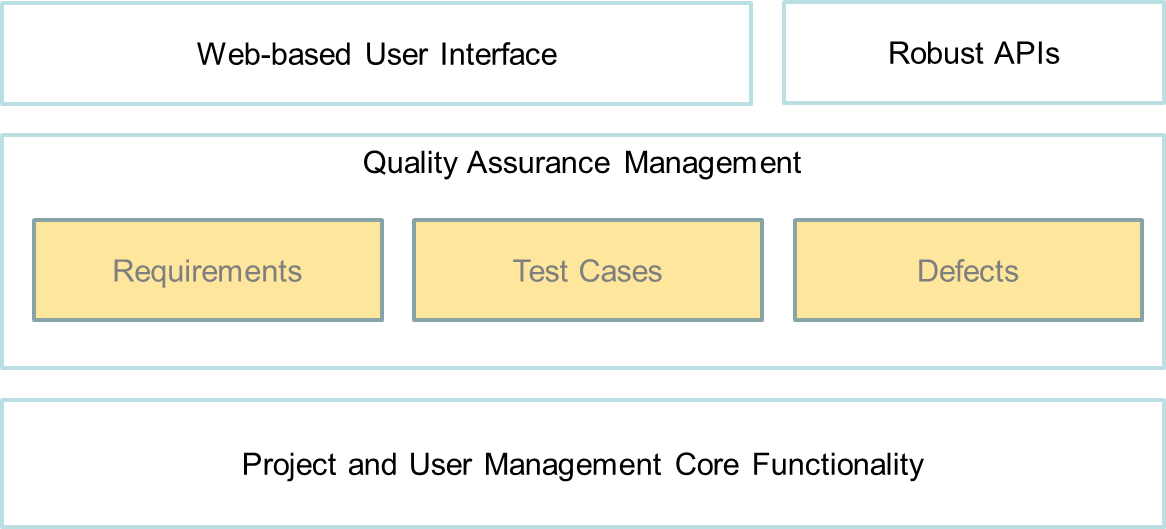

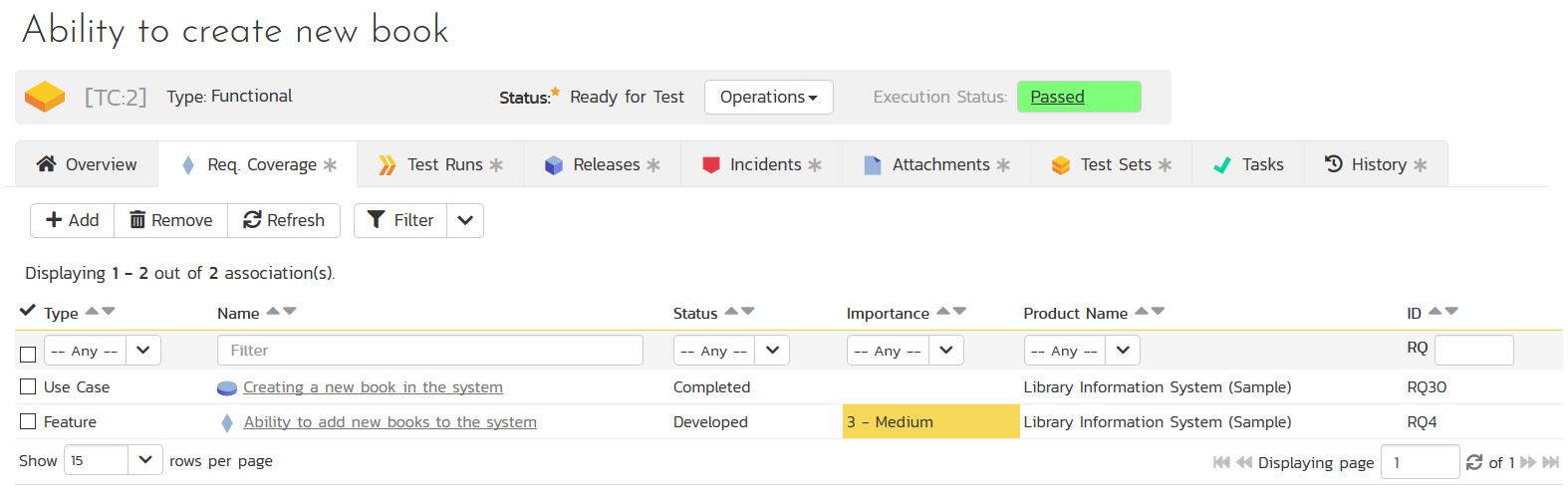

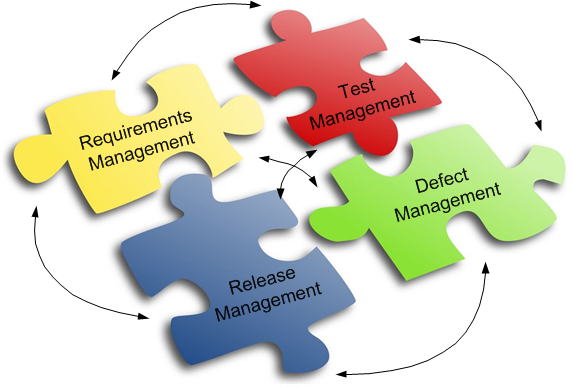

An alternative would be something like Inflectra’s SpiraTest which provides a wide range of testing capability, along with requirements management and defect reporting, together in a single package.

Figure 1 SpiraTeam's Simple Integrated Architecture

The very first consideration, therefore, is whether to look for a single solution that offers a wide variety of test support capability, which can be used as and when it is required, or to invest in a vendor with a large number of products, mixing, matching and switching as your needs change. Whichever solution you choose, make sure, as we said earlier, that the solution fits your needs and not the other way around.

Before examining the features desirable in a test management tool, consider some environmental and technical factors that might eliminate some tools from contention early.

Take away: Consider how many tools you are trying to purchase.

Your Project Environment

Before you begin to look at tool features, consider your development environment. If you already use some tools, how are they managed by your IT organization? Is the arrangement satisfactory or would a different approach be better? Are new technologies being mandated for any newly purchased tools? Are your existing products client-server applications or web-based products requiring merely a client-side browser? If so, are all browsers supported by the testing tool?

Is your IT staff able to administer a web server to support the application? Is there a corporate policy towards remotely hosted, Software as a Service (SaaS) solutions? SaaS can save significant effort and the resulting cost savings are important when calculating cost of ownership, which itself is a factor when considering purchase price. However, with the increasing concerns over security (personal data hacking) and privacy (government and private sector snooping) there has been something of a decline in the popularity of remote or third party hosted solutions, something to bear in mind. All these questions are equally important if you are choosing to lease a solution rather than make an outright purchase.

Take away: What is the best tool architecture for your organization and project? Should you buy or lease? Self-host or lease a service?

Technical Issues

Test data does not do well on its own; it is like a social creature that withers and dies without constant companionship. To cultivate the health of your test cases you need to relate them to requirements, defects and software builds or releases. So, how will you do this with your new test management software? Do you have support desk software that you would like to interface with the test software for combined issue reporting?

You might be lucky enough to find a test tool with a custom-built integration with your existing support desk product, but that can narrow your choices considerably. Also, consider the possibility that you might change help desk support tools, or that you have a home-grown report generator which pulls data from various sources to produce consolidated reports. In these cases, and for numerous other reasons of flexibility, you may need your test tool to have an open architecture or a rich API.

There are very few project-based tools these days which do not require some form of user authentication, and the best ones allow for authentication against a project or organization level list of users using LDAP or Active Directory. If neither of these is used within your organization, one of them, or something like them, is likely to be introduced at some time in the future. You would do well to plan for that eventuality. Also, to fully support the worldwide testing and use of your products, consider whether a tool will accommodate multiple languages and multiple time zones.

If you need to run tests on mobile devices to test products in the field or simply for the convenience mobile devices offer, look for tools with specific support for touch-screen tablets or even smart phones. Perhaps your project requires an element of testing in an environment where tablets or smart phones are easier to use than laptops or other computers. You may not yet use mobile devices for test management; however, it may be just around the corner. There are not many tools which explicitly support such environments, but computing in general continues to become more mobile, so why shouldn’t test management software?

We shall not go into the details of testing mobile applications. If this is your area of interest, look for test management tools which run on the same mobile devices on which you plan to test. Bear in mind that the operation of the test tool might be more likely to interfere with the operation of the device or app being tested than testing on traditional platforms.

Take away: Do custom integrations exist; do your preferred tools have an Open Architecture/API; is there support for corporate level user authentication; do you need mobile access to your test tool?

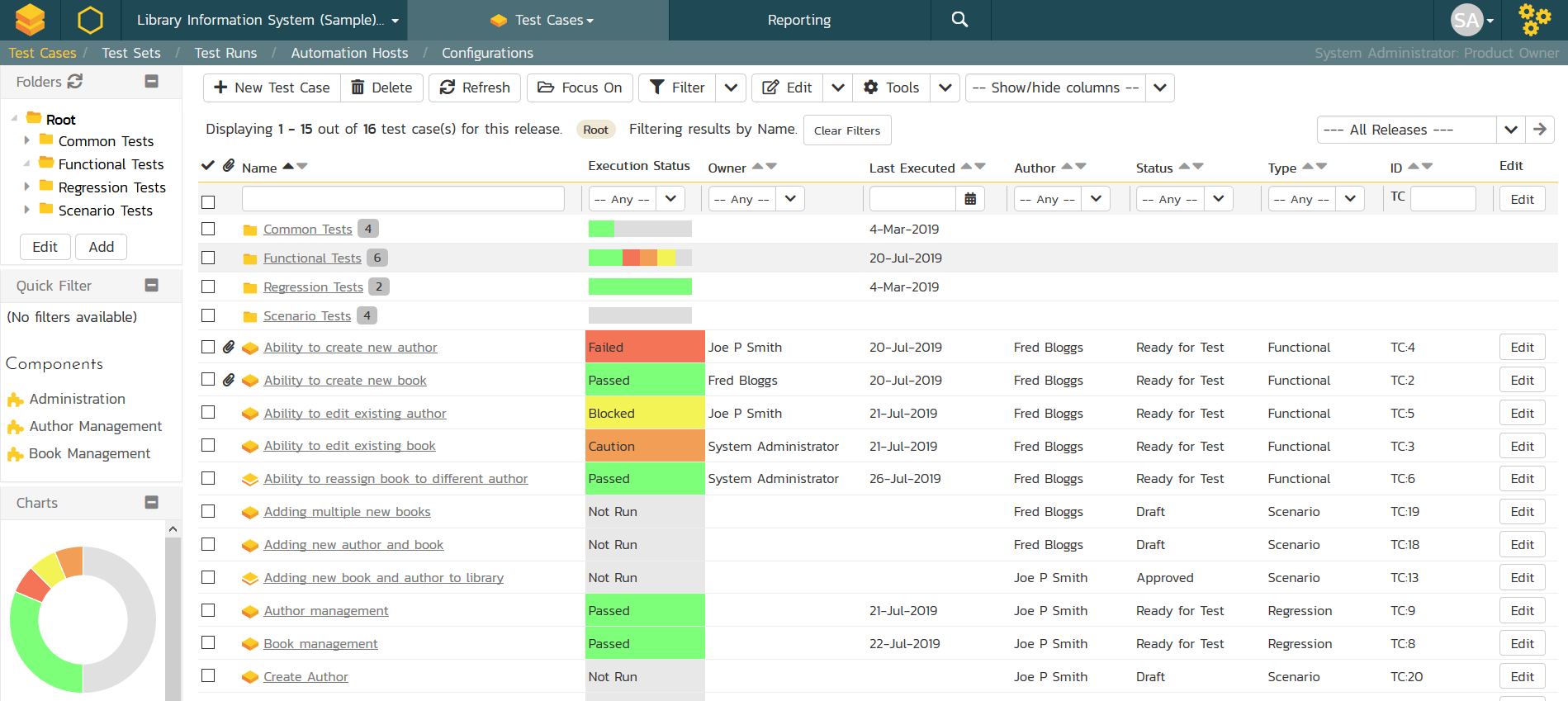

Test Management Features

The list of features offered by most test management tools is sufficiently extensive that it can take several pages just to summarize, especially if the features are specified in detail. While it would be possible to spend time addressing needs such as the ability to specify the individual steps of a test case, or searching for failed tests, or simply recording observations during a test, we must assume all tools provide some basic capability; otherwise we could spend too much time on excruciating detail. Vendors such as Inflectra offer simple comparative lists of features across a number of industry tools, so we shall concentrate here on less common capabilities which can, nevertheless, make a big difference in the success or failure of your tool choice.

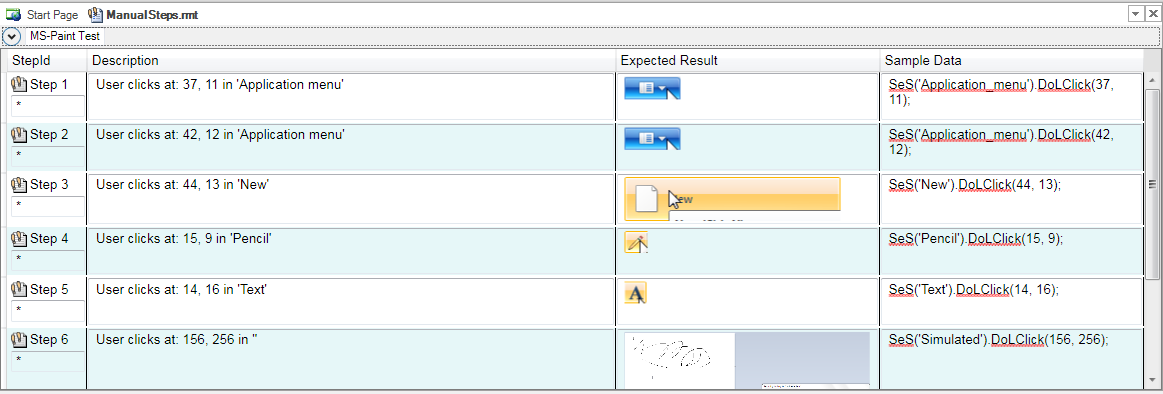

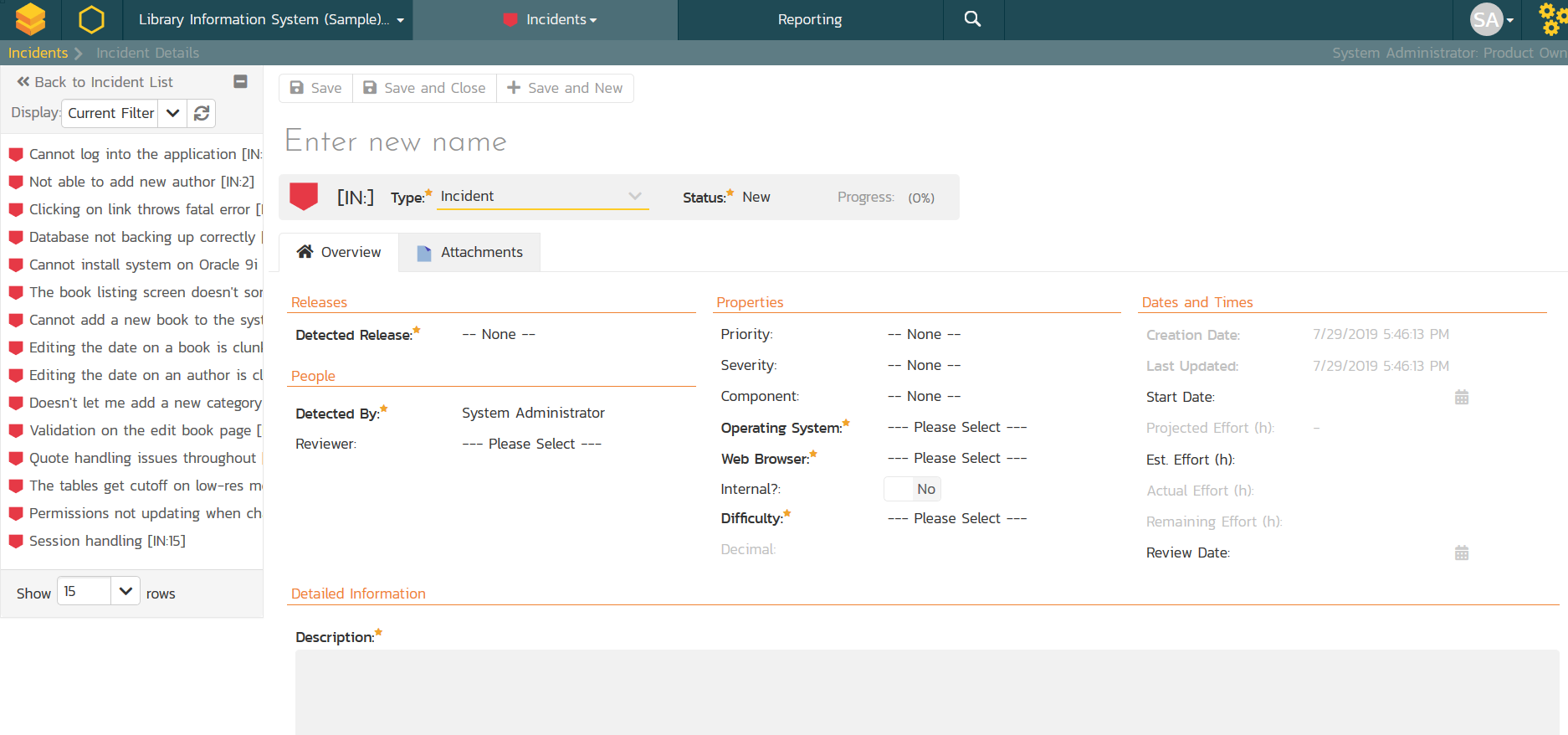

Test Step Granularity

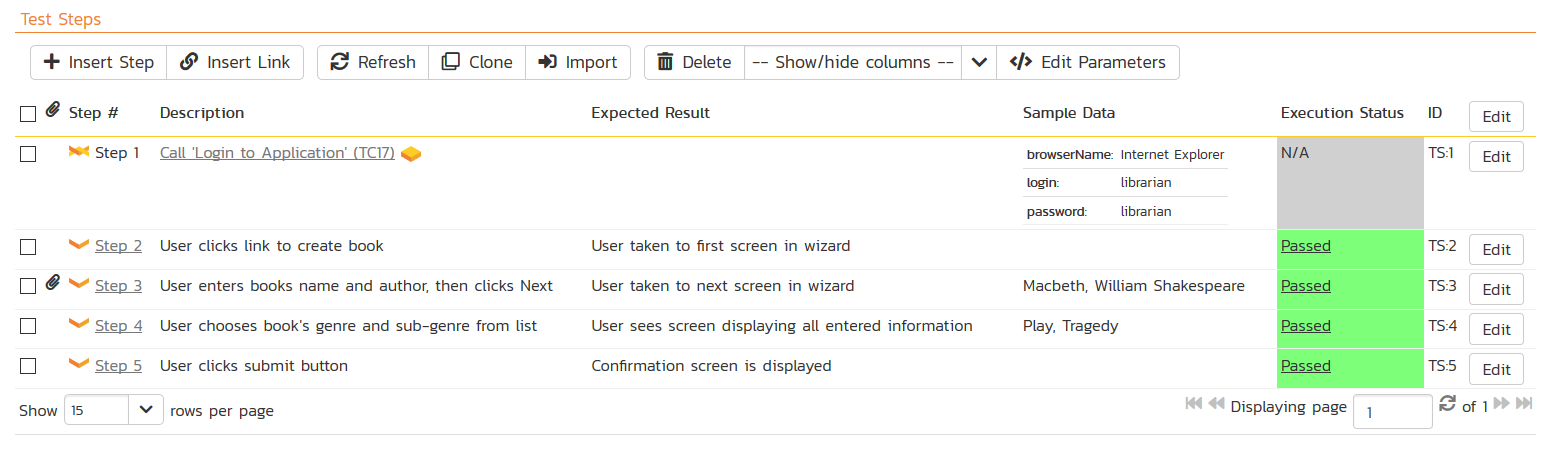

Any decent test management tool will provide a range of information for each test case such as sample data to be used in the test, comment space for the tester and, of course, expected test results. But not all tools provide the same information for each test step!

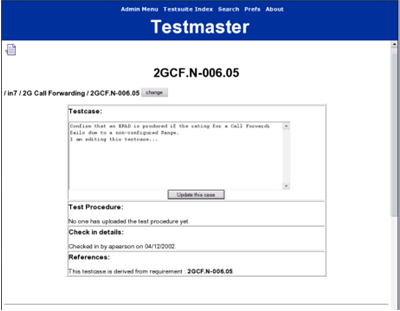

Figure 2 Example of Simple Test Case

But if you think about it, step-level information is almost essential. How should a set of input data be specified for use over a series of test steps when each step might require more input data? Without the ability to provide data at the step level you will be forced to work hard to make it clear how the sample data as a whole should be used in each step. Would it not also be useful to see exactly how the system should respond at each step, rather than for the test case as a whole? Without this level of granularity, it becomes difficult to make it clear what happened, and when, in the case of test failure. It is always helpful to know at which step the test failed rather than just the fact that the test case failed as a whole. They say the devil is in the details and the purpose of testing is to find the devil so that it can be exorcised. Detailed information will help you work smarter, not harder.

Figure 3 Example of Detailed Test Steps
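The step-level granularity described above can be illustrated with a small data model. This is only a sketch under stated assumptions: the class names `Step` and `ManualTestCase`, their fields, and the login example are hypothetical and do not reflect how any particular tool stores its data. The point is simply that when each step carries its own sample data and expected result, the exact step at which a test failed can be reported.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Step:
    """One step of a test case, with its own data and expected result."""
    description: str
    sample_data: str
    expected_result: str
    actual_result: Optional[str] = None  # filled in when the step is executed

    @property
    def passed(self) -> Optional[bool]:
        # None means the step has not been executed yet.
        if self.actual_result is None:
            return None
        return self.actual_result == self.expected_result


@dataclass
class ManualTestCase:
    """Hypothetical test case made up of individually checked steps."""
    name: str
    steps: List[Step] = field(default_factory=list)

    def first_failed_step(self) -> Optional[int]:
        """Return the 1-based number of the first failing step, or None."""
        for number, step in enumerate(self.steps, start=1):
            if step.passed is False:
                return number
        return None


login = ManualTestCase("User login", [
    Step("Open login page", "URL of the site", "Login form shown"),
    Step("Enter user name", "jsmith", "Name accepted"),
    Step("Enter password", "wrong-password", "Error message shown"),
])
login.steps[0].actual_result = "Login form shown"
login.steps[1].actual_result = "Name accepted"
login.steps[2].actual_result = "User logged in"  # defect: bad password accepted

print(login.first_failed_step())  # prints 3
```

With results recorded only at the test case level, the same run could report nothing more than "User login failed".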

Take away: Examine the granularity of test information.

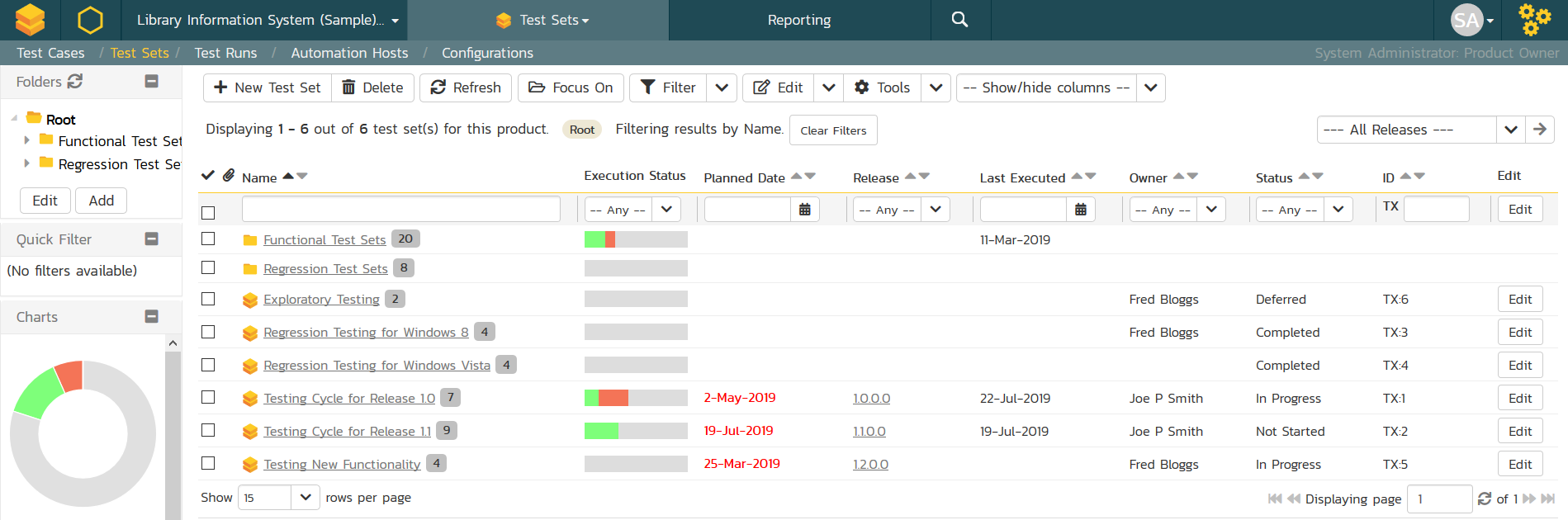

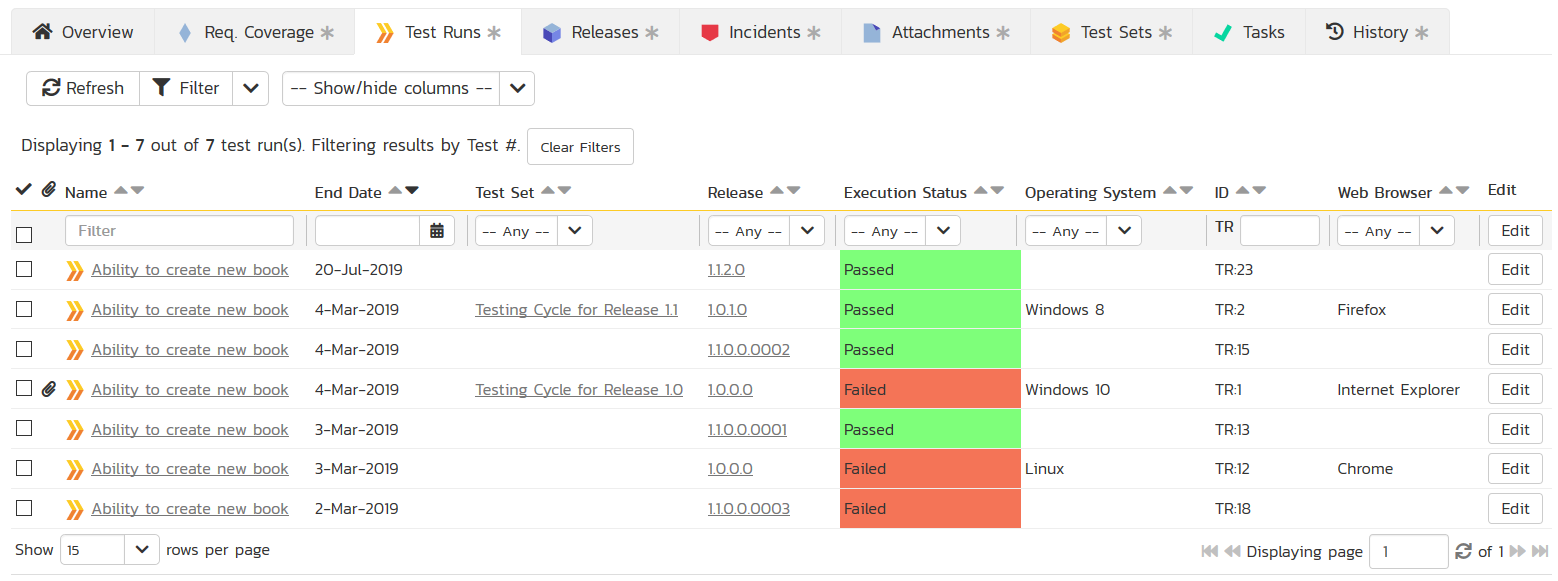

Test Runs & Work Packages

Unlike requirements, which represent (desired) results, test cases represent tasks - tasks that are going to be repeated. As such, they can be allocated directly to individuals for execution (the tests, not the individuals). If you can group a number of tests together, then you can create convenient work packages. If these work packages can then be allocated to individuals within the test management tool itself, then you can reduce the need for individuals to switch between tools (e.g. a project management tool and the test tool) to see what must be done before actually doing it.

It may also be helpful to group test cases for other reasons, for example, a group of tests to represent regulatory or legal concerns which may need to be tested under different conditions than the other tests. It sometimes becomes necessary to create an ad-hoc group of tests, perhaps to quickly check the success or failure of a specific change in implementation.

Using a test management tool that supports the creation, allocation and flexible modification of test execution packages makes it easier for test engineers and project managers alike to visualize and manage the entire testing process.

Figure 4 Granularity Increases Insight

Just like the earth, most data structures are not flat. You could create a series of test cases that is one long list, from A to Z, but not only would that be boring, it would be unlikely to represent the reality of your testing needs. The reason testing is a complex problem is that the software products or systems being tested are themselves complex. A flat list of tests is not going to be enough. Creating a hierarchy of test cases makes much more sense, but it’s more than simply grouping test cases together into larger packages.

What would really be nice would be for a test case to be useable in place of a single test step in some other test case. Not only is the earth round (sort of) but it’s covered in bumps, dips, flat stretches and unexpected turns. Software is too. Find a test management tool that provides flexible test cases, test steps and combinations of the two to accommodate complexity and variation in the texture of your software products.
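One way to picture a test case being used in place of a single step is a nested structure that can be flattened into an execution order. The sketch below is illustrative only; the `Step` and `Case` classes and the example test names are assumptions made for this paper, not the data model of any specific product.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Union


@dataclass
class Step:
    description: str


@dataclass
class Case:
    name: str
    # Each item is either a plain step or another test case used as a step.
    items: List[Union[Step, "Case"]] = field(default_factory=list)

    def flatten(self) -> Iterator[Step]:
        """Yield every step in execution order, expanding nested cases."""
        for item in self.items:
            if isinstance(item, Case):
                yield from item.flatten()
            else:
                yield item


log_in = Case("Log in", [Step("Open login page"), Step("Enter credentials")])
check_out = Case("Check out", [log_in, Step("Add item to cart"), Step("Pay")])

print([step.description for step in check_out.flatten()])
# prints ['Open login page', 'Enter credentials', 'Add item to cart', 'Pay']
```

Because "Log in" is defined once and reused, a change to the login procedure needs to be made in only one place.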

How many times do you find yourself in the middle of something serious when the phone rings? You’d like to finish the job in hand but you can see from caller ID that it’s the boss and he doesn’t like to be kept waiting. He also talks a lot and usually has some job for you that just ‘cannot wait!’ Ok, so the scenario may not be a common one, but there are all manner of reasons why you often need to stop what you’re doing and come back to it later. This is as true for test runs as it is for anything else. Not only would it be convenient, but it would save time and money, reduce frustration and preserve your relationship with the boss, if your test management tool would allow you to pause a test run and come back to it later. The DVR (or VCR for anyone nostalgic) has a pause button, so why doesn’t the test tool? Well, some of them do. Add that to your feature wish list.
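Pausing a test run essentially means saving which steps have already been executed so that the run can pick up at the next one. The following is a minimal sketch of that idea, assuming a hypothetical `RunProgress` class that serializes its state to JSON; real tools will store this state in their own way.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class RunProgress:
    """Hypothetical record of a partly executed test run."""
    test_name: str
    total_steps: int
    results: List[str] = field(default_factory=list)  # one entry per executed step

    @property
    def next_step(self) -> int:
        # 1-based number of the step to execute next.
        return len(self.results) + 1

    @property
    def finished(self) -> bool:
        return len(self.results) >= self.total_steps

    def record(self, outcome: str) -> None:
        if self.finished:
            raise ValueError("all steps have already been executed")
        self.results.append(outcome)

    def pause(self) -> str:
        """Serialize the run so it can be stored and resumed later."""
        return json.dumps(asdict(self))

    @classmethod
    def resume(cls, saved: str) -> "RunProgress":
        return cls(**json.loads(saved))


run = RunProgress("Check out", total_steps=4)
run.record("pass")
run.record("pass")
saved = run.pause()            # the phone rings

later = RunProgress.resume(saved)
print(later.next_step)         # prints 3
```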

Take away: Can you group tests into work packages? Can tests be organized as hierarchies? Can test runs be paused?

Test Case Generation

Wouldn’t it be nice if a tool could write your test cases for you? Then you could go home early! Well, perhaps it is not as simple as that, but nevertheless, it is usually helpful when something can be automated. There are a number of automated test-case generation methods being used today; for example, Rapise from Inflectra provides an automated manual test generation mode.

Automated test case generation is outside the scope of this paper, but it is mentioned here so that if it is something you wish to do, you can consider the interface between tools that offer such automation, and your test management tool of choice. We shall look at automatic test case generation from requirements separately.

Take away: Are test automation solutions important to you?

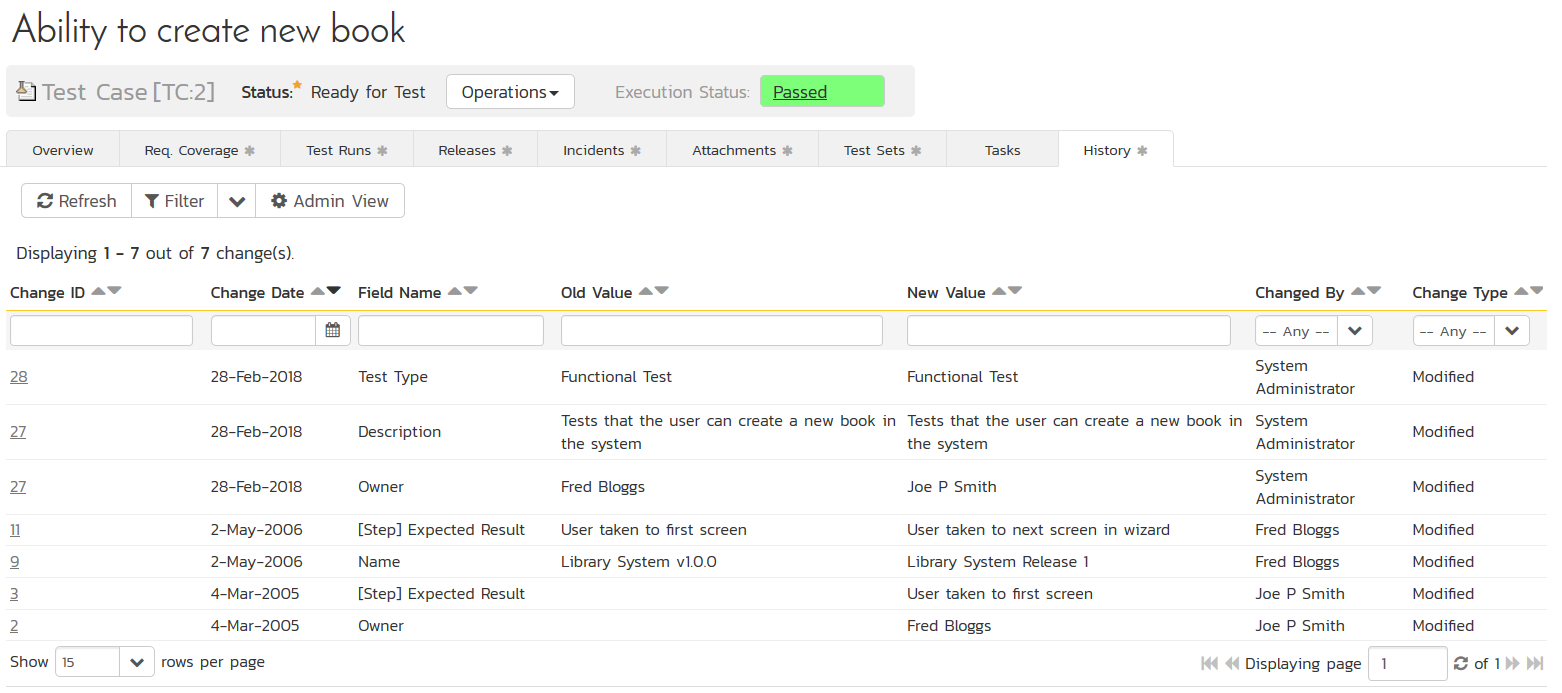

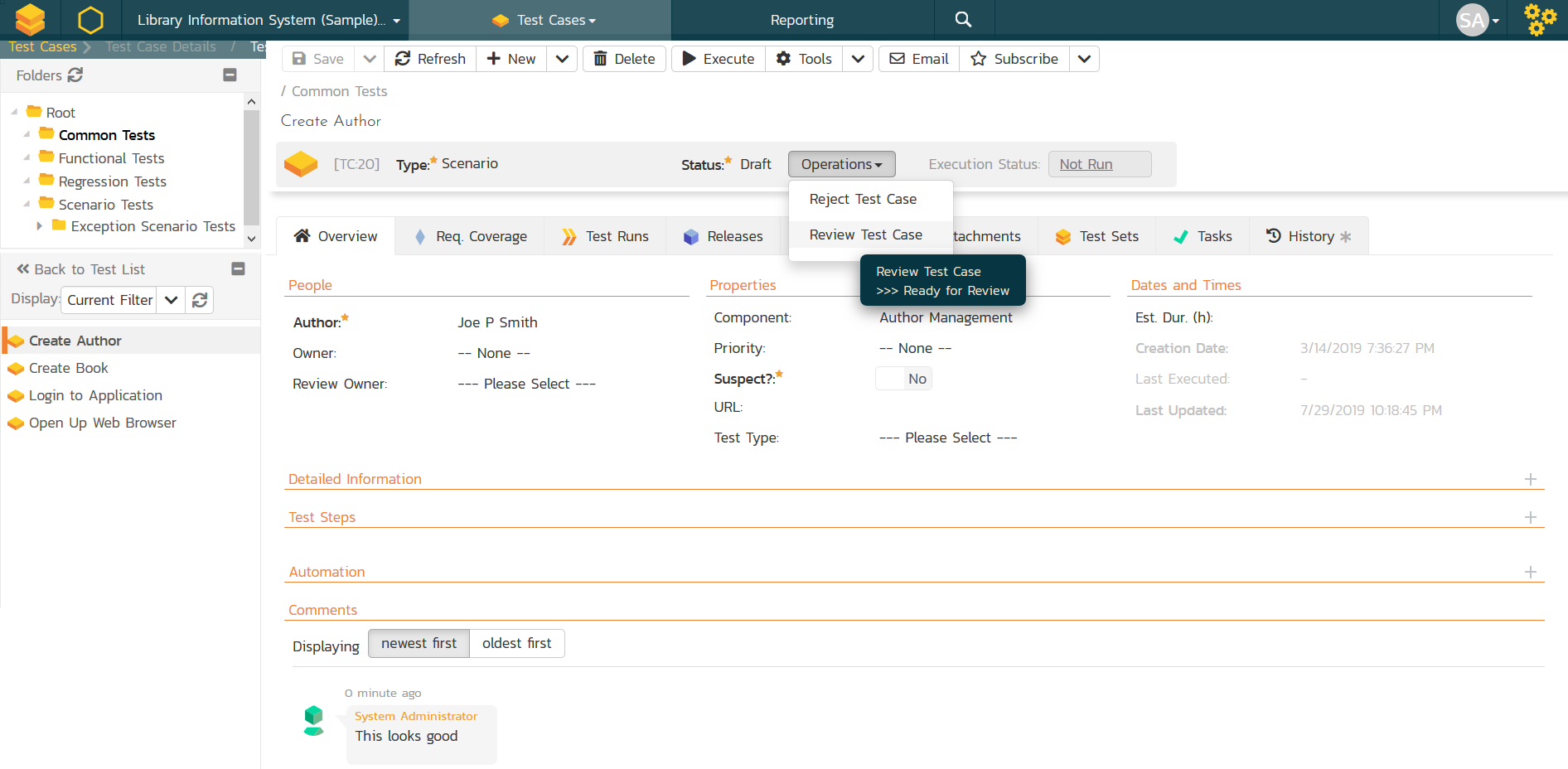

Audit Trails, Change and Versioning

As with any other information, test cases change. While the change may be a direct reflection of other changing information, e.g. requirements or defects, you want a tool that not only manages change but makes it easier to live with. Nobody wants to manage change history or configuration management manually, so the tool had better offer some help. At a minimum, any decent test management tool will keep an audit trail of changes, including who made a change and when. It would be an added bonus if a tool allows the user to say ‘why’ a change is being made. However, tools that make this mandatory are just plain annoying. It doesn’t enforce any kind of accountability as users in a hurry will just enter anything to get past the obligatory entry.

A tool with a little more power might offer full versioning of test cases, or test steps. Not just an audit trail, but a full (or seemingly full) version of the test at each point in time. This ensures the context of the change is more easily understood. There is a chance that when a test changes, so does the sample input data and the expected result. It would be nice to capture the change to all three as a whole.
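The difference between an audit trail and full versioning can be sketched in a few lines. In the hypothetical model below, every change produces a complete snapshot of the test, so the steps, sample data and expected result are always captured together. The names `Version` and `VersionedTest`, and the optional `reason` field, are assumptions made purely for illustration.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class Version:
    """A complete, immutable snapshot of a test at one point in time."""
    number: int
    steps: str
    sample_data: str
    expected_result: str
    changed_by: str
    changed_at: datetime
    reason: str = ""  # optional: the 'why' is allowed but never forced


class VersionedTest:
    def __init__(self, steps: str, sample_data: str,
                 expected_result: str, author: str) -> None:
        first = Version(1, steps, sample_data, expected_result,
                        author, datetime.now(timezone.utc))
        self.history: List[Version] = [first]

    @property
    def current(self) -> Version:
        return self.history[-1]

    def update(self, author: str, reason: str = "", **changes: str) -> Version:
        """Store a new full snapshot; earlier versions are left untouched."""
        new = replace(self.current,
                      number=self.current.number + 1,
                      changed_by=author,
                      changed_at=datetime.now(timezone.utc),
                      reason=reason,
                      **changes)
        self.history.append(new)
        return new


test = VersionedTest("Enter amount and submit", "100.00", "Payment accepted", "alice")
test.update("bob", reason="Limit lowered",
            sample_data="50.00", expected_result="Payment accepted up to limit")

print(test.current.number)          # prints 2
print(test.history[0].sample_data)  # prints 100.00
```

An audit trail alone would record that "bob" changed two fields; the snapshots make it possible to see the whole test as it stood before and after.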

A full discussion of how to manage and record the changes to links to and from test cases would take up a large number of pages and so we shall not address it in detail here; suffice to say that such changes can be important, so consider each tool’s ability to track them. While this should not be a deal breaker in your choice of tool, ask yourself whether you need to know about relationship changes and, if you do, how you might go about it.

Also, in the realm of change, consider change control. Is it important to you? Are you under any regulatory or otherwise imposed obligation to do it? Do you care? The simplest means of control is access to the tool or the data. Does the tool offer read-only access to a configurable category of users? Can the tool manage access at lower levels of granularity so that users are able to change the tests that pertain to only them? Even better, can the tool control access to certain parts of a test? This is a very valuable capability, especially for test tools where you want to allow some test engineers to record the results of a test but not change the test description itself. Of course, you don’t like to think of your test engineers as people who would change the test instead of the result to achieve a ‘pass’, but such things happen as a result of the best of intentions. “When I ran the test, it became obvious that the test steps were wrong so rather than waste time talking to other people I changed it. Now the test passes perfectly.” That scenario is actually not as unlikely as you might think.
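The idea of letting some users record results without being able to change the test itself amounts to a simple permission check. The sketch below assumes three hypothetical roles and three hypothetical permissions; actual tools define their own roles and may apply them per project, per folder or per test.

```python
from enum import Flag, auto


class Permission(Flag):
    VIEW = auto()
    RECORD_RESULT = auto()
    EDIT_TEST = auto()


# Hypothetical role definitions, for illustration only.
ROLES = {
    "observer": Permission.VIEW,
    "tester": Permission.VIEW | Permission.RECORD_RESULT,
    "test_author": Permission.VIEW | Permission.RECORD_RESULT | Permission.EDIT_TEST,
}


def is_allowed(role: str, action: Permission) -> bool:
    """Return True if the role has been granted the requested action."""
    granted = ROLES.get(role, Permission(0))  # unknown roles get nothing
    return (granted & action) == action


print(is_allowed("tester", Permission.RECORD_RESULT))  # prints True
print(is_allowed("tester", Permission.EDIT_TEST))      # prints False
```

Under this arrangement the well-meaning tester in the scenario above could record a failure but would have to ask a test author to correct the steps.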

If a tool is going to go above and beyond the call of duty, it may even provide a change control process where changes can be proposed, reviewed, approved or denied and applied as appropriate. Just be careful not to be attracted by that degree of control when applying the idea to an Agile project; it can easily cripple the speed at which you want to work.

One other thing worth mentioning here is change notification. As part of good collaboration, it is important for team members to be kept abreast of changes when either the test cases themselves change, or when the requirements upon which the tests are based change. Test engineers want to find out quickly and easily when the tests they are using may be out of date. Preferably, the test tool should offer proactive change notification, perhaps an email or a message the next time the user starts the test tool. At a minimum, the test engineer should be able to see whether a test case is stale simply by looking at it. Complex queries to make such a determination are quickly going to get forgotten in the heat of the moment as project pressures build.
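Deciding whether a test case is stale can be as simple as comparing dates: a test is suspect if any requirement it is linked to has changed since the test was last reviewed. The sketch below assumes hypothetical `Requirement` and `LinkedTest` records with those two timestamps; the identifiers and dates are invented for the example.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List


@dataclass
class Requirement:
    key: str
    last_modified: datetime


@dataclass
class LinkedTest:
    name: str
    requirement_keys: List[str]
    last_reviewed: datetime


def stale_tests(tests: List[LinkedTest],
                requirements: List[Requirement]) -> Dict[str, List[str]]:
    """Map each stale test to the requirements that changed after its review."""
    by_key = {req.key: req for req in requirements}
    stale: Dict[str, List[str]] = {}
    for test in tests:
        changed = [key for key in test.requirement_keys
                   if key in by_key
                   and by_key[key].last_modified > test.last_reviewed]
        if changed:
            stale[test.name] = changed
    return stale


requirements = [
    Requirement("REQ-1", datetime(2024, 3, 1)),
    Requirement("REQ-2", datetime(2024, 5, 20)),
]
tests = [
    LinkedTest("Login works", ["REQ-1"], datetime(2024, 4, 1)),
    LinkedTest("Password reset", ["REQ-1", "REQ-2"], datetime(2024, 4, 1)),
]

print(stale_tests(tests, requirements))
# prints {'Password reset': ['REQ-2']}
```

The result of such a check is what a tool would surface as a visible "stale" marker or send as a proactive notification.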

Take away: Do you need audit trails or versioning? Do you want to control changes to tests? How helpful would automatic notification be?

Collaboration

No man is an island, and test engineers are no exception. While a certain degree of autonomy is healthy, complete separation hurts team dynamics and reduces the exchange of intelligence which teamwork brings. There are a number of ways to help those involved in the test process to share ideas and collectively solve problems, some are a part of, or closely integrated with, the test tool, and others are independent.

The independent options such as email, social media and face-to-face meetings are better than nothing, but require more effort on the part of the participants to reference exactly what is being discussed. Having to jump back and forth between an email trail and the test tool to which it refers causes frustration in most people working to a deadline.

Collaboration features embedded within the test management tool encourage teamwork and reduce user disenfranchisement. A close integration between a test tool and an email system or a corporate collaboration tool can help greatly, but a better solution is a discussion thread system, either closely integrated with the test tool or better still, as an integral part of it.

Discussion threads are specifically designed for collaborative conversation; email is not. A discussion thread means not having to continually refer to the test in question or refer back to a previous email on the same subject. An added bonus in some tools is that the discussion becomes part of the audit trail or versioning of the tests and so captures the whys and the wherefores of the change.

Take away: Ensure your tool of choice supports electronic collaboration.

Test Results Analysis

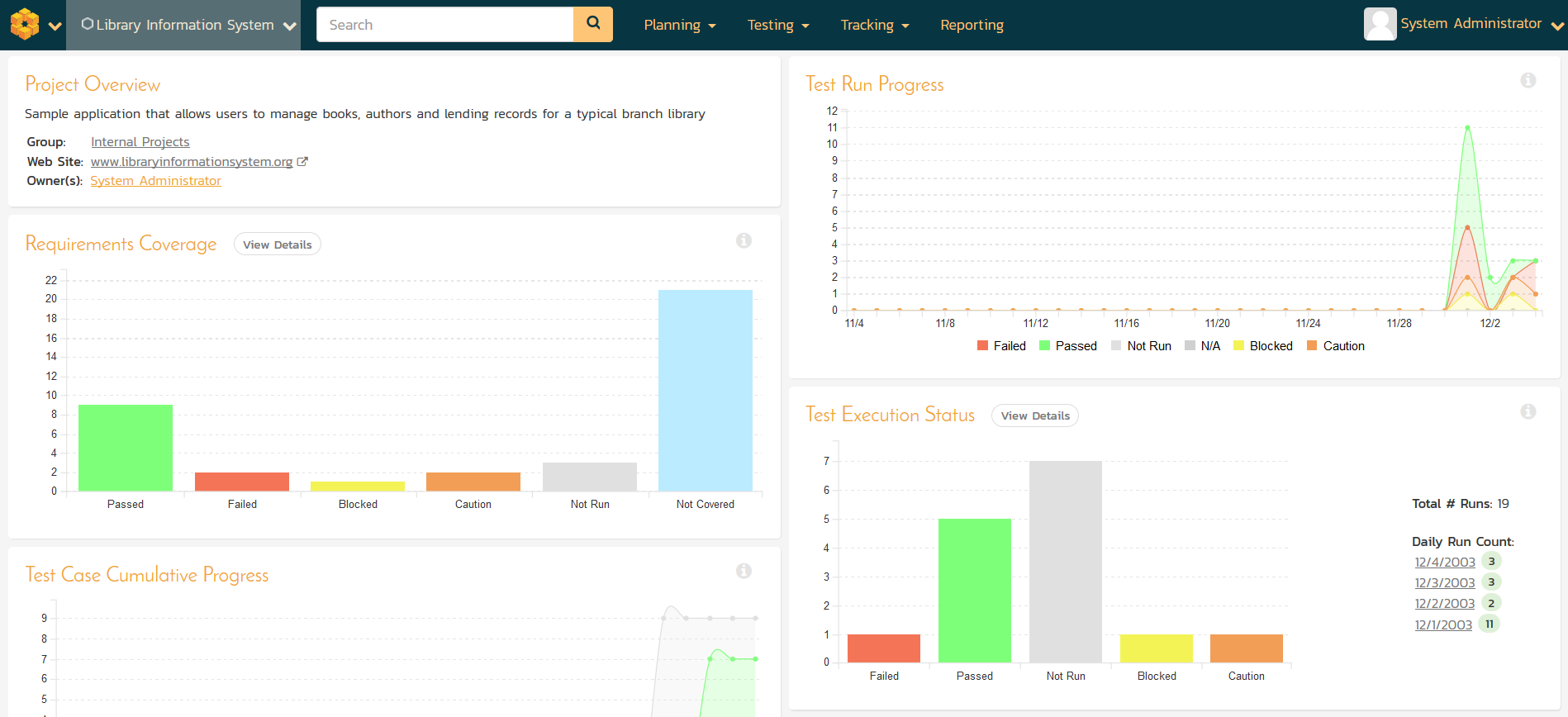

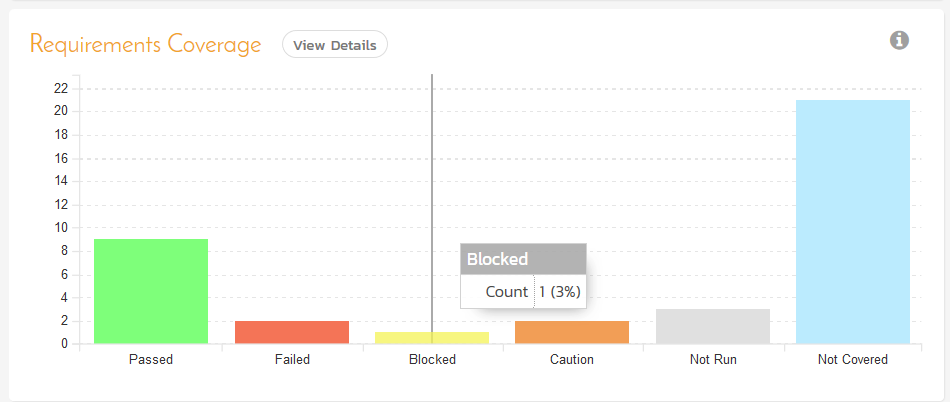

Managing the process of writing and then running tests is perhaps the first capability a test engineer will think of when it comes to a test management tool. But managers or product owners are going to think slightly differently and be more interested in the results, not how they were arrived at; test engineers are concerned about the journey, managers are interested in the destination.

Once test results have been obtained there are an enormous number of ways that the data can be used. While it may be obvious that the test results can tell you about the quality of the product being developed, it may be less clear that there are a whole host of ways the data can be diced and sliced to offer even greater insight into things such as the effectiveness of the development process and the existence of any high risk areas.

Comparing test runs can provide some of the most useful insight. A test or set of tests that repeatedly fails points to an area of the product that needs special attention, or to an area of the development process, or even to part of the team that needs special attention. Equally, a test that has repeatedly passed but then suddenly fails might indicate recent additions or changes to the software that should be re-evaluated, as you don’t really want to undo previously attained stability. Test run comparison is also useful if you are looking at changes in defect rates per iteration in an Agile environment. Whatever type of project you are part of, comparing test runs can give you powerful trend data which can help you react quickly to emerging problems. Look for test run comparison capabilities in the tools you review.
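The two patterns described above, tests that fail repeatedly and tests that fail after a history of passing, are straightforward to detect once the results of successive runs are available side by side. The sketch below assumes each run is a simple mapping of test name to "pass" or "fail", ordered from oldest to newest; the function name and data layout are hypothetical.

```python
from typing import Dict, List


def compare_runs(runs: List[Dict[str, str]]) -> Dict[str, List[str]]:
    """Classify the failures in the newest run against all earlier runs.

    `runs` is ordered oldest to newest; each run maps a test name
    to the string "pass" or "fail".
    """
    latest, earlier = runs[-1], runs[:-1]
    regressions: List[str] = []
    persistent: List[str] = []
    for test, status in latest.items():
        if status != "fail":
            continue
        history = [run[test] for run in earlier if test in run]
        if history and all(result == "pass" for result in history):
            regressions.append(test)   # passed every time until now
        elif history and all(result == "fail" for result in history):
            persistent.append(test)    # has never passed
    return {"regressions": sorted(regressions),
            "persistent_failures": sorted(persistent)}


runs = [
    {"login": "pass", "search": "fail", "export": "pass"},
    {"login": "pass", "search": "fail", "export": "pass"},
    {"login": "fail", "search": "fail", "export": "pass"},
]

print(compare_runs(runs))
# prints {'regressions': ['login'], 'persistent_failures': ['search']}
```

Here "login" would prompt a look at the most recent changes, while "search" points to an area that has needed attention for some time.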

Look for a tool that can offer not only metrics and statistics, but will help with some visibility into the possible meaning of the results. A tool that offers this information in personalized dashboards will make everyone’s life easier. Dashboards should be flexible because, as we know, different types of user have different needs when it comes to analyzing test results.

Managers often want summaries while engineers want to pinpoint anomalies or problems with specific areas of the software for which they are responsible and then drill down from there. Consider whether a tool offers more than just a default set of metrics; look for the ability to manipulate the results and present the data in user-defined ways with the use of configurable display characteristics such as color and symbols. It may not be necessary for your project, but when it comes to metrics and statistics, some managers ask for the strangest things!

Figure 5 Example Dashboard from Inflectra's SpiraTeam

Finally, think about whether your tool of choice can combine data from multiple projects into a single dashboard. While we are not addressing Project Portfolio Management in this paper, to those managers with oversight of many projects, this is an invaluable way to keep on top of things. To take just one example: perhaps by comparing the test status and quality of multiple projects a manager might see that one struggling project may benefit from a reallocation of resources from other thriving projects.

Take away: Make the most of metrics. Look for tools with flexible data manipulation and configurable dashboard display options.

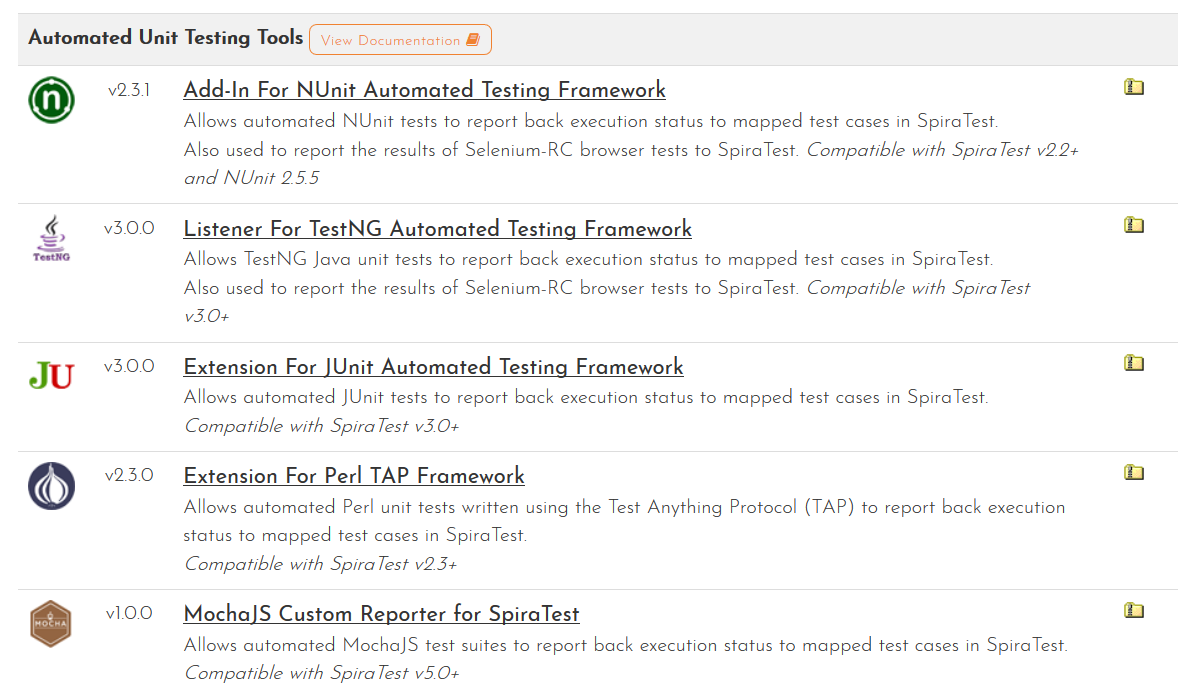

Test Automation

Testing is one of the greatest consumers of manpower in software development projects. Anything that can reduce this manual effort is going to seriously reduce the cost of overall development. Test automation saves time and effort because testing, unlike some project activities, is repeated, sometimes frequently, so you would do well to consider test management tools with in-built support for automation as well as links to other tools which provide test automation such as Rapise, TestComplete, Ranorex, or Selenium WebDriver. If you plan to use an xUnit testing framework such as TestNG, NUnit or JUnit, make sure your test management tool has an integration with the one(s) you have chosen.

When reviewing test management tools with some built-in test automation, look for the ability to manage test scripts as well as schedule and launch tests both locally and on remote hosts to help with remote testing. If the test tool can pass parameters to your test cases, whether they are manual or automated tests, then score it bonus marks!

Take away: If you plan on using test automation, integrate it with test management.

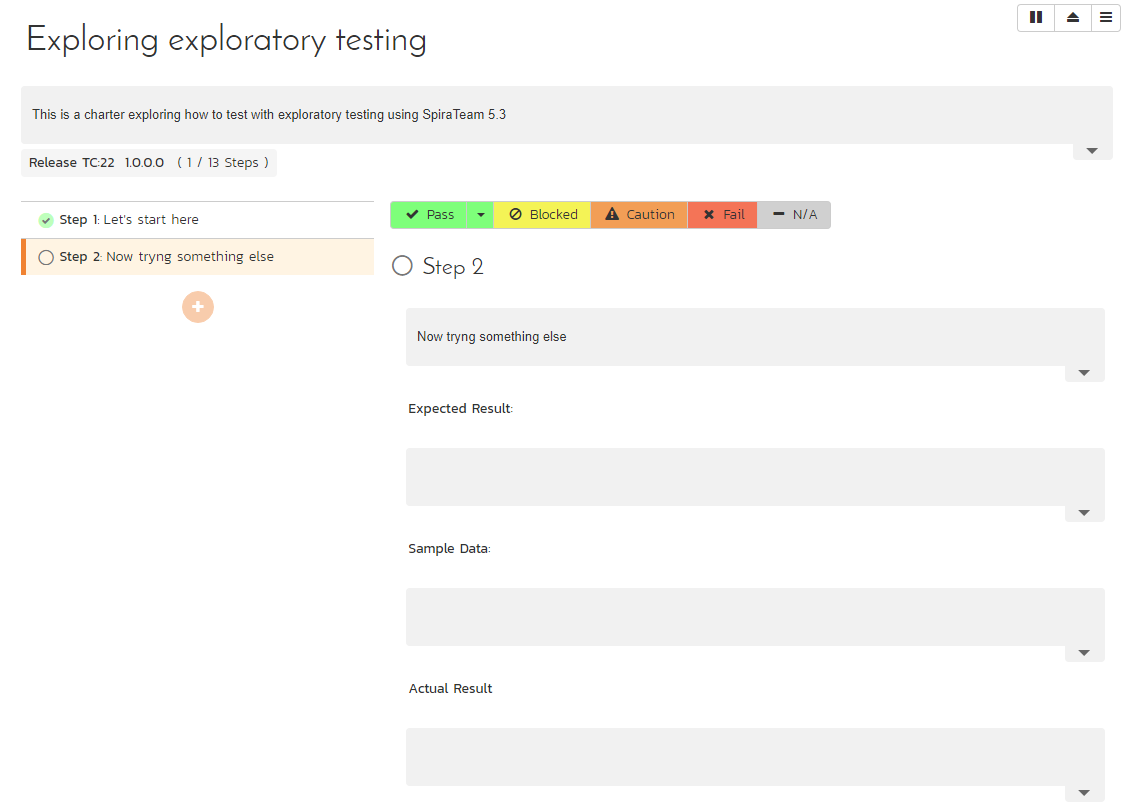

Exploratory Testing

The problem with planned testing is that it needs to be predictive. Planned functionality can, of course, be anticipated and tests created to verify it, but testing for the unexpected or testing to ensure there is no additional, unwanted functionality, is much harder to plan for. Sometimes the best technique is to simply allow the test engineer to see where his or her instinct leads.

Exploratory testing does not advocate arbitrary or ad hoc behavior on the part of the tester, but promotes less structured, experience driven testing which changes and grows as it is performed. It is also a technique to be used in addition to scripted testing, not in place of it. Consequently, the capabilities required from a test management tool for the support of exploratory testing are rather different from those of planned and scripted testing.

Look for tools that provide a means to track exactly what the tester does so that the conditions can be reproduced should a defect be discovered or in case it seems that the test should become part of the scripted test set. Capturing key strokes, screen shots and user comments provides a good way to integrate exploratory testing with scripted testing in a controlled way, especially when it comes to adding new tests to future regression testing.

If the tool is also able to provide comparative metrics of scripted versus exploratory testing, it can help you determine the effectiveness of each and where best to put additional test effort.

Take away: Explore the options that support exploratory testing and integrate that with formal testing.

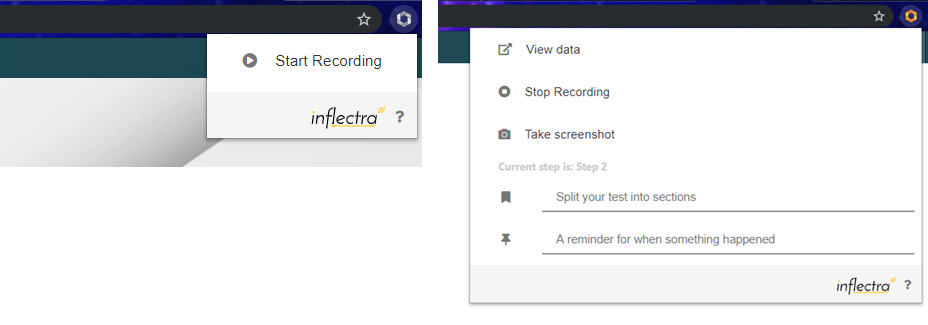

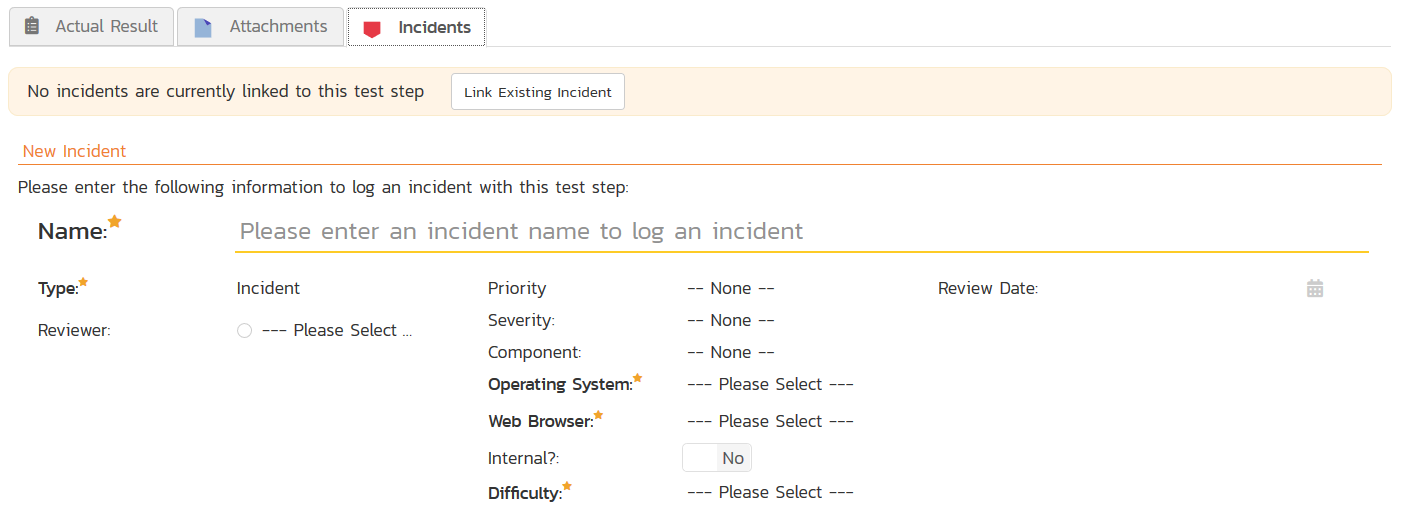

Defect Reporting

Inevitably, testing is going to result in the discovery of defects. If it doesn’t, you’re doing something wrong! How you report and track those defects relative to your tests can go a long way to helping or hurting the efficiency of your development process.

If you have a defect reporting tool that you are happy with then consider how you will use it in conjunction with your test management tool. Is there an integration between the two or will you be relying on a manual method to ensure that test information is captured along with any defects discovered? Does any integration provide synchronization or are you required to import and export data across the tool divide?

If you are not married to your current defect management system, look for a test management tool with integrated defect management. There are considerable benefits to using a tool which does both, including savings of time and effort, reduced opportunities for error, better insight into each defect for software engineers and easier closure of any issues following successful retesting. It can also be useful to link one defect to another so you might want to include linking in your tool criteria.

Although it might initially seem problematic, a hybrid solution of a test management tool with its own defect management features integrated to an external defect reporting system can provide the best of both worlds where you are mandated to use a corporate standard but need something local and immediate for your test-defect relationship. If this scenario is the best for your project, ensure the test and defect management tool can work well with your corporate standard.

When a defect is discovered, there comes a point when it will be fixed (hopefully sooner rather than later), and then it will need to be retested and the defect closed, amended or reported once again. This is a series of tasks which make up a process. While it is not a process with a complex workflow, support for your process can be useful in test management tools which also manage defects. You might think your process is fairly straightforward, and perhaps it is, but your needs can change, so look for a tool that supports a customizable workflow which will not tie you into using only the process(es) supported by the tool.
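A customizable workflow is, in essence, a table of permitted status changes that the project can edit. The following sketch assumes a hypothetical set of defect statuses and transitions; the names are chosen only to mirror the fix, retest and close cycle described above and are not taken from any particular tool.

```python
from typing import Dict, List, Set

# Hypothetical workflow: each status maps to the statuses it may move to.
# A project with a different process would simply supply a different table.
DEFAULT_WORKFLOW: Dict[str, Set[str]] = {
    "New": {"Open", "Rejected"},
    "Open": {"Fixed"},
    "Fixed": {"Retest"},
    "Retest": {"Closed", "Reopened"},
    "Reopened": {"Fixed"},
    "Rejected": set(),
    "Closed": set(),
}


class Defect:
    def __init__(self, title: str,
                 workflow: Dict[str, Set[str]] = DEFAULT_WORKFLOW) -> None:
        self.title = title
        self.workflow = workflow
        self.status = "New"
        self.history: List[str] = ["New"]

    def move_to(self, new_status: str) -> None:
        """Change status only if the workflow permits the transition."""
        allowed = self.workflow.get(self.status, set())
        if new_status not in allowed:
            raise ValueError(
                f"cannot move from {self.status!r} to {new_status!r}")
        self.status = new_status
        self.history.append(new_status)


defect = Defect("Bad password accepted")
for status in ("Open", "Fixed", "Retest", "Reopened", "Fixed", "Retest", "Closed"):
    defect.move_to(status)

print(defect.status)  # prints Closed
```

Because the workflow is data rather than code, adding a review step or removing the "Rejected" status would not require any change to the tool itself.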

Take away: If you can, look for test and defect management in a single-tool solution. If you cannot, then look for a tool with a good interface to your defect tracker. Can your tool of choice support configurable workflows?

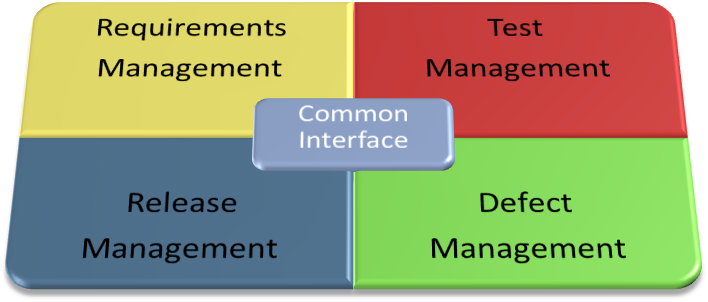

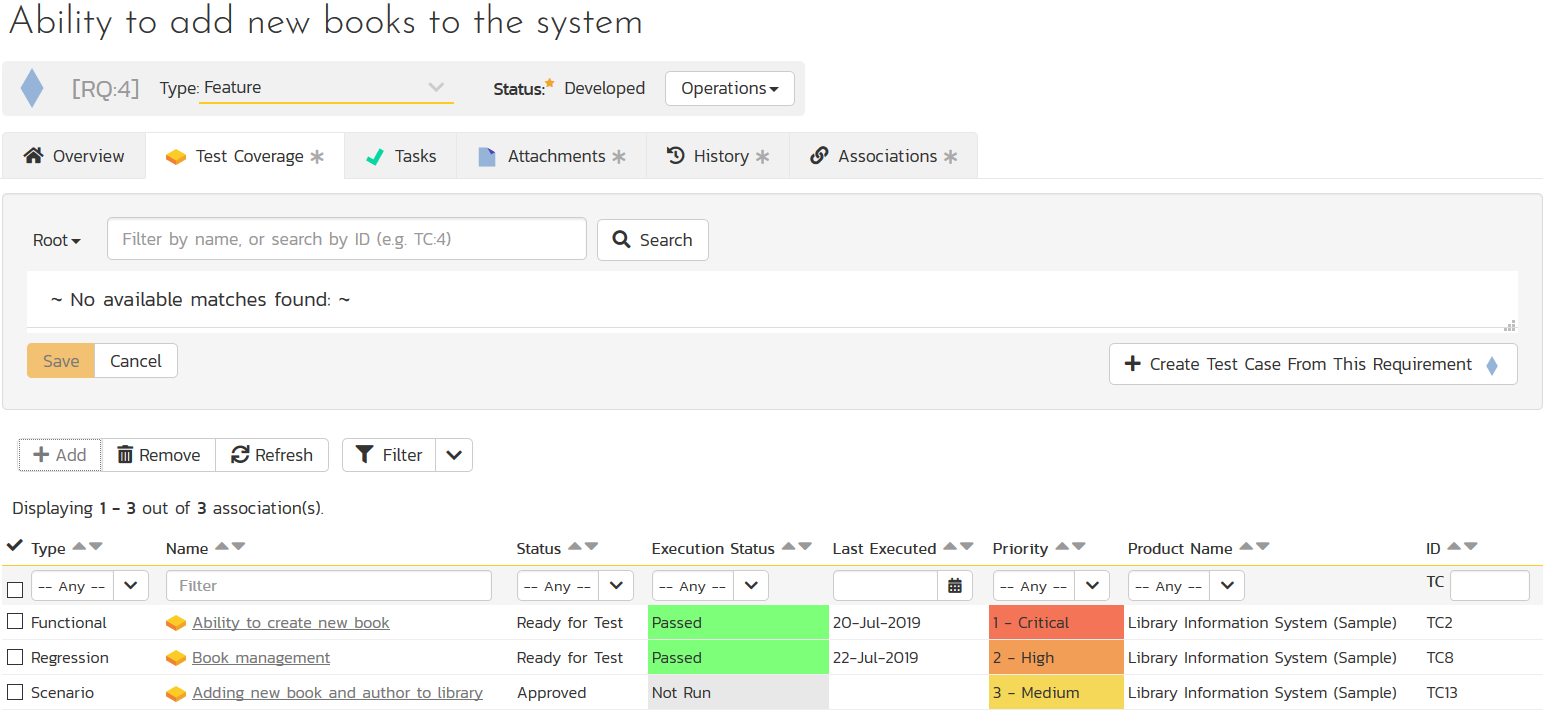

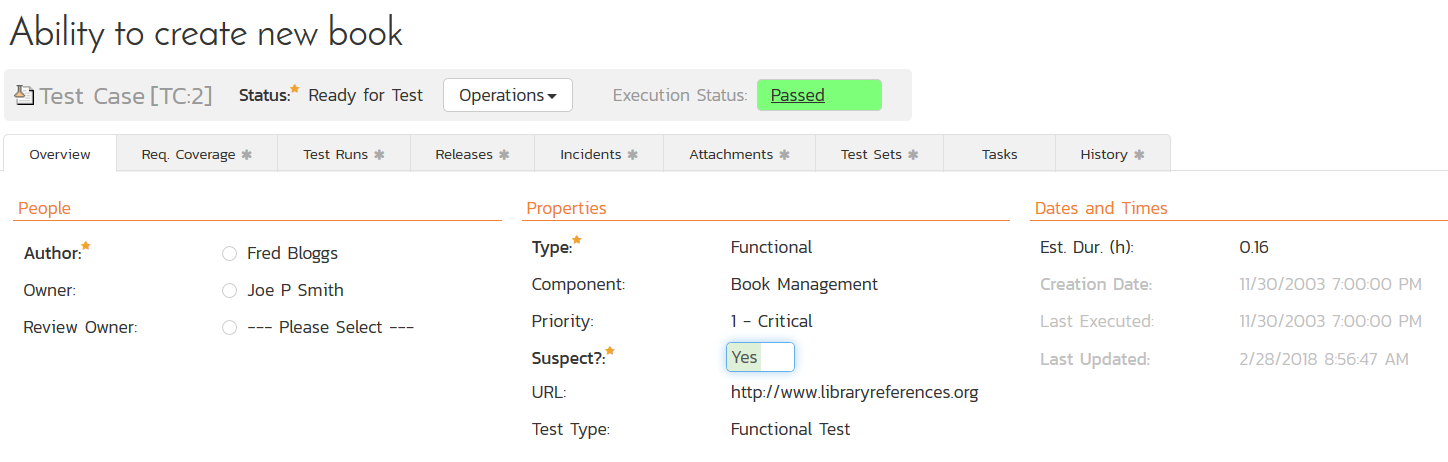

The Relationship to Requirements

A good test case is one that is a direct reflection of a piece of expected functionality. Whether the expected functionality is expressed in plain written language, as a structured use case or even as a picture, it is best defined and controlled using a formal requirements management tool. So, it is only natural that tests should be related to requirements, not just casually, but formally through the integration of requirements management tools and test management tools.

If you choose to use a single tool that manages both your requirements and your tests, you have an immediate advantage. If you don’t, you need to worry about how these tools are going to work together today, and then tomorrow should one of the tools change and become out of step with the other. It has been said that test cases are another expression of requirements, which would support the concept of using a tool that does both. But is this choice so clear cut?

One or Two Tools?

As mentioned earlier, test cases are best when related in some way to requirements. The relationship is so powerful that tool vendors have implemented a range of features to help users get more from the synergy between the two sets of data. Before looking at some of those options, let us take a minute to consider this question: ‘Is it better to use two tools, one for test management and one for requirements management, or a single tool which does both?’ If you ask the tool vendors themselves you are likely to get a rather unhelpfully biased answer which favors their own offerings. This is perhaps a little unfair, as there are valid arguments on both sides. Let’s try to consider the pros and cons objectively.

Single-function tools that provide only test management or only requirements management are, not surprisingly, very powerful in their own domains, and specialist users tend to like them. This is a good thing if you have high demands in one discipline or another, but most projects will do very well without the exhaustive implementation of point products. In fact, extra capabilities that you will never use can become a burden: they can get in the way of daily use, increase the capacity for error and sometimes confuse users, and that’s before considering that you are probably paying a premium for them in the first place. The problem is compounded where the two products are not even produced by the same software company. In that case, there could be a degree of competitive tension between the vendors, which makes the kind of reliable and useful tool integration you need less likely. Sometimes powerful tools from different vendors, while offering integrations, promote conflicting processes or use dissimilar terminology and might even have mismatched data types for the data they exchange, requiring an unsatisfactory kludge under the covers.

Figure 6: Separate Tools with Integrations

It is also worth considering that with the increasing popularity of Agile methods, specialist engineers are not quite as specialized as perhaps they once were. With team members taking on a variety of roles, tools that can do the same are finding increasing popularity.

The converse concern is that single products providing integrated test and requirements management may not offer all the bells and whistles to satisfy the particular needs of some projects. Some tools began focused on one discipline before growing to accommodate the other, and as a result they are weak in their secondary capability. Sometimes the secondary capability is merely a token gesture in order to get the ‘check in the box’ in the customary vendor-supplied tool features list. Be careful when considering tools that started as one (test or requirements) and then tacked on some features in order to claim support for the other. No matter how much they evolve, there are usually tell-tale signs of their original bias which prevent them from offering a well-rounded solution. If you prefer the single-product solution, look for a vendor that set out from the beginning to provide both capabilities in a single, integrated product. This is by far the best bet when looking for a strong relationship between your test cases and requirements, and in the end, why wouldn’t you want that?

Figure 7: A Single Tool Solution

Take away: Single discipline tools can be powerful, but leave a lot to be desired when it comes to the test-requirements relationship. Combined test-requirements tools are better if they started life that way.

Creating the Test to Requirements Relationship

Because there is a strong relationship between requirements and tests, some products offer the ability to generate test cases from the requirements themselves. The sophistication of such capabilities varies, but even when it simply provides a stub for later elaboration, this can be a great help, especially when the link between the requirement and the test is generated automatically at the same time as the test creation. But there is also a danger here. One of the powerful features of traceability, whether it be requirements traceability or test traceability, is the power to highlight the absence of links. Ideally, you are looking for 100% test coverage of the requirements and a report of the requirements with no links to tests is a valuable commodity.

(Note here the need to search for, or filter on, types of relationship or relationships to data with specific data types, e.g. a requirement may have a link to a design element, but that link should not prevent us identifying it as having no links to a test case).

If you are using the ability to auto-generate tests and the links to them, this may invalidate any report of requirements with missing links. This can be overcome by initially labeling the link as ‘incomplete’ or if you cannot label links, labeling the test similarly. However, the query becomes more complex; no longer just looking for requirements with no tests, you are looking for requirements which do have related tests, but tests that have certain attribute values; something not all tools may be capable of.
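
The query described above can be sketched in a few lines. This is a minimal illustration that assumes each link records the type of artifact it points to and a status label; the `Link` record, its field names and the ‘incomplete’ value are hypothetical and stand in for whatever your tool exposes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    source_id: str             # requirement identifier
    target_id: str             # identifier of the linked artifact
    target_type: str           # e.g. "test" or "design"
    status: str = "complete"   # auto-generated stubs start as "incomplete"


def uncovered(requirement_ids, links):
    """Return requirements that have no link to a completed test case."""
    covered = {
        link.source_id
        for link in links
        if link.target_type == "test" and link.status == "complete"
    }
    return [req for req in requirement_ids if req not in covered]


requirements = ["REQ-1", "REQ-2", "REQ-3", "REQ-4"]
links = [
    Link("REQ-1", "TC-10", "test"),
    Link("REQ-2", "DES-5", "design"),               # design link only
    Link("REQ-3", "TC-11", "test", "incomplete"),   # auto-generated stub
]

missing = uncovered(requirements, links)
```

Here `REQ-2` is still reported because its only link is to a design element, and `REQ-3` is still reported because its test is an unelaborated stub. A tool that cannot filter on both link type and attribute value would report only `REQ-4`.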

Hopefully, test authors are aware of which requirements they are aiming to verify with a test and therefore it should be their responsibility to create the relationships between the tests and those targeted requirements. It makes sense to prevent test engineers from changing the requirements, but be sure that that doesn’t also stop them from creating the links from the tests back to the requirements. Because Agile processes somewhat blur the lines between traditional roles, any conflict over requirements and tests, especially over the relationship between them, should be lessened and the concept of team ownership can bring together the requirements and the test disciplines so that they are thought of as two aspects of the same solution. Consequently, tools with combined requirements and test management functions have a considerable advantage in Agile projects over those that offer them in separate and distinct software packages.

Take away: How can relationships between tests and requirements be created and controlled?

Using Relationships to Tests

We have already discussed how reporting the absence of links provides useful information, but there are also benefits to be gained from the test-requirement relationship when it does exist. Tools that can take advantage of those links and perform sophisticated queries on data related to the tests, as opposed to queries on the tests themselves, can be a powerful ally in the struggle for improved productivity.

Links can be categorized in many ways: by purpose (decomposing versus focusing, for example) or by type (‘satisfies’ versus ‘is a child of’). It is worth asking which kinds of link a tool supports explicitly between requirements and tests.

Perhaps the most obvious indirect information that should be provided is notification of the change to a requirement. Changing requirements are the greatest enemy of stable tests so requirements change notification is a very valuable asset. As discussed previously, change notification will help testers understand when their tests might be stale, and the links to requirements are crucial to obtaining that information.

(Example screenshot of SpiraTest showing the Suspect flag that gets set if a requirement changes)
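
The mechanism behind such a flag can be sketched as follows. This is an illustrative model only, not a description of how any product implements it; the `TraceabilityIndex` class and its method names are assumptions made for the example.

```python
class TraceabilityIndex:
    """Tracks which tests are linked to which requirements."""

    def __init__(self, tests_by_requirement):
        self.tests_by_requirement = tests_by_requirement
        self.suspect_tests = set()

    def requirement_changed(self, requirement_id):
        """Flag every test linked to the changed requirement as suspect."""
        affected = self.tests_by_requirement.get(requirement_id, [])
        self.suspect_tests.update(affected)
        return sorted(affected)

    def mark_reviewed(self, test_id):
        """Clear the flag once a tester confirms the test is still valid."""
        self.suspect_tests.discard(test_id)


index = TraceabilityIndex({
    "REQ-1": ["TC-10", "TC-11"],
    "REQ-2": ["TC-11", "TC-12"],
})

notified = index.requirement_changed("REQ-2")   # tests that need a second look
index.mark_reviewed("TC-11")                    # tester confirms TC-11 is fine
```

The point of the sketch is that the flag depends entirely on the links: a test with no recorded relationship to the changed requirement is never marked, however stale it may be.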

Another example is requirements volatility: if a requirement is frequently changing, it may be prudent to pay particular attention to the tests related to that requirement, as the code that implements it is likely to be unstable too. Similarly, a small number of tests that are related to a large number of requirements should raise questions. Do you have sufficient test coverage for an area that appears to be somewhat complicated? If you are on an Agile project, are you performing these critical tests sufficiently frequently during each iteration?

Similar information can be gained from the relationship between tests and defects. We have already discussed the relationship between testing tools and defect management systems, but it is worth mentioning that once again the number of links is important. In this case it is a direct reflection of the quality of the software system in a specific area: the more defects linked to the tests for that area, the more attention it needs.
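
Both measures come down to counting links. The sketch below assumes you can export a change log of requirement identifiers and a list of test-to-defect links; the data shapes, the `hotspots` function and the threshold of three changes are all hypothetical.

```python
from collections import Counter


def hotspots(change_log, tests_by_requirement, defect_links, min_changes=3):
    """Report volatile requirements with the defect count of their linked tests."""
    change_counts = Counter(change_log)
    defects_per_test = Counter(test_id for test_id, _ in defect_links)
    report = {}
    for req_id, changes in change_counts.items():
        if changes >= min_changes:
            tests = tests_by_requirement.get(req_id, [])
            report[req_id] = {
                "changes": changes,
                "defects": sum(defects_per_test[t] for t in tests),
            }
    return report


# One entry per recorded change to a requirement.
change_log = ["REQ-1", "REQ-3", "REQ-3", "REQ-3", "REQ-2", "REQ-3"]
tests_by_requirement = {"REQ-1": ["TC-10"], "REQ-2": ["TC-11"], "REQ-3": ["TC-12"]}
# (test id, defect id) pairs.
defect_links = [("TC-10", "DEF-1"), ("TC-12", "DEF-2"), ("TC-12", "DEF-3")]

report = hotspots(change_log, tests_by_requirement, defect_links)
```

With this sample data only `REQ-3` is reported, with four changes and two linked defects, which marks it as an area deserving closer and more frequent testing.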

In summary, do not underestimate the power of secondary information related to tests over and above the test data itself and look for a test management tool that has the power to query that secondary information and derive useful insight from it.

Take away: Notification of requirements change is powerful. Volatility and quality measures can be derived from tools with link analysis.

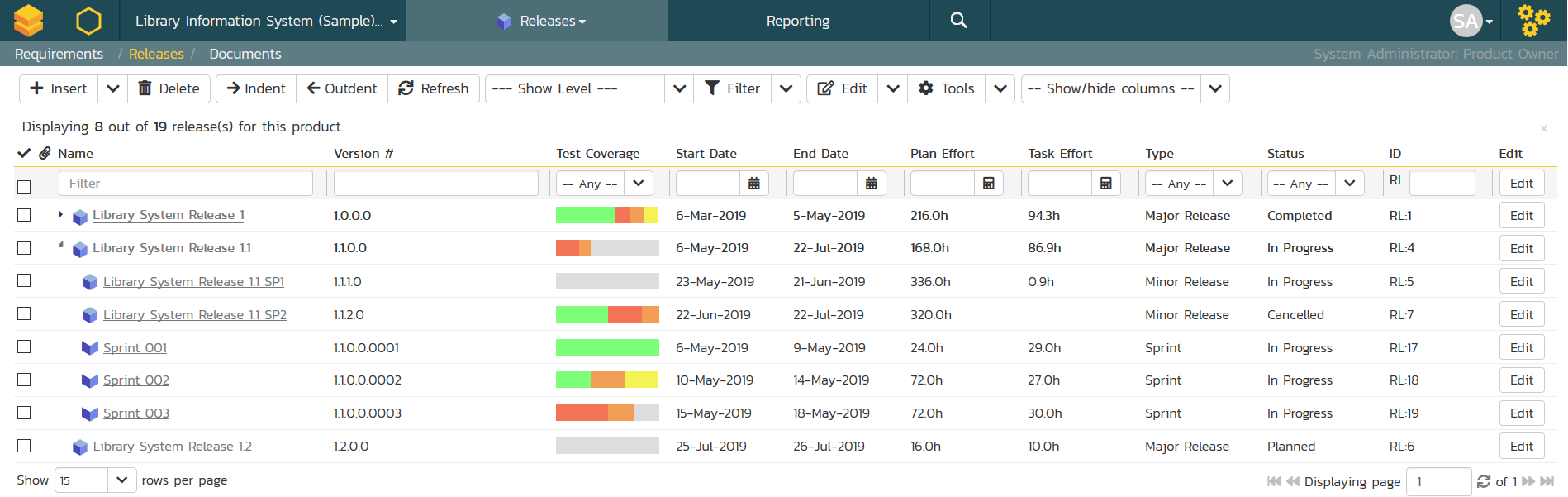

Release Management

Every release must be tested; that’s obvious. All regression tests should be run, along with testing for new or amended functionality. In practice there are methods to reduce the required testing for each release but regardless of the amount of testing you do, it is important to manage the release information in concert with the testing being done.

When the same test is performed against two releases, one with a pass result and one with a fail result, it is critical that you know which release each result applies to and which release you are working to fix when following your defect resolution process. Without this capability, a test management tool will leave you with considerable manual work in mapping test runs to releases. If your test tool can map requirements as well as tests to releases, then it will give you a full picture of what is included in that release and whether there have been any incidents raised against it.
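
In data terms, this means every test run carries the release it was executed against. The following minimal sketch uses a hypothetical `RunRecord` structure and made-up release numbers to show the two questions such a record lets you answer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    test_id: str
    release: str
    result: str   # "pass" or "fail"


def results_by_release(records, test_id):
    """Map each release to the result of the given test (last run wins)."""
    return {r.release: r.result for r in records if r.test_id == test_id}


def failing_in(records, release):
    """List the tests with a failed run recorded against one release."""
    return sorted(
        r.test_id for r in records if r.release == release and r.result == "fail"
    )


runs = [
    RunRecord("TC-10", "1.0", "pass"),
    RunRecord("TC-10", "1.1", "fail"),
    RunRecord("TC-11", "1.1", "pass"),
]

history = results_by_release(runs, "TC-10")
to_fix = failing_in(runs, "1.1")
```

Without the `release` field, the pass and the fail for `TC-10` would be indistinguishable, and someone would have to reconstruct the mapping by hand.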

Relating requirements, tests and defects to releases is particularly important when doing an iterative type of Agile development. There are going to be frequent releases (not all of them necessarily public releases) and frequent testing. Some of the non-public releases may be considered steps towards the public release, so arranging releases hierarchically makes a great deal of sense. Managing all of this effectively without some form of tool support will give even the best project managers some sleepless nights.
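
A release hierarchy is naturally a tree, with figures rolled up from iterations to the public release they lead to. The sketch below is one possible model under that assumption; the `Release` class, its fields and the sample names are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class Release:
    name: str
    failed_tests: int = 0
    open_defects: int = 0
    children: list["Release"] = field(default_factory=list)

    def totals(self):
        """Return (failed_tests, open_defects) for this release and all below it."""
        failed, defects = self.failed_tests, self.open_defects
        for child in self.children:
            child_failed, child_defects = child.totals()
            failed += child_failed
            defects += child_defects
        return failed, defects


# A public release built up from two internal iterations.
public = Release("2.0", children=[
    Release("2.0 Iteration 1", failed_tests=1, open_defects=2),
    Release("2.0 Iteration 2", failed_tests=0, open_defects=1),
])

failed, defects = public.totals()
```

The roll-up gives the project manager a single view of the public release while each iteration keeps its own test and defect history.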

Take away: Links to release information complete the picture of tests, requirements, defects (or incidents) and releases.

How Do the Tool Vendors Do Test Management?

It would be safe to assume that the vendors of test management tools actually test those tools, which leaves one wondering what they use for test management. Not only should you ask that question, but you should also ask how they use their product. Do they use the tool fully, or do they supplement it with other software? If the answer includes anything similar to, “Well, we have rather different needs than most,” then perhaps their tool is not for you. A lengthy explanation of how they do things, or of how they are using their tool in an unorthodox way, is simply not encouraging. Every organization likes to think its operation is somehow unique, but in truth the differences are marginal, and a vendor's description of how it uses its own tools should be straightforward and easy to understand.

As with all software vendors, look for one with whom you feel comfortable. If something goes wrong, or you simply need help understanding something, are they going to be there on the other end of the phone or are you going to enter the support labyrinth of a vast multinational, from which you may not escape? There are advantages to working with very large vendors, but customer support is not always one of them.

Summary

Before choosing any new product, be sure you know what you need. If you haven’t defined your requirements, you are not ready to move forward to the next phase! Do you want to consider individual point solutions for each project development discipline, which may please your subject matter experts, or should you look for a unified approach that is more likely to get everyone working on the same page? Don’t spend too long evaluating all the common capabilities; look instead at the differences which help a product stand out from the rest. And don’t just listen to the vendors; ask for a list of similar users and talk to them. Finally, ask for an evaluation. The evaluation process alone, separate from the tool usage experience, will tell you a lot. Don’t buy a car without a test drive!

1) SpiraTest is a trademark of Inflectra Corporation

2) HP QuickTest Professional, HP Service Test, and HP Quality Center are trademarks of Hewlett-Packard Development Company, L.P.

All other trademarks are the property of their respective owners.