Automated Testing with Rapise

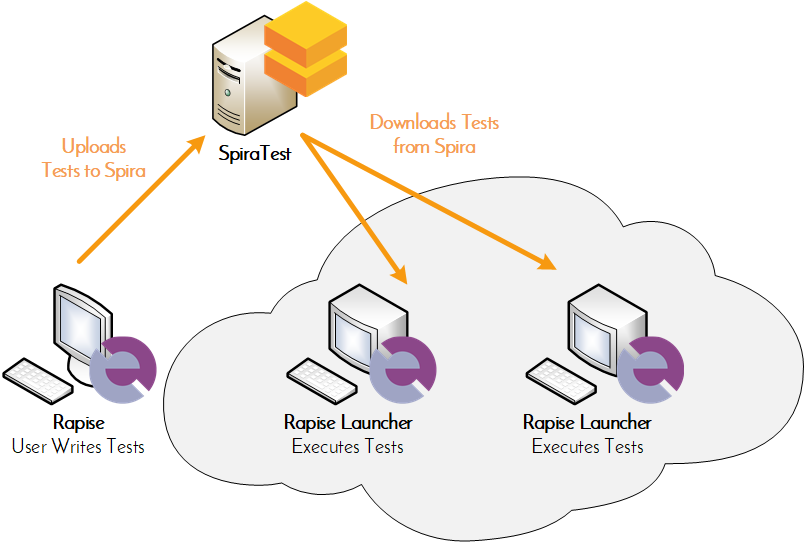

Rapise is our powerful test automation tool that is designed to work seamlessly with SpiraTest. Rapise can test web, mobile, and desktop applications as well as APIs. You can store, manage and version your Rapise test cases within SpiraTest and use RapiseLauncher to execute the tests on a globally distributed test lab.

Use Other Automation Tools with RemoteLaunch

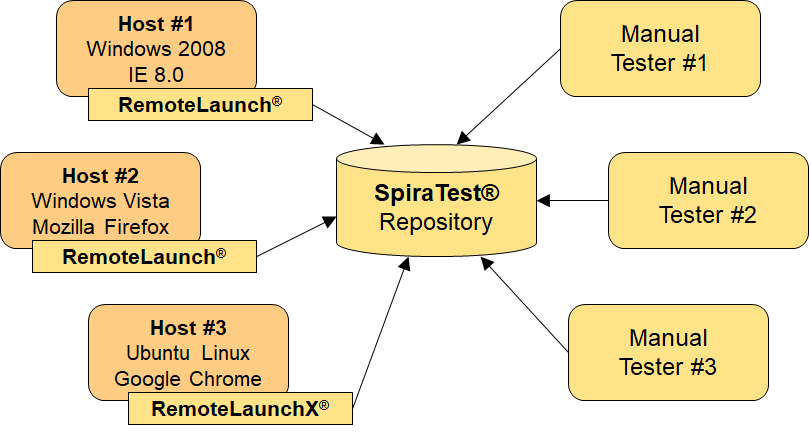

Automated test scripts are a valuable way to perform regression testing on applications to ensure that new features or bug fixes don’t break existing functionality. You can use RemoteLaunch with SpiraTest to manage the automated testing process using different tools from different vendors, all reporting back into your central SpiraTest instance:

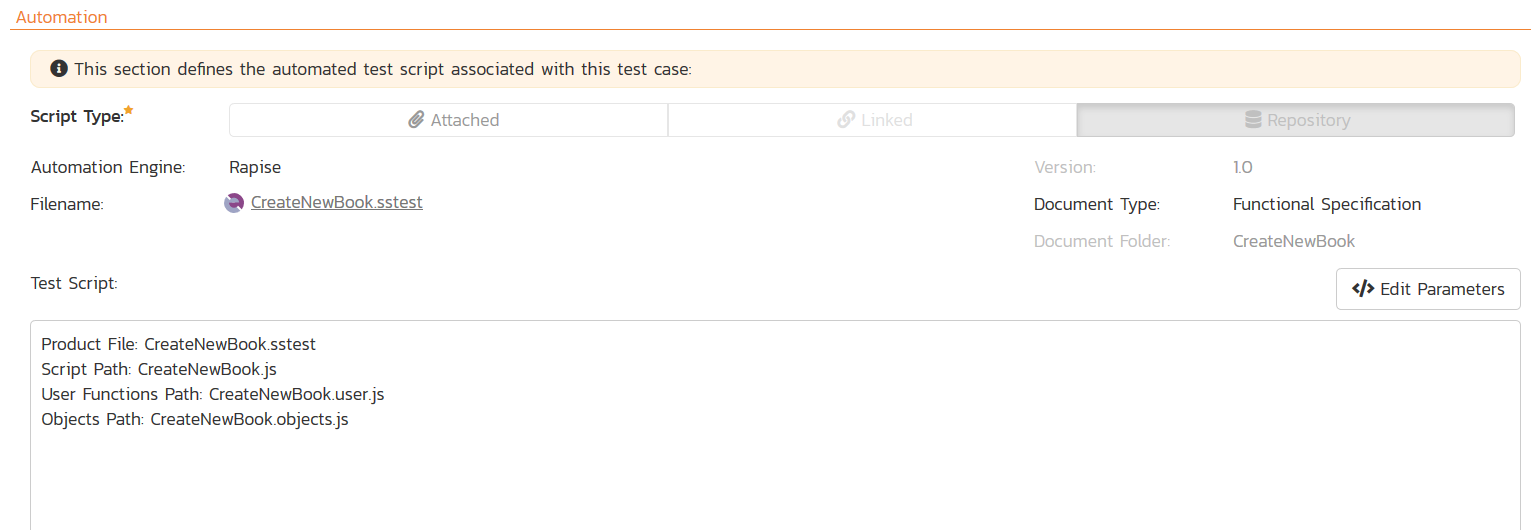

Storing & Versioning Test Scripts

You can store and version your automated test scripts inside SpiraTest. SpiraTest supports a wide variety of test automation engines (both commercial and open-source) including Rapise, UFT, Ranorex, TestComplete and Selenium.

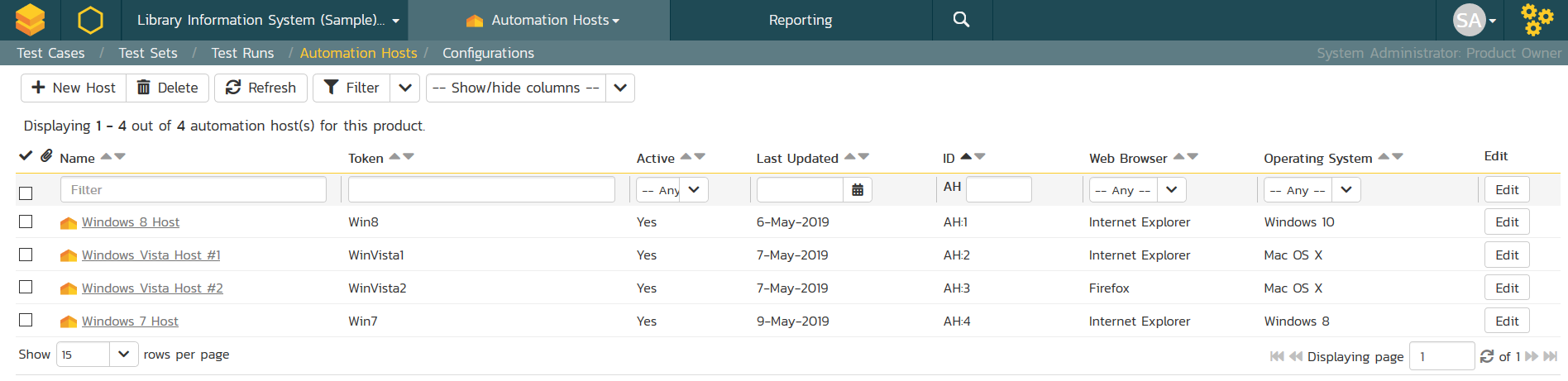

Managing Automation Hosts

The automated test scripts managed in SpiraTest can either be executed on the local machine or scheduled for execution on a series of remote hosts. Using either Rapise or RemoteLaunch, you can manage an entire global test lab from a central SpiraTest server, with test sets being executed using a variety of different automation technologies 24/7.

Test Scheduling & Monitoring

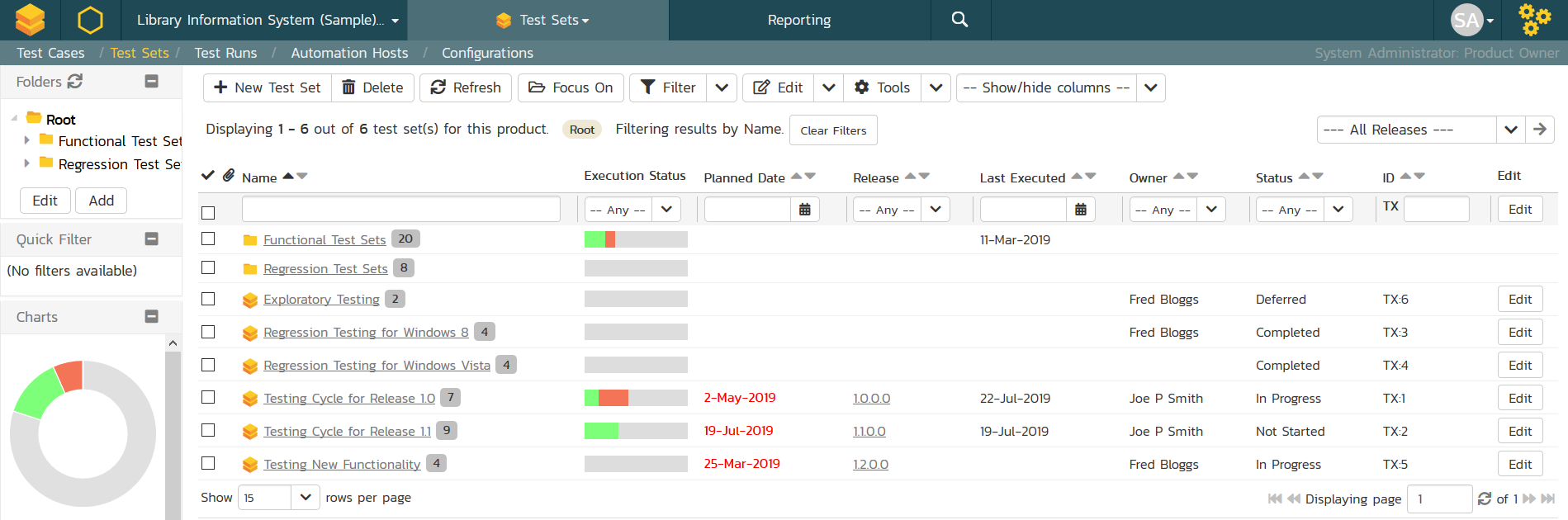

You can organize the test cases into test sets, which are assigned to specific automation hosts for execution. You can either assign unique host names to each computer or use the same host name, in which case SpiraTest will simply use the first available machine.
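The shared host name option can be pictured as a small pool lookup. The following is only an illustrative sketch of the idea, not SpiraTest's actual scheduling logic; the `Host` class, its fields, and `pick_host` are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Host:
    name: str          # host name registered for the machine (hypothetical field)
    machine: str       # identifier of the physical machine (hypothetical field)
    busy: bool = False


def pick_host(hosts: List[Host], host_name: str) -> Optional[Host]:
    """Return the first idle machine registered under host_name, or None."""
    for host in hosts:
        if host.name == host_name and not host.busy:
            host.busy = True  # mark the machine as taken for this test set
            return host
    return None


# Two machines share the name "lab-pool"; one machine has a unique name.
lab = [
    Host("lab-pool", "machine-a", busy=True),
    Host("lab-pool", "machine-b"),
    Host("win-01", "machine-c"),
]

chosen = pick_host(lab, "lab-pool")
print(chosen.machine if chosen else "no machine available")
```

With unique host names, each lookup can match at most one machine; with a shared name, whichever matching machine is idle first picks up the test set.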

With SpiraTest managing your automated testing you can see at a glance the execution status of each test set in one consolidated view:

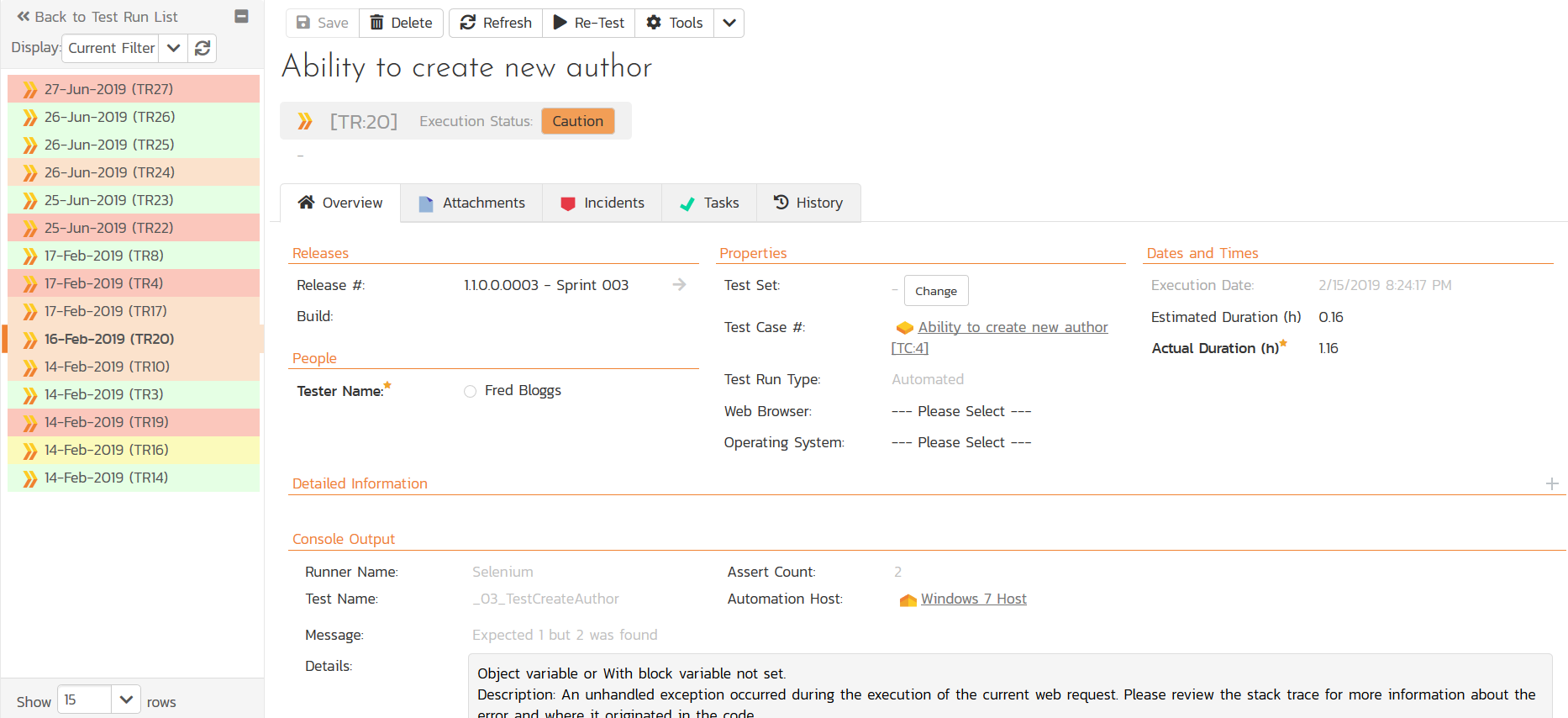

When automated tests fail, you can drill down to the individual test run to get the complete record of what passed and what failed, including any screenshots or log files captured:

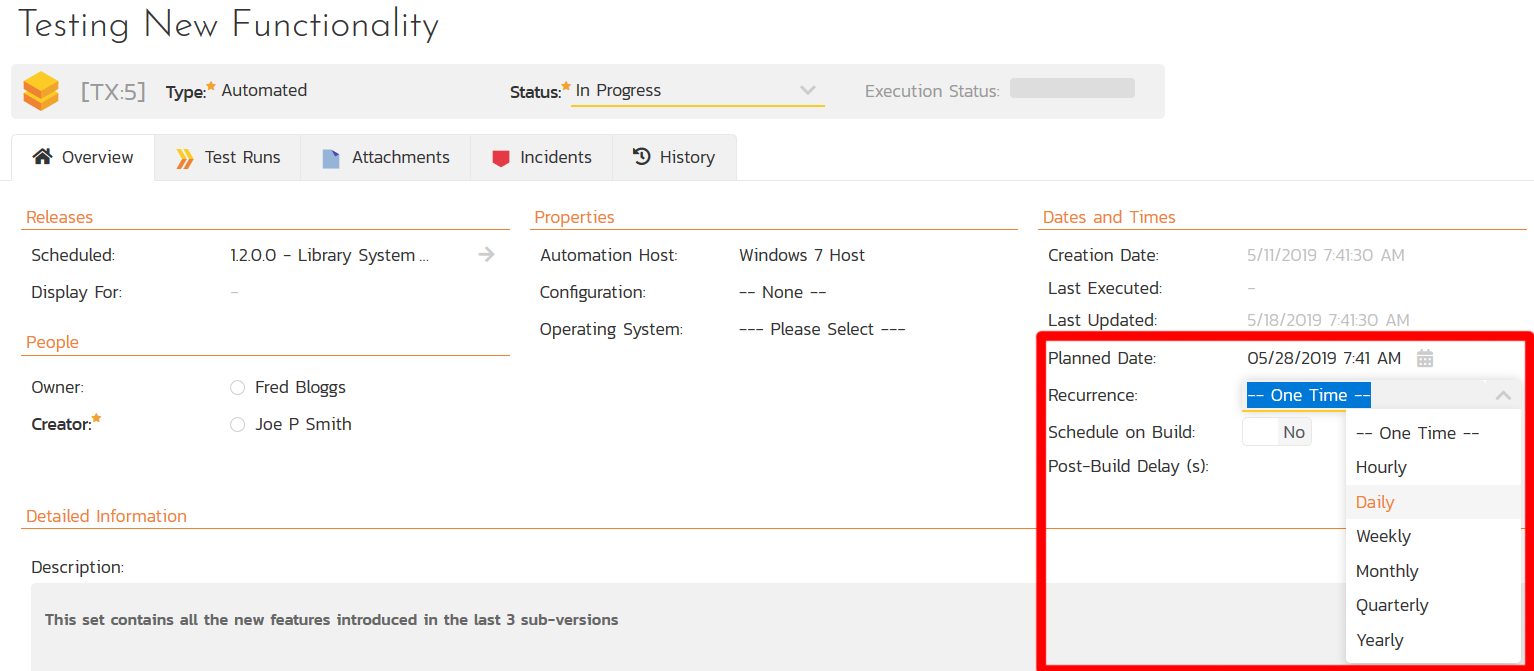

You can schedule the tests to run just once, or set up a recurrence schedule so that the same tests run every hour, day, etc.

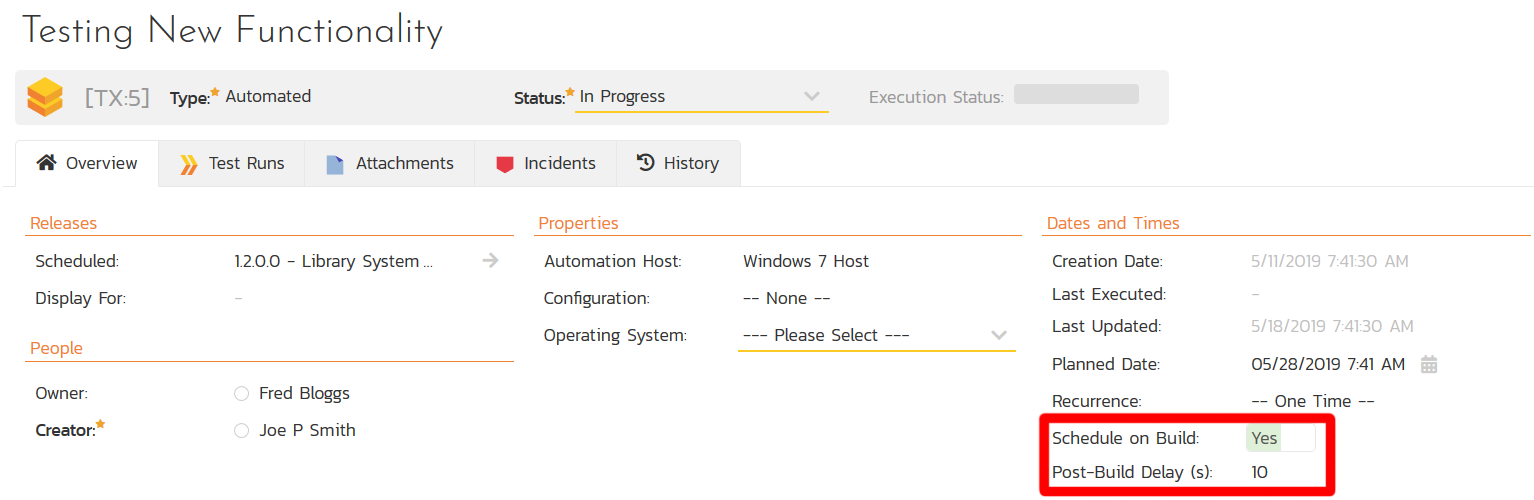

Furthermore, if you use a Continuous Integration pipeline such as Jenkins together with the SpiraTest plugin, you can have the test set ‘auto-scheduled’ to run every time the pipeline completes with a successful build.

Data-Driven Testing with Test Configurations

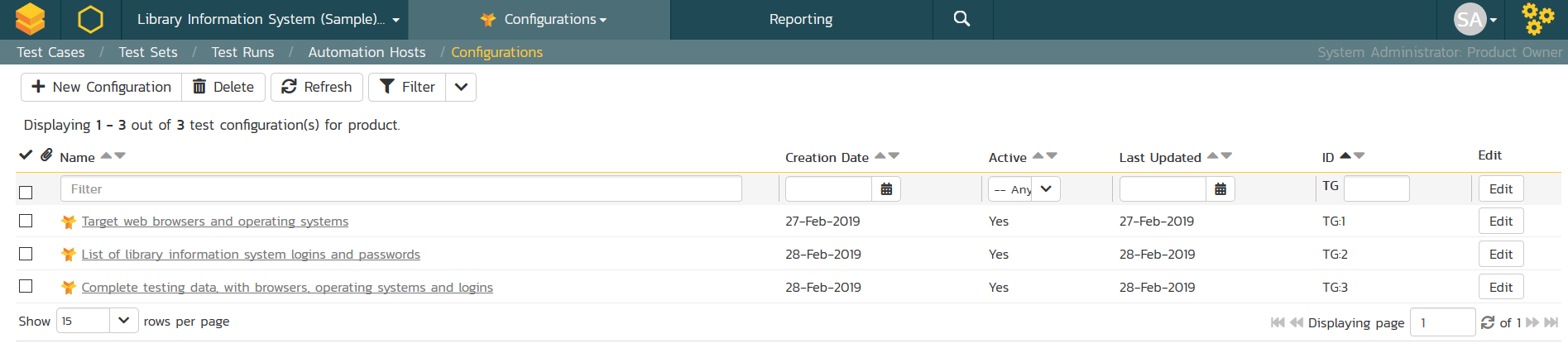

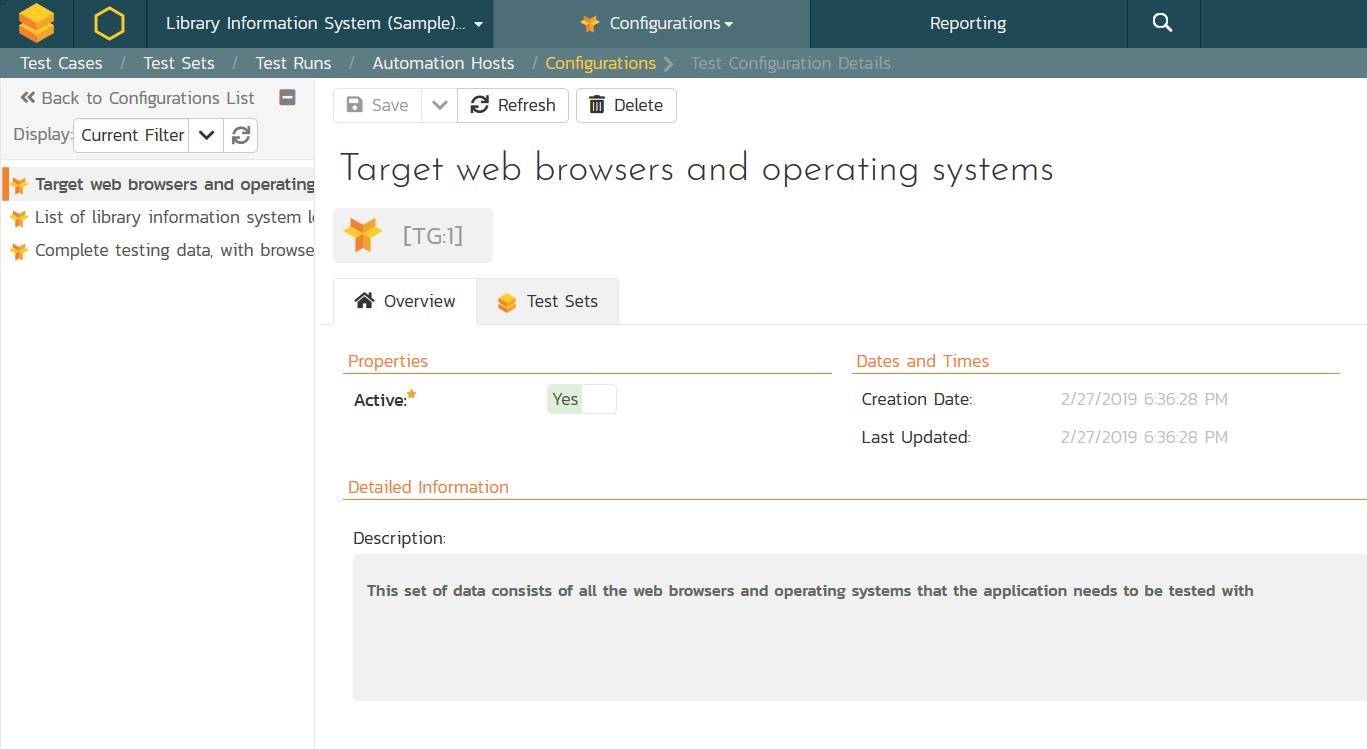

The Test Configurations module in SpiraTest lets you create 'test configuration sets', which store the different combinations of test data to be used during testing.

You can create as many different test configuration sets as you need, and each of them defines the actual test data values that will be used for a specific testing activity:

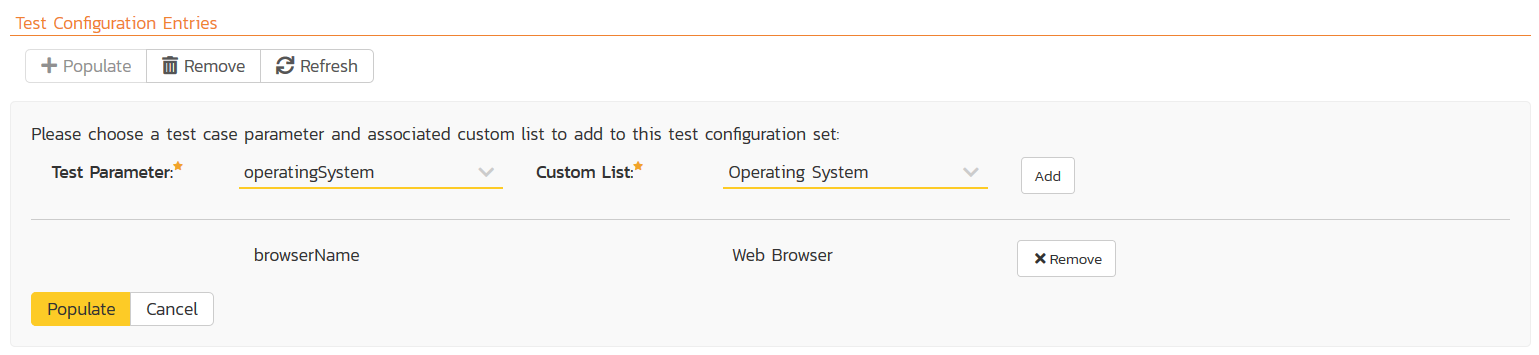

Once you have chosen one or more test case parameters and custom lists, you can tell the system to use them to populate the test data grid:

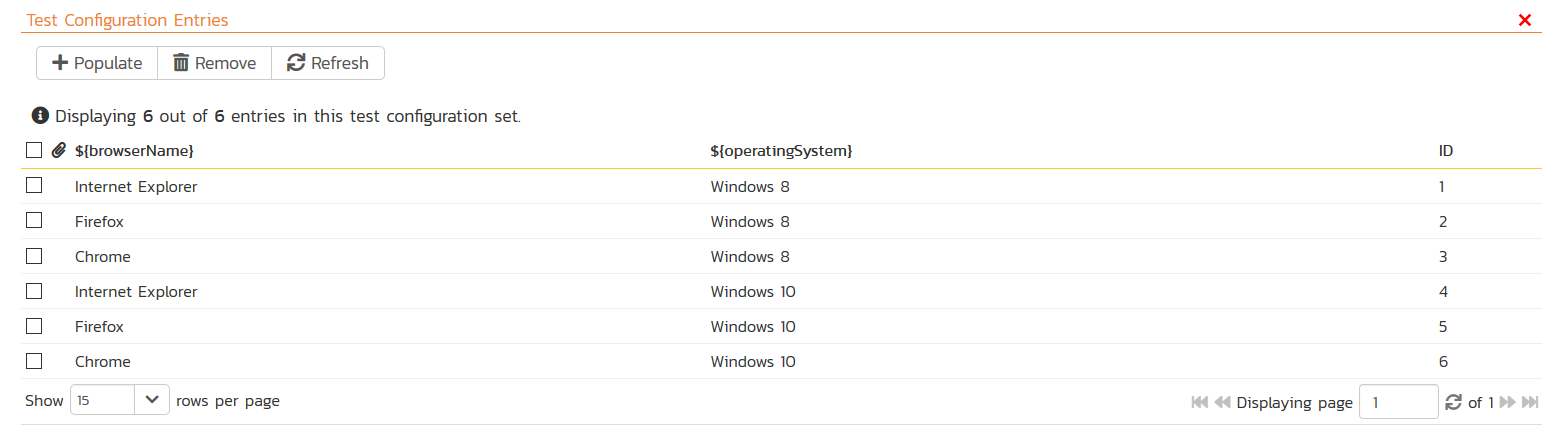

The system will then generate all the possible combinations of these values.

Then you can remove any of the items that don't make logical sense (e.g. Microsoft Edge and Mac OS or Safari and Windows). You now have a defined set of valid test configuration entries that make up the set.

You can now assign any of your test sets to use this data grid and SpiraTest will iterate over all the valid combinations and run your test set multiple times, once for each combination. This lets you perform large-scale automated testing with a central set of test data.
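The generate, prune, and iterate workflow described above can be sketched in a few lines. This is a minimal illustration of the concept, not SpiraTest's implementation; the parameter values, the `build_configurations` helper, and the `run_test_set` stand-in are all hypothetical.

```python
from itertools import product

# Hypothetical custom lists; the values are illustrative only.
browsers = ["Microsoft Edge", "Safari", "Chrome"]
operating_systems = ["Windows", "Mac OS"]

# Combinations that make no logical sense, taken from the examples in the text.
invalid = {("Microsoft Edge", "Mac OS"), ("Safari", "Windows")}


def build_configurations(browsers, operating_systems, invalid):
    """Generate every browser/OS pair, then drop the invalid ones."""
    all_combinations = product(browsers, operating_systems)
    return [combo for combo in all_combinations if combo not in invalid]


def run_test_set(browser, operating_system):
    """Stand-in for executing a test set once with one configuration entry."""
    return f"ran test set on {browser} / {operating_system}"


configurations = build_configurations(browsers, operating_systems, invalid)

# Run the test set once for each remaining valid combination.
results = [run_test_set(browser, os_name) for browser, os_name in configurations]
for line in results:
    print(line)
```

Here three browsers and two operating systems give six raw combinations; removing the two invalid pairs leaves four entries, so the test set runs four times.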

Try SpiraTest free for 30 days, no credit cards, no contracts

If you have any questions, please email or call us at +1 (202) 558-6885.