Test Management Integration

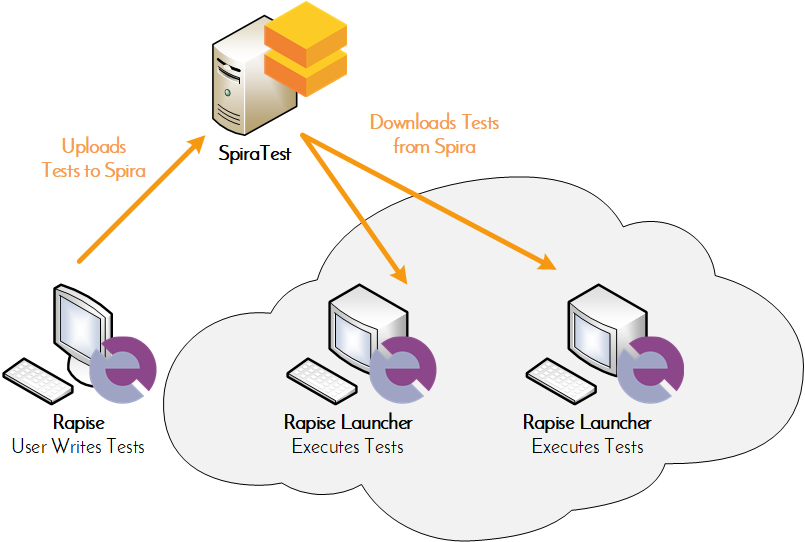

SpiraTest provides an integrated, holistic test management solution that manages your requirements, tests and incidents. When you use Rapise with SpiraTest, you can centrally manage your automated tests and remotely schedule and launch them in a globally distributed test lab.

Overview

SpiraTest is a web-based quality assurance and test management system with integrated release scheduling and defect tracking. SpiraTest includes the ability to execute manual tests, record the results and log any associated defects.

When you use SpiraTest with Rapise, you can store your Rapise automated tests in the central SpiraTest repository, with full version control and test scheduling capabilities.

You can record and create your test cases in Rapise, upload them to SpiraTest, and then schedule them to run on multiple remote computers, either immediately or according to a predefined schedule. The results are reported back to SpiraTest, where they are archived as part of the project. The test results can also be used to update requirements' test coverage and other key metrics in real time.

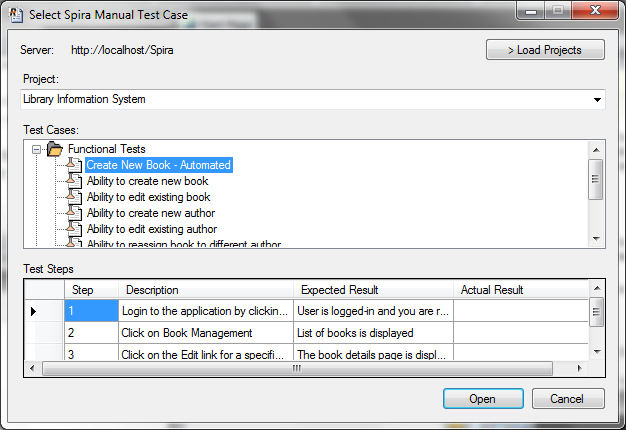

Creating Automation Scripts from Test Cases

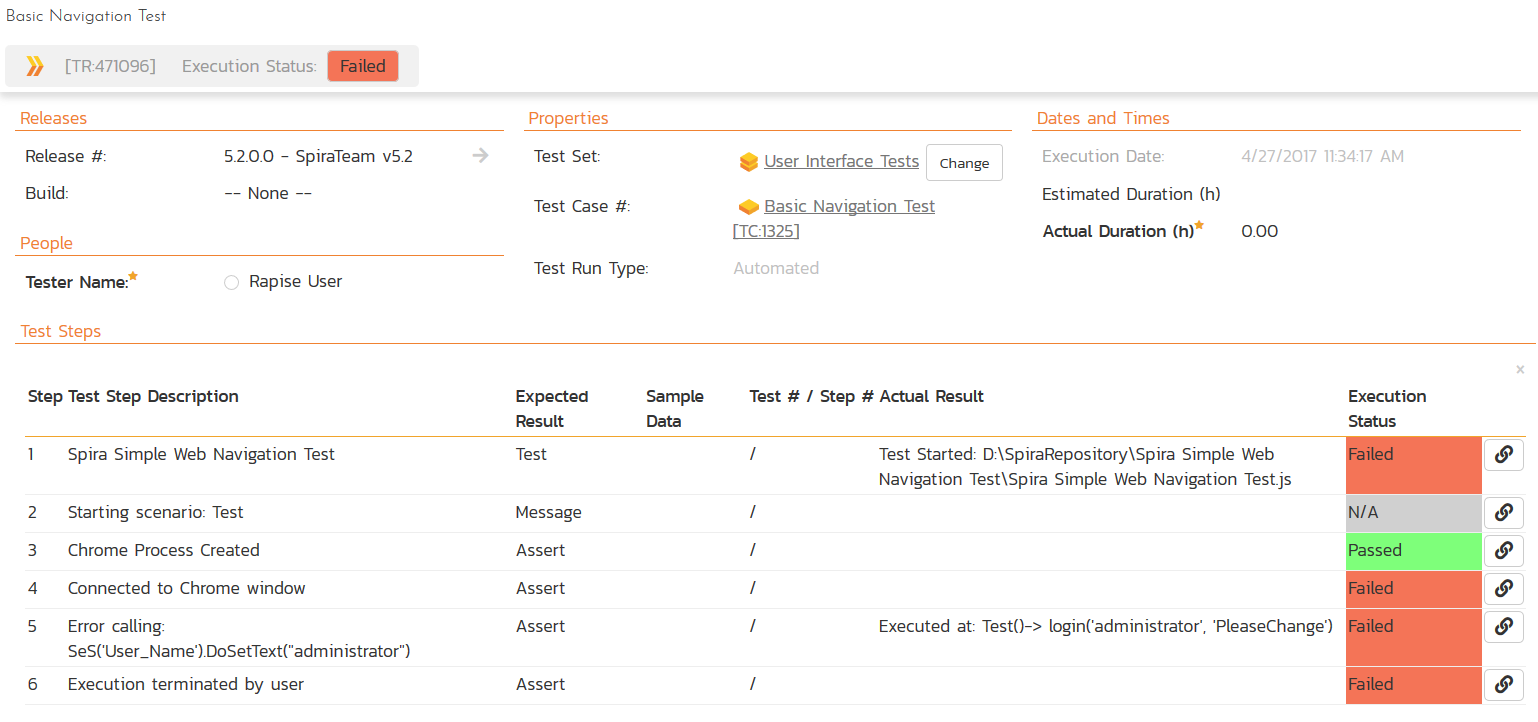

Rapise can create new automated tests directly from your existing SpiraTest manual test cases. This lets you ensure that your automation script covers all the necessary functionality and fully tests the requirement(s) it covers. When you execute these automated tests, Rapise reports the results back at the test step level, giving your developers and testers highly specific feedback.

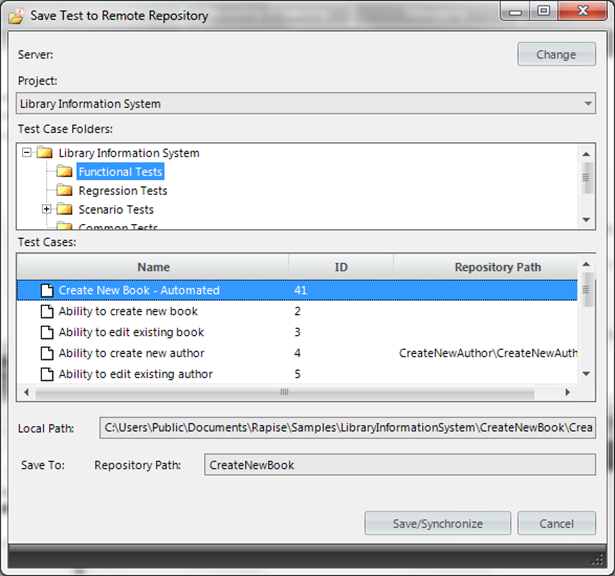

Storing Rapise Tests in SpiraTest

Once you’ve connected your Rapise installation to SpiraTest, you can store versioned copies of your automation framework, linked directly to the corresponding test cases in SpiraTest. You manage this through the Spira Dashboard embedded in Rapise. The best part? It automatically synchronizes Rapise Test Cases and Test Sets with their corresponding artifacts in Spira, keeping everything organized without any extra effort.

This allows you to centrally manage your Rapise automation scripts, making it easy for multiple testers and developers to collaborate. Every time you save a test, Rapise checks the versions stored in the central repository and automatically determines which files need to be uploaded or downloaded. This ensures your workstation stays perfectly in sync without any manual hassle.
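As an illustration only, the per-file decision described above (upload, download, or leave alone) can be sketched in Python. This is not Rapise's actual synchronization logic. The `LocalFile` record, the integer version numbers, the `plan_sync` function, and the choice to flag files changed on both sides as conflicts are all hypothetical assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict


class SyncAction(Enum):
    NONE = "none"
    UPLOAD = "upload"
    DOWNLOAD = "download"
    CONFLICT = "conflict"  # hypothetical: changed both locally and on the server


@dataclass
class LocalFile:
    """Hypothetical local record for one file in a test."""
    base_version: int  # server version this local copy was last synced from
    modified: bool     # edited locally since that sync


def plan_sync(local: Dict[str, LocalFile], remote: Dict[str, int]) -> Dict[str, SyncAction]:
    """Compare local files with the server's version numbers and pick an action per file."""
    plan: Dict[str, SyncAction] = {}
    for name in sorted(set(local) | set(remote)):
        loc = local.get(name)
        rem = remote.get(name)
        if loc is None:
            # Exists only on the server: fetch it.
            plan[name] = SyncAction.DOWNLOAD
        elif rem is None:
            # Exists only locally: treated here as a new file to upload
            # (a real tool would also need to handle server-side deletions).
            plan[name] = SyncAction.UPLOAD
        else:
            server_changed = rem > loc.base_version
            if server_changed and loc.modified:
                plan[name] = SyncAction.CONFLICT
            elif server_changed:
                plan[name] = SyncAction.DOWNLOAD
            elif loc.modified:
                plan[name] = SyncAction.UPLOAD
            else:
                plan[name] = SyncAction.NONE
    return plan
```

In this sketch, a file is downloaded only when the server holds a newer version than the one the local copy was based on, and uploaded only when it was edited locally against an up-to-date base.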

Executing Rapise Tests from SpiraTest

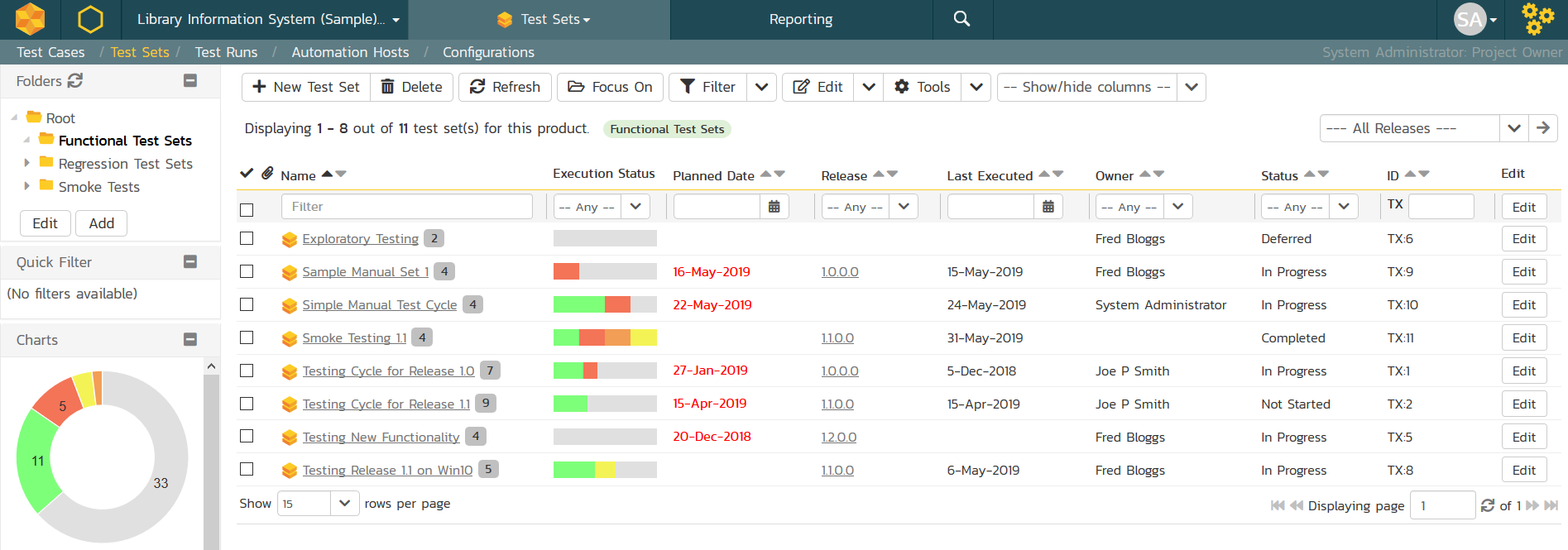

Once your Rapise tests are stored in SpiraTest, you can take advantage of SpiraTest's test set scheduling capabilities.

Using the Automation Hosts feature of SpiraTest, you can create test sets that run different combinations of Rapise tests on several computers at the same time. By parameterizing the tests and passing values from SpiraTest to Rapise, you can execute the same tests on multiple platforms and/or browsers with different combinations of test data.

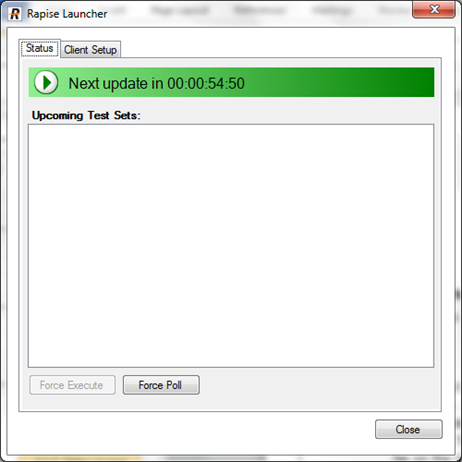

Remote execution of Rapise tests is performed by RapiseLauncher, a free add-on that is installed along with the main Rapise application.

When run, RapiseLauncher starts minimized in the system tray and begins polling the SpiraTest server for automated test sets that are scheduled to run. Polling occurs every ‘x’ minutes (5 by default). When it is time to launch a test, RapiseLauncher starts Rapise to execute it. Rapise then performs the test activities and reports the results back to SpiraTest.

When the test finishes, RapiseLauncher resumes polling for tests that need to be executed. Typically (unless there is a bug in the test or in the application being tested), no user input is needed.
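The polling cycle described above can be sketched as a simple loop. This is a minimal Python sketch, not RapiseLauncher's implementation: `ScheduledTestSet`, `poll_and_execute`, and the two callback hooks (`fetch_due_test_sets` for asking the server which test sets are due, `launch_rapise` for starting Rapise on one of them) are hypothetical names. The only detail taken from the description is the 5-minute default interval.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Default polling interval described for RapiseLauncher.
POLL_INTERVAL_MINUTES = 5


@dataclass
class ScheduledTestSet:
    """Hypothetical stand-in for an automated test set scheduled in SpiraTest."""
    test_set_id: int
    name: str


def poll_and_execute(
    fetch_due_test_sets: Callable[[], List[ScheduledTestSet]],
    launch_rapise: Callable[[ScheduledTestSet], str],
    max_cycles: int,
    interval_minutes: float = POLL_INTERVAL_MINUTES,
    sleep: Callable[[float], None] = time.sleep,
) -> List[Tuple[ScheduledTestSet, str]]:
    """Poll for due test sets, launch each one, then wait and poll again.

    Both callbacks are hypothetical hooks. `launch_rapise` stands for starting
    Rapise, which runs the tests, reports to SpiraTest, and returns a status.
    `max_cycles` bounds the loop so the sketch terminates; a real launcher
    keeps polling until it is closed.
    """
    launched: List[Tuple[ScheduledTestSet, str]] = []
    for cycle in range(max_cycles):
        # Ask the server which test sets are due and run each in turn.
        for test_set in fetch_due_test_sets():
            status = launch_rapise(test_set)
            launched.append((test_set, status))
        # Wait one interval before the next poll (skipped after the last cycle).
        if cycle < max_cycles - 1:
            sleep(interval_minutes * 60)
    return launched
```

Injecting `sleep` and bounding the loop with `max_cycles` keeps the sketch testable: a caller can pass a no-op sleep and a scripted sequence of due test sets to check what would be launched without waiting for real minutes to pass.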

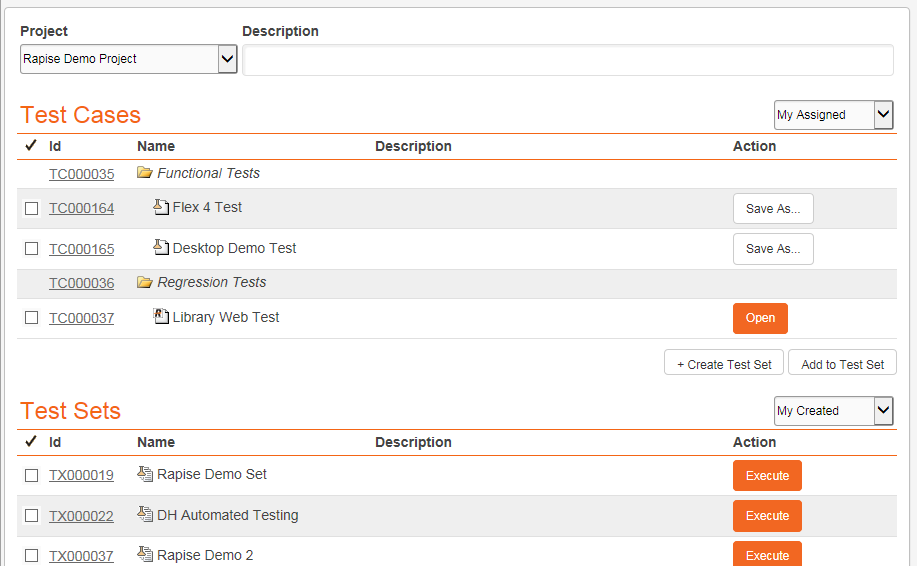

Test Management Dashboard in Rapise

To make working with SpiraTest and Rapise even more seamless, a Spira Dashboard is built right into Rapise.

With the Spira Dashboard, you can create and schedule test sets and view execution results without leaving Rapise. It also lets you synchronize local changes with SpiraTest in a few mouse clicks.

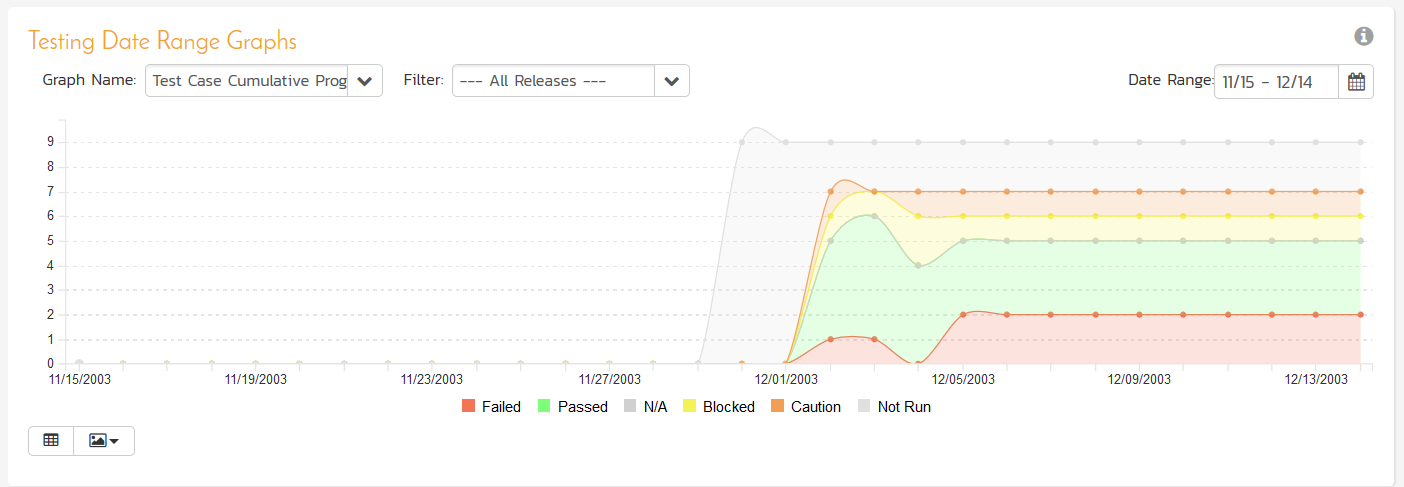

Enterprise Reporting with SpiraTest

When you use Rapise with SpiraTest, you get more than the individual test case reports that Rapise saves into SpiraTest: you can also view graphs and charts that show the overall performance and progress of your testing.

You can view the results of individual test executions, track testing metrics per release and per iteration/sprint, and analyze results across different platforms and technology combinations to reveal trends and patterns of failure.

Try Rapise free for 30 days, no credit cards, no contracts

If you have any questions, please email or call us at +1 (202) 558-6885.